Two Sides of the Same Coin

Every organization pursuing artificial intelligence is having one of two conversations. The first: what is our AI strategy? Where do we deploy? How do we scale? How do we stay competitive? The second — less common, but far more consequential: what is our AI governance?

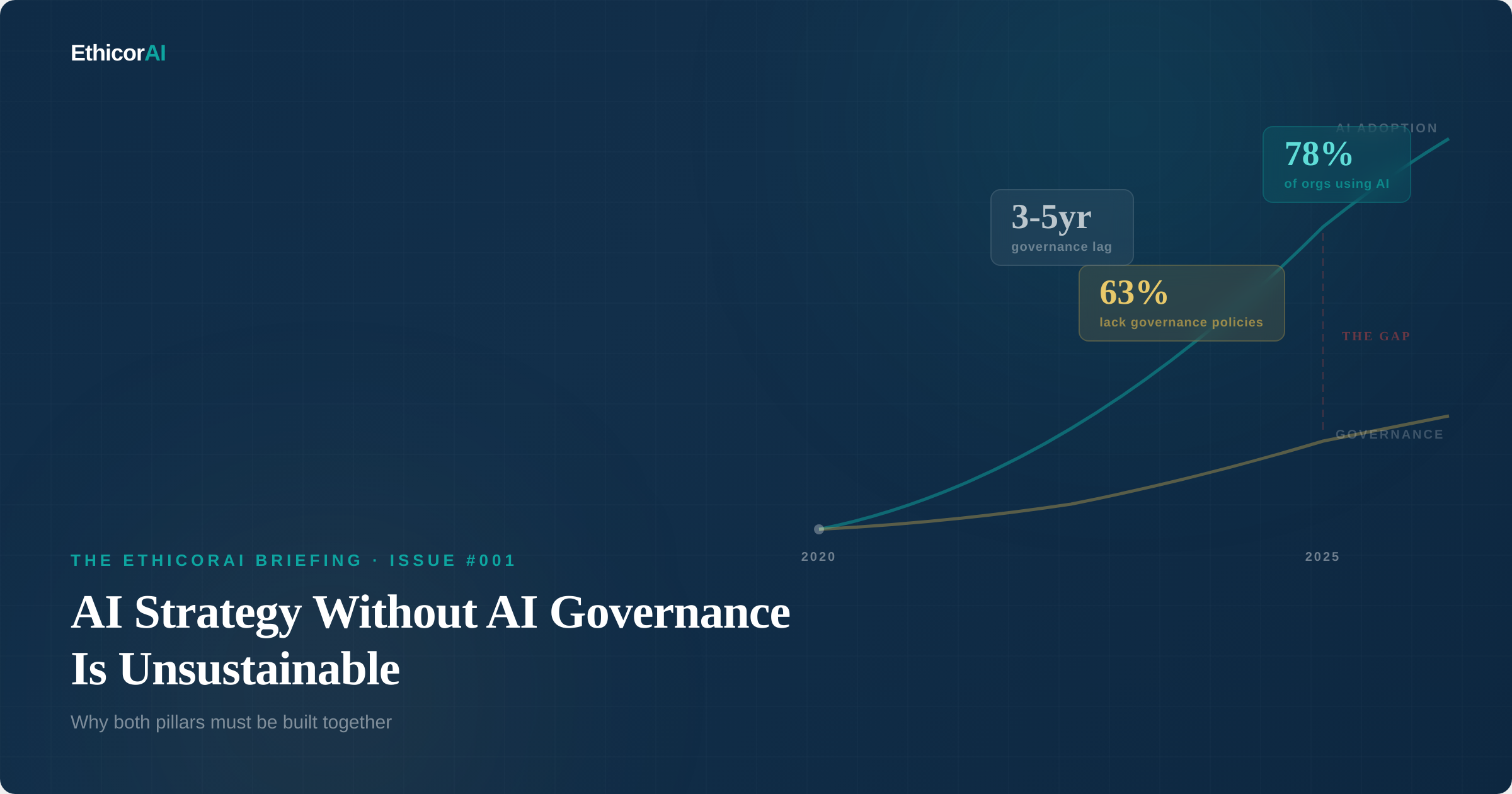

Too often, these conversations happen in separate rooms, led by different teams, with different priorities. Strategy is seen as the accelerator. Governance is seen as the brake. This framing is not just wrong — it's dangerous.

AI strategy without AI governance is unsustainable. And AI governance without AI strategy is directionless. They are not competing priorities. They are two pillars that hold up the same structure. When either one is missing, the whole thing eventually collapses.

Welcome to the first issue of The EthicoRAI Briefing. This newsletter exists because the organizations that get AI right will be the ones that build strategy and governance together — not one before the other, not one instead of the other, but as an integrated capability from day one.

What AI Strategy Actually Looks Like

An AI strategy answers a fundamental question: how will this organization create value with AI? It's the roadmap that connects AI capabilities to business outcomes. While every organization's strategy is different, mature AI strategies typically rest on five pillars:

1. Vision and Use Cases. Where will AI be deployed and why? This includes identifying high-value use cases, prioritizing them by business impact and feasibility, and defining what success looks like. A strategy without clear use cases is just aspiration.

2. Data Infrastructure. AI runs on data. The strategy must address how data will be collected, stored, processed, and governed to support AI initiatives. This includes data quality, data pipelines, data architecture, and access controls. Organizations with poor data foundations consistently fail at AI, regardless of how good their models are.

3. Technology and Architecture. What platforms, tools, and infrastructure will support AI development and deployment? This covers model development environments, deployment pipelines, cloud vs. on-premise decisions, and integration with existing systems. Build vs. buy decisions live here.

4. Talent and Organization. Who will build, deploy, maintain, and oversee AI systems? This includes data scientists, ML engineers, AI product managers, and — critically — governance and ethics professionals. The talent pillar also covers organizational structure: centralized AI teams, federated models, or centers of excellence.

5. Scaling and Operationalization. How does the organization move from AI experiments to AI at scale? This is where most strategies stall. Scaling requires repeatable processes, MLOps practices, monitoring infrastructure, and — here's the connection — governance frameworks that can support dozens or hundreds of AI systems, not just a handful of pilots.

Risk Alert

Most AI strategies focus heavily on pillars 1 through 3 and underinvest in pillars 4 and 5. This creates a pattern that's become all too familiar: impressive pilots that never scale, because the organization lacks the people, processes, and governance to operationalize AI responsibly.

What AI Governance Actually Looks Like

If strategy answers "how will we create value with AI?" then governance answers: how will we create value with AI responsibly, safely, and sustainably?

AI governance is the set of policies, processes, roles, and accountability structures that ensure AI systems are developed, deployed, and operated in ways that are trustworthy and compliant. It's the organizational immune system that detects and manages AI risk before it becomes AI harm.

Mature AI governance programs rest on their own set of pillars:

1. Visibility. You can't govern what you can't see. This starts with an AI inventory — a comprehensive register of every AI system in the organization, including third-party and shadow AI. Each entry captures what the system does, what data it uses, what decisions it influences, and who is accountable.

2. Risk Assessment. For each AI system, governance requires a structured assessment of potential harms across multiple dimensions — fairness, privacy, security, safety, transparency, and more. This assessment determines the level of oversight each system requires and the controls that must be in place.

3. Policies and Standards. Written, communicated, and enforced policies that define acceptable AI use, development standards, deployment requirements, monitoring expectations, and incident response procedures. These policies must be living documents that evolve with the technology and regulatory landscape.

4. Accountability and Oversight. Clear assignment of responsibility for AI governance outcomes. This includes governance roles (Chief AI Officer, AI Ethics Lead, AI Governance Committee), reporting lines, escalation paths, and human oversight requirements for high-risk systems. Accountability without authority is meaningless — governance roles need organizational backing.

5. Continuous Monitoring and Improvement. Governance doesn't end at deployment. It requires ongoing monitoring of AI system performance, fairness metrics, data drift, incident tracking, and regular reviews. The management system approach — plan, do, check, act — ensures governance improves over time rather than becoming stale documentation.

How Strategy and Governance Enable Each Other

Here's the insight that changes everything: strategy and governance are not in tension. They are mutually reinforcing.

Consider how governance enables strategy:

Governance accelerates scaling. Organizations with mature governance programs are positioned to deploy AI more broadly and more quickly than those without. Why? Because governance provides the repeatable assessment processes, risk frameworks, and approval pathways that allow new AI systems to move from development to production without ad hoc deliberation every time. Without governance, every new deployment is a negotiation between legal, IT, compliance, and business teams. With governance, it's a process.

Governance builds the trust that strategy depends on. AI strategy requires buy-in from stakeholders — the board, regulators, customers, employees, and partners. That buy-in depends on trust, and trust depends on demonstrable governance. An organization that can show how it manages AI risk, ensures fairness, and maintains transparency will earn stakeholder confidence that an ungoverned organization never will.

Governance prevents the incidents that derail strategy. A single high-profile AI failure — a biased hiring tool, a data breach, a hallucination incident — can set an AI strategy back by years. Not because the technology failed, but because organizational trust was lost. Governance is the insurance policy that protects strategic investment.

And consider how strategy enables governance:

Strategy provides direction for governance. Governance without strategy is risk management in a vacuum. When governance knows where AI is heading — which use cases, which domains, which risk levels — it can build proportionate controls in advance rather than scrambling to catch up.

Strategy provides resources for governance. Governance programs need investment: people, tools, training, and organizational authority. That investment is much easier to justify when it's framed as an enabler of strategic objectives rather than a standalone compliance cost.

Strategy provides context for proportionality. Good governance is proportional — it applies lighter controls to low-risk AI and rigorous controls to high-risk AI. Understanding the strategic AI portfolio helps governance teams calibrate their oversight appropriately, avoiding both under-governance (risk) and over-governance (bureaucracy).

What To Do

If your organization has an AI strategy document, check whether governance appears in it — not as a footnote, but as a pillar. If it doesn't, that's your first action item. If your organization has neither, start both conversations simultaneously. They should be designed together, not sequentially.

The Cost of Getting This Wrong

The consequences of building strategy without governance are not hypothetical. They are playing out in real time across industries:

Regulatory exposure. The EU AI Act entered into force in August 2024, and its obligations are phasing in, with major compliance deadlines approaching. Organizations that scaled AI without governance are now scrambling to retrofit compliance, at a cost that dwarfs what building it in from the start would have required.

Reputational damage. Public incidents of AI bias, data breaches, and harmful outputs erode customer trust and brand value. In an environment where consumers and employees increasingly expect responsible AI use, ungoverned AI is a reputational time bomb.

Operational disruption. When an ungoverned AI system fails — and it will — the organization lacks the incident response processes to contain the damage quickly. The result is larger impact, longer recovery, and more extensive remediation.

Strategic paralysis. Paradoxically, organizations that skip governance often end up moving slower, not faster. After an incident, leadership becomes risk-averse. Legal blocks new deployments. The board asks hard questions nobody can answer. The AI strategy stalls — not because of governance, but because of its absence.

Five Questions to Assess Where You Stand

A quick self-assessment. If you can answer "yes" to all five, your strategy and governance are likely well-integrated. If not, the gaps you find are exactly where you need to focus.

1. Can you list every AI system operating in your organization? Including third-party tools, embedded AI in SaaS platforms, and tools employees adopted on their own. If the answer is "we think so," the real answer is no.

2. Does every AI system have an accountable owner? Not just a developer or vendor contact — someone in your organization who is responsible for its governance outcomes and risk management.

3. Are your AI systems classified by risk level? With governance requirements proportional to impact. A chatbot and a credit scoring model should not have the same oversight.

4. Do your AI strategy and governance documents reference each other? If strategy and governance were developed independently, they're probably not aligned.

5. Could you respond to an AI incident today? If a model produces biased outcomes, leaks data, or causes harm — do you have a defined process to detect, contain, investigate, and remediate?

Where to Start

If you're beginning from scratch, here is the sequence that works:

Step 1: Inventory. Before anything else, find out what AI you have. Survey department heads, review procurement records, scan vendor contracts. Build a simple register: system name, purpose, data inputs, risk level, owner. A rough inventory today is far more valuable than a perfect one in six months.
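For teams that prefer to start in code rather than a spreadsheet, the register can be sketched in a few lines of Python. This is a minimal illustration, not a prescribed schema: the class name `AISystemEntry`, the helper functions, and the two sample systems are all hypothetical, and the fields simply mirror the five columns named in Step 1.

```python
import csv
import io
from dataclasses import asdict, dataclass
from typing import List


@dataclass
class AISystemEntry:
    """One row in the AI inventory register (hypothetical schema)."""
    name: str
    purpose: str
    data_inputs: List[str]
    risk_level: str = "unassessed"  # filled in later, during risk assessment
    owner: str = ""                 # empty string means no accountable owner yet


def missing_owner(register):
    """Return the names of systems that have no accountable owner."""
    return [entry.name for entry in register if not entry.owner.strip()]


def to_csv(register):
    """Serialize the register to CSV text so it can be shared as a spreadsheet."""
    buf = io.StringIO()
    writer = csv.DictWriter(
        buf, fieldnames=["name", "purpose", "data_inputs", "risk_level", "owner"]
    )
    writer.writeheader()
    for entry in register:
        row = asdict(entry)
        row["data_inputs"] = "; ".join(entry.data_inputs)  # flatten the list for CSV
        writer.writerow(row)
    return buf.getvalue()


# Illustrative entries only; these systems are made up for the example.
register = [
    AISystemEntry("Support chatbot", "Answer customer FAQs",
                  ["help-center articles"], owner="Head of Support"),
    AISystemEntry("Resume screener", "Rank job applicants",
                  ["CVs", "application forms"]),
]

print(missing_owner(register))
print(to_csv(register))
```

Even a rough register like this makes gaps visible right away: any system returned by `missing_owner` is a system nobody is accountable for.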

Step 2: Risk-assess. For each system, evaluate which risk domains apply and at what severity. Focus governance resources on the systems that score highest.
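One way to make Step 2 concrete is a simple scoring pass over the inventory. The sketch below rests on assumptions that are not part of any standard: a 0 to 3 severity scale per domain, a plain sum as the overall score, and hypothetical sample systems. The domain names come from the risk dimensions listed earlier in this issue; a real program would likely weight domains and add qualitative review.

```python
# Risk domains taken from the governance pillars described earlier in this issue.
RISK_DOMAINS = ("fairness", "privacy", "security", "safety", "transparency")


def risk_score(severities):
    """Sum severities across domains (assumed scale: 0 = not applicable, 3 = severe)."""
    for domain, level in severities.items():
        if domain not in RISK_DOMAINS:
            raise ValueError(f"unknown risk domain: {domain}")
        if level not in (0, 1, 2, 3):
            raise ValueError(f"severity for {domain} must be 0-3, got {level}")
    return sum(severities.values())


def prioritize(assessments):
    """Return system names ordered from highest to lowest total score."""
    return sorted(
        assessments,
        key=lambda name: risk_score(assessments[name]),
        reverse=True,
    )


# Illustrative assessments only; the systems and severities are invented.
assessments = {
    "Support chatbot": {"privacy": 1, "transparency": 1},
    "Credit scoring model": {"fairness": 3, "privacy": 2, "transparency": 2},
    "Meeting summarizer": {"privacy": 2},
}

ranking = prioritize(assessments)
print(ranking)
```

The output is an ordered worklist: governance attention goes to the top of the list first, which is the proportionality principle in practice.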

Step 3: Align. Bring your AI strategy and governance leads into the same room. Map governance controls to strategic priorities. Ensure that every strategic AI initiative has a governance pathway, and every governance requirement has strategic context.

What To Do

This week, do one thing: ask your IT, procurement, and department leads to name every AI tool they know about in the organization. Compile the list. That single exercise is the foundation of both your strategy and your governance — and for most organizations, it will be the first time anyone has tried to answer that question.

What's Coming in This Newsletter

The EthicoRAI Briefing will be your weekly guide to AI governance, risk, and regulation. Expect deep dives into frameworks and standards, practical implementation guidance, regulatory updates, and risk analyses grounded in what's actually happening — not what might happen someday.

AI governance is a field being built in real time. The organizations that engage with it now will define the standard for responsible AI. This newsletter is for the people doing that work.

See you next week.

Next Issue

Issue #002: The EU AI Act Explained — What It Actually Requires and When. The world's first comprehensive AI regulation is already in effect. We break down the four risk tiers, the key deadlines, and why it matters even if you're not based in Europe.