The Regulation Everyone's Talking About

If you work in AI, compliance, risk, or technology leadership, you've heard about the EU AI Act. It's the world's first comprehensive AI regulation, and it's already law. Not a proposal. Not a draft. Law.

But there's a gap between knowing the AI Act exists and understanding what it actually requires. This issue closes that gap. We'll walk through the structure, the timelines, the obligations, and the misconceptions — so you can assess what it means for your organization, regardless of where you're based.

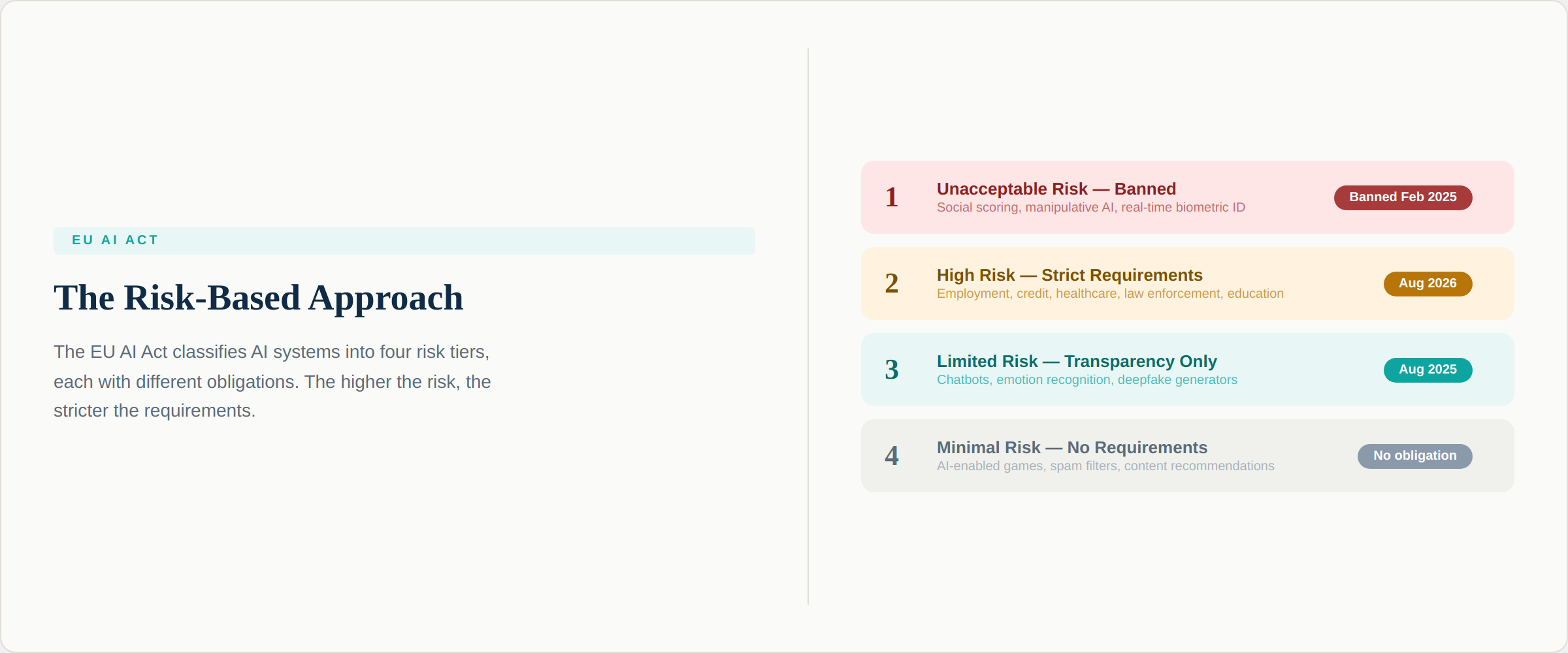

The Architecture: A Risk-Based Approach

The EU AI Act doesn't regulate all AI the same way. Instead, it classifies AI systems into four risk tiers, with obligations proportional to the potential for harm. This risk-based architecture is the single most important concept in the Act.

Tier 1: Unacceptable Risk — Banned. Certain AI practices are prohibited outright because they pose an unacceptable threat to fundamental rights. These include social scoring (by public or private actors), AI that manipulates people through subliminal or exploitative techniques, real-time remote biometric identification in publicly accessible spaces for law enforcement (with narrow exceptions), and AI that infers emotions in workplaces or educational institutions (except for medical or safety reasons). These prohibitions have been legally enforceable since February 2, 2025.

Tier 2: High Risk — Strict Requirements. AI systems used in areas where errors or biases could have significant consequences for individuals face the most extensive compliance requirements. This includes AI used in employment and hiring, credit and insurance decisions, healthcare, law enforcement, education, critical infrastructure management, and migration and border control. Rules for the high-risk use cases listed in Annex III (such as hiring, credit scoring, and education) take effect August 2, 2026, the biggest compliance deadline on the horizon. High-risk AI built into products already covered by EU product-safety legislation, such as medical devices, follows on August 2, 2027.

Tier 3: Limited Risk — Transparency Obligations. AI systems that interact with people or generate content carry transparency requirements under Article 50. Users must be informed when they're interacting with an AI system (chatbots), AI-generated or manipulated content must be labeled (deepfakes, synthetic media), and people exposed to emotion recognition systems must be told the system is in use. These obligations apply from August 2, 2026. General-purpose AI (GPAI) models, including large language models, sit outside the four tiers: they follow a separate set of rules (technical documentation, a copyright policy, training data summaries, and extra duties for models with systemic risk) that have applied since August 2, 2025.

Tier 4: Minimal Risk — No Specific Requirements. The vast majority of AI systems — spam filters, AI-enabled games, content recommendation algorithms — carry minimal risk and face no specific obligations under the Act. However, organizations are encouraged to voluntarily adopt codes of conduct.

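Map every AI system in your inventory to one of these four tiers, and list any GPAI models you provide separately, since they follow their own rules. If any system falls into Tier 1, you should have discontinued it already. If any fall into Tier 2, you have until August 2026 to comply (August 2027 for high-risk AI embedded in regulated products), and that deadline is less than a year away.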
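To make the mapping exercise concrete, here is a minimal Python sketch of an inventory classifier. The keyword sets, the `AISystem` fields, and the `classify` function are hypothetical simplifications; real classification means reading Article 5, Article 6, and Annex III against each use case. The sketch checks the most severe tier first and returns one tier per system, although in practice a high-risk system can also carry transparency duties.

```python
from dataclasses import dataclass
from enum import Enum


class RiskTier(Enum):
    UNACCEPTABLE = 1  # banned outright
    HIGH = 2          # strict requirements
    LIMITED = 3       # transparency obligations
    MINIMAL = 4       # no specific requirements


# Hypothetical, simplified triggers for illustration only; not the Act's legal tests.
PROHIBITED_USES = {"social_scoring", "subliminal_manipulation", "workplace_emotion_inference"}
HIGH_RISK_AREAS = {"employment", "credit", "insurance", "healthcare", "law_enforcement",
                   "education", "critical_infrastructure", "migration"}
TRANSPARENCY_USES = {"chatbot", "synthetic_media", "emotion_recognition"}


@dataclass
class AISystem:
    name: str
    use_case: str  # what the system does
    area: str      # the domain it is used in


def classify(system: AISystem) -> RiskTier:
    """Return the most severe matching tier for one inventory entry."""
    if system.use_case in PROHIBITED_USES:
        return RiskTier.UNACCEPTABLE
    if system.area in HIGH_RISK_AREAS:
        return RiskTier.HIGH
    if system.use_case in TRANSPARENCY_USES:
        return RiskTier.LIMITED
    return RiskTier.MINIMAL


if __name__ == "__main__":
    inventory = [
        AISystem("cv-screener", "candidate_ranking", "employment"),
        AISystem("support-bot", "chatbot", "customer_service"),
        AISystem("spam-filter", "email_filtering", "it_operations"),
    ]
    for s in inventory:
        print(f"{s.name}: {classify(s).name}")
```

Run on this sample inventory, the script prints HIGH for the CV screener, LIMITED for the chatbot, and MINIMAL for the spam filter. Checking tiers from most to least severe means a prohibited use is never masked by a lower-tier match.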

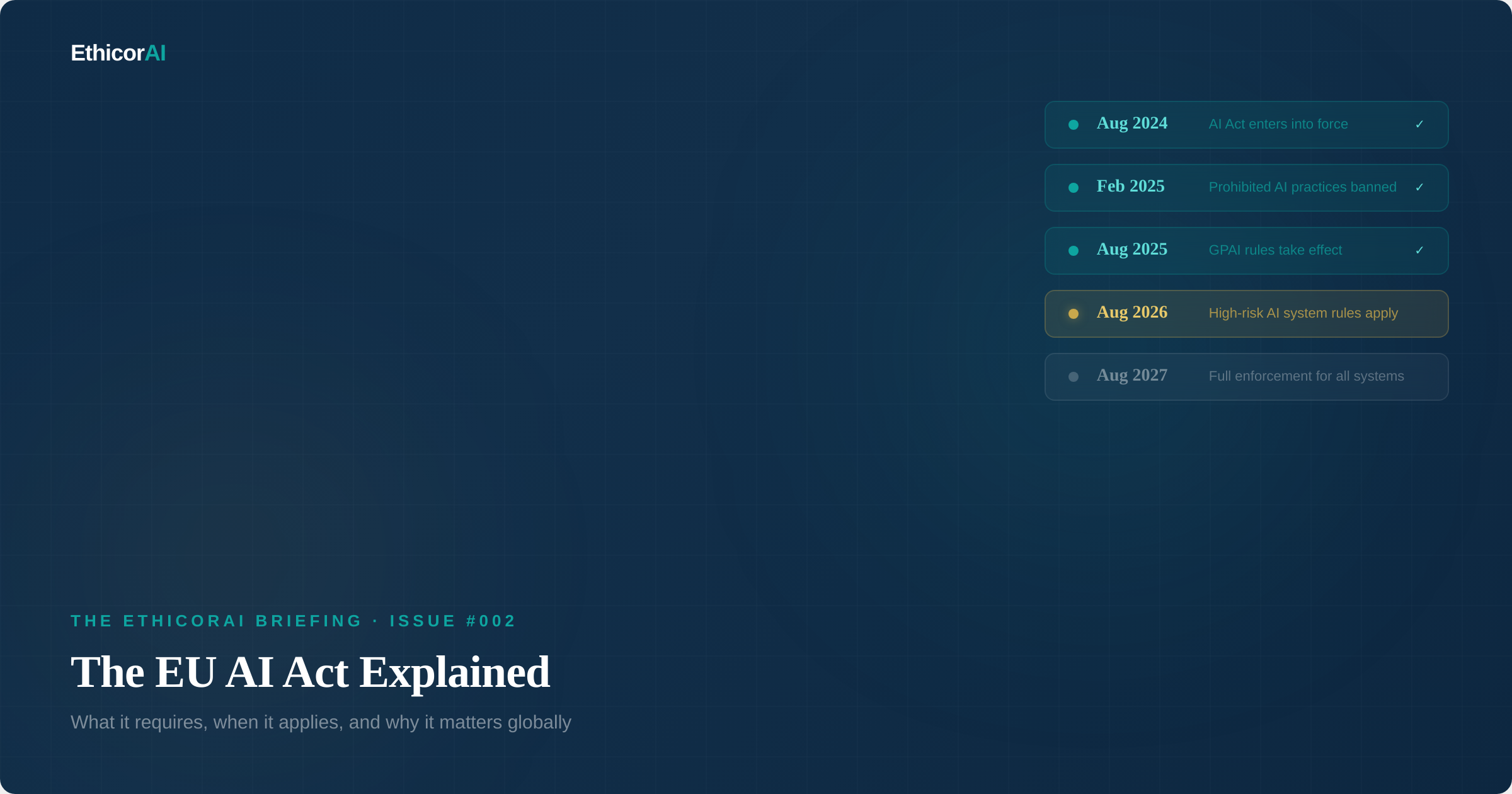

The Timeline: What's Happened and What's Coming

The AI Act entered into force on August 1, 2024, but its requirements apply gradually over a multi-year timeline. Understanding where we are in that timeline is essential for planning.

August 1, 2024: The AI Act formally entered into force. No requirements applied yet — this started the clock on the phased implementation.

February 2, 2025: Prohibited AI practices became legally enforceable. Organizations operating banned AI systems must have ceased their use by this date. AI literacy requirements (Article 4) also took effect: providers and deployers must take measures to ensure their staff have a sufficient level of AI literacy.

August 2, 2025: Rules for General-Purpose AI (GPAI) models became applicable. Providers of GPAI models must now maintain technical documentation, share information with downstream providers, implement a copyright compliance policy, and publish a summary of the content used for training. The governance provisions also took effect: the EU AI Office took up its role overseeing GPAI models, and Member States were required to designate national competent authorities. A GPAI Code of Practice, published in July 2025, gives providers a voluntary way to demonstrate compliance.

August 2, 2026: This is the major compliance deadline. Requirements for the high-risk AI systems listed in Annex III take effect. Providers must complete conformity assessments, maintain quality management systems, ensure human oversight mechanisms, conduct post-market monitoring, and register high-risk systems in the EU database. Transparency obligations under Article 50 also start to apply. Each Member State must have established at least one AI regulatory sandbox.

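August 2, 2027: Requirements apply to high-risk AI systems that are safety components of products covered by the EU harmonisation legislation listed in Annex I (for example, medical devices and machinery). GPAI models placed on the market before August 2, 2025 must be fully compliant by this date. High-risk systems already on the market before August 2, 2026 generally come into scope only if their design changes significantly afterwards, although those intended for use by public authorities must comply by August 2, 2030.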
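One way to keep track of where you stand is to store the milestones as data and compare them against today's date. The sketch below is a hypothetical helper; `MILESTONES`, `split_milestones`, and `days_until` are illustrative names, and the labels are condensed from the five dates in this timeline, so it does not model any later legacy deadlines.

```python
from datetime import date

# Milestones condensed from the timeline above (simplified labels, hypothetical structure).
MILESTONES = [
    (date(2024, 8, 1), "Act enters into force"),
    (date(2025, 2, 2), "Prohibited practices and AI literacy"),
    (date(2025, 8, 2), "GPAI model obligations and governance"),
    (date(2026, 8, 2), "High-risk requirements and Article 50 transparency"),
    (date(2027, 8, 2), "Pre-August-2025 GPAI models must comply"),
]


def split_milestones(as_of: date) -> tuple[list[str], list[str]]:
    """Split milestones into those already applicable and those still upcoming."""
    applied = [label for when, label in MILESTONES if when <= as_of]
    upcoming = [label for when, label in MILESTONES if when > as_of]
    return applied, upcoming


def days_until(deadline: date, as_of: date) -> int:
    """Calendar days remaining before a deadline (negative once it has passed)."""
    return (deadline - as_of).days


if __name__ == "__main__":
    today = date.today()
    applied, upcoming = split_milestones(today)
    print("Already applicable:", applied)
    print("Still upcoming:", upcoming)
    print("Days to August 2, 2026:", days_until(date(2026, 8, 2), today))
```

Keeping the dates as data rather than hard-coding them into checks makes it easy to add entries if the timeline is amended.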

If you're a provider or deployer of high-risk AI systems in the Annex III categories, August 2026 is your compliance deadline, and it is now less than 9 months away. The conformity assessment, technical documentation, and quality management system requirements are substantial. Organizations that haven't started preparing are already behind.

The Roles: Who Is Responsible for What?

The AI Act assigns different obligations depending on your role in the AI supply chain. Understanding which role you occupy is the first step in determining your compliance obligations.

Providers are organizations that develop an AI system or have one developed on their behalf, and place it on the market or put it into service under their own name or trademark, whether for payment or free of charge. Providers carry the heaviest obligations: conformity assessments, technical documentation, quality management systems, post-market monitoring, and incident reporting.

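Deployers are organizations that use an AI system under their authority, except for personal non-professional activity. Deployers must use AI systems in accordance with the provider's instructions, ensure human oversight, and monitor system performance. Deployers that are public bodies or provide public services, and those using high-risk AI for credit scoring or for life and health insurance pricing, must also conduct a fundamental rights impact assessment before putting a high-risk system into use.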

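Importers and distributors have verification obligations. Importers must check that the provider has met its requirements (for example, that the conformity assessment has been carried out and the technical documentation exists) before placing a system on the EU market; distributors must run similar checks before making it available.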

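A critical nuance: if you put your name or trademark on a high-risk AI system, make a substantial modification to one, or change an AI system's intended purpose so that it becomes high-risk, you are treated as a provider yourself (Article 25) and inherit the full set of provider obligations.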
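The role analysis can be sketched as a function over a few yes/no facts about your involvement with a system. The `Involvement` fields and the `roles` function are hypothetical, and the reclassification triggers are a simplified reading of Article 25, which concerns high-risk systems. Note that one organization can hold both roles, for example when it builds a hiring model in-house and also uses it.

```python
from dataclasses import dataclass


@dataclass
class Involvement:
    """Yes/no facts about one organization's relationship to one AI system (hypothetical model)."""
    develops_or_commissions: bool = False           # built it, or had it built on its behalf
    markets_under_own_name: bool = False            # places it on the market or into service under its own name
    uses_under_own_authority: bool = False          # uses it professionally, not for personal non-professional use
    rebrands_high_risk_system: bool = False         # puts its name or trademark on someone else's high-risk system
    substantially_modifies_high_risk: bool = False  # makes a substantial modification to a high-risk system
    repurposes_into_high_risk: bool = False         # changes the intended purpose so the system becomes high-risk


def roles(inv: Involvement) -> set[str]:
    """Return the roles an organization likely holds under a simplified reading of the Act."""
    result: set[str] = set()
    original_provider = inv.develops_or_commissions and inv.markets_under_own_name
    # Any of these simplified Article 25 triggers makes the organization a provider.
    reclassified = (inv.rebrands_high_risk_system
                    or inv.substantially_modifies_high_risk
                    or inv.repurposes_into_high_risk)
    if original_provider or reclassified:
        result.add("provider")
    if inv.uses_under_own_authority:
        result.add("deployer")
    return result


if __name__ == "__main__":
    # An in-house hiring model that the company also uses itself: both roles.
    print(sorted(roles(Involvement(develops_or_commissions=True, markets_under_own_name=True,
                                   uses_under_own_authority=True))))
    # A company using a vendor's tool unchanged: deployer only.
    print(sorted(roles(Involvement(uses_under_own_authority=True))))
```

Returning a set rather than a single label reflects that provider and deployer obligations stack when an organization occupies both positions.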

The Extraterritorial Reach

Perhaps the most important misconception about the EU AI Act: it doesn't only apply to EU companies.

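The Act applies to any organization that places an AI system on the EU market or puts one into service within the EU, regardless of where that organization is based. It also reaches providers and deployers established outside the EU whenever the output of their AI system is used in the EU. If you're a US, Indian, or Singaporean company and your AI system is offered to users in the EU, or its outputs are used there, you're in scope. What matters is where the system is used, not the nationality of the people it affects.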

This is the same extraterritorial logic that made the GDPR a global standard for data protection, and the AI Act is designed to have the same effect for AI governance. Many organizations worldwide are treating it as a de facto compliance benchmark, on the reasoning that a program built to the AI Act's requirements will already cover much of what other AI frameworks ask for.

Common Misconceptions

"The AI Act only affects big tech companies." Wrong. The Act applies based on risk level and role, not company size. A 50-person company that deploys AI for hiring decisions faces the same high-risk obligations as a multinational. SMEs and startups get some accommodations (priority, free-of-charge access to regulatory sandboxes, conformity assessment fees proportionate to their size, simplified technical documentation, and lower fine caps), but the core requirements apply equally.

"We just use AI tools — we don't develop them." Being a deployer doesn't exempt you. Deployers have their own set of obligations, including ensuring human oversight, conducting impact assessments for high-risk systems, and reporting serious incidents to authorities.

"Compliance can wait until 2027." The phased timeline means different deadlines for different obligations. Prohibited practices are already enforceable. GPAI rules are already active. High-risk requirements hit in August 2026. Waiting until 2027 means you've missed multiple compliance deadlines.

"The Act doesn't apply outside Europe." As discussed above, the extraterritorial reach means this applies to any organization whose AI systems affect people in the EU.

Determine your role under the AI Act (provider, deployer, or both). Map your AI systems to the risk tiers. Identify which deadlines apply to you. If high-risk systems are in scope, begin your conformity assessment preparation now — not in 2026.

The Enforcement Question

The AI Act carries significant penalties. Violations of prohibited practices can result in fines of up to 35 million euros or 7% of total worldwide annual turnover. Non-compliance with most other obligations, including the high-risk requirements, can trigger fines of up to 15 million euros or 3% of turnover. Providing incorrect information to authorities carries fines of up to 7.5 million euros or 1% of turnover. In each case the higher of the two amounts applies, except for SMEs and startups, for which the lower one applies.
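Because each cap combines a fixed amount with a share of turnover, the percentage dominates for large companies. The sketch below works through that arithmetic; `FINE_CAPS`, `max_fine`, and the violation keys are hypothetical names, and the SME branch reflects Article 99(6), under which SMEs and startups face the lower of the two amounts. It computes an upper bound only; actual fines depend on the circumstances of each case.

```python
# Fine caps: (fixed amount in EUR, percent of total worldwide annual turnover).
# The violation keys are hypothetical labels, not terms defined in the Act.
FINE_CAPS = {
    "prohibited_practice": (35_000_000, 7),
    "other_obligation": (15_000_000, 3),      # includes the high-risk requirements
    "incorrect_information": (7_500_000, 1),
}


def max_fine(violation: str, annual_turnover_eur: int, is_sme: bool = False) -> float:
    """Upper bound of the fine for one violation category.

    Large undertakings face whichever amount is higher; SMEs and startups
    face whichever is lower (Article 99(6)).
    """
    fixed, percent = FINE_CAPS[violation]
    turnover_based = annual_turnover_eur * percent / 100
    candidates = (float(fixed), turnover_based)
    return min(candidates) if is_sme else max(candidates)


if __name__ == "__main__":
    print(max_fine("prohibited_practice", 2_000_000_000))  # 7% of 2 bn exceeds 35 m
    print(max_fine("prohibited_practice", 100_000_000))    # 35 m exceeds 7% of 100 m
    print(max_fine("prohibited_practice", 10_000_000, is_sme=True))
```

For a company with 2 billion euros in annual turnover, a prohibited-practice violation is capped at 140 million euros, since 7% of turnover exceeds the 35 million euro fixed amount.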

Enforcement will be handled at two levels: the EU AI Office oversees GPAI model compliance and systemic risks, while national market surveillance authorities handle enforcement for all other AI systems. The effectiveness of enforcement remains to be seen — many Member States are still establishing their competent authorities — but the legal framework and penalty structure are in place.

What This Means for Your Organization

The EU AI Act is not the only AI regulation in the world — and future issues will cover the global regulatory landscape in detail. But it is the most comprehensive, the most detailed, and the most influential. Whether or not you operate in the EU, understanding the AI Act is essential because it is establishing the global baseline for AI governance expectations.

The practical takeaway: build your AI governance program to the EU AI Act standard, and you'll be well-positioned for whatever comes next — whether that's regulation in your home jurisdiction, client due diligence requirements, or industry certification.

Next Issue

Issue #003: AI Risk Taxonomy — The 6 Domains Every Governance Leader Must Know. Before you can manage AI risk, you need a shared vocabulary for it. We break down the six risk domains that define the AI governance landscape — from bias and fairness to accountability.