Before You Can Manage Risk, You Need a Language for It

In our first issue, we made the case that AI strategy and governance must work together. In our second, we walked through the EU AI Act. This week, we go deeper into the substance of what governance is actually managing: risk.

AI risk is not a single thing. It's a landscape — a set of distinct but interconnected domains, each with its own failure modes, metrics, and mitigation strategies. Without a shared vocabulary for these domains, governance conversations become fragmented: the legal team worries about compliance, the data team worries about privacy, engineering worries about reliability, and nobody sees the full picture.

This issue provides that shared vocabulary. The taxonomy we present here is grounded in ISO/IEC 42001 — the international standard for AI management systems — which defines objectives related to responsible AI use across nine interconnected areas. This isn't an academic exercise. It's the framework that auditors, regulators, and governance professionals use in practice.

Why AI Risk Is Different

Before we map the domains, it's worth understanding why AI risk requires its own taxonomy — why we can't simply extend existing risk frameworks from cybersecurity or operational risk management.

Three characteristics make AI risk distinct:

Opacity. Many AI systems — particularly deep learning models — operate as black boxes. Unlike traditional software where you can trace a decision through code, AI models learn patterns from data in ways that even their creators can't fully explain. This opacity means risks can be invisible until they manifest as harm.

Emergence. AI risks often emerge from the interaction between models, data, and context — not from any single component. A model trained on historical hiring data might perform well on accuracy metrics but systematically discriminate against protected groups. The bias isn't "in" the model or "in" the data — it emerges from their interaction.

Scale. AI systems make decisions at a speed and scale that humans cannot manually review. A credit scoring model might evaluate thousands of applications per day. When something goes wrong, the impact compounds rapidly before anyone notices.

The most dangerous AI risks are the ones that look like success. A hiring model that improves "efficiency" by 30% might be doing so by systematically filtering out qualified candidates from underrepresented groups. The metric says it's working. The impact says otherwise.

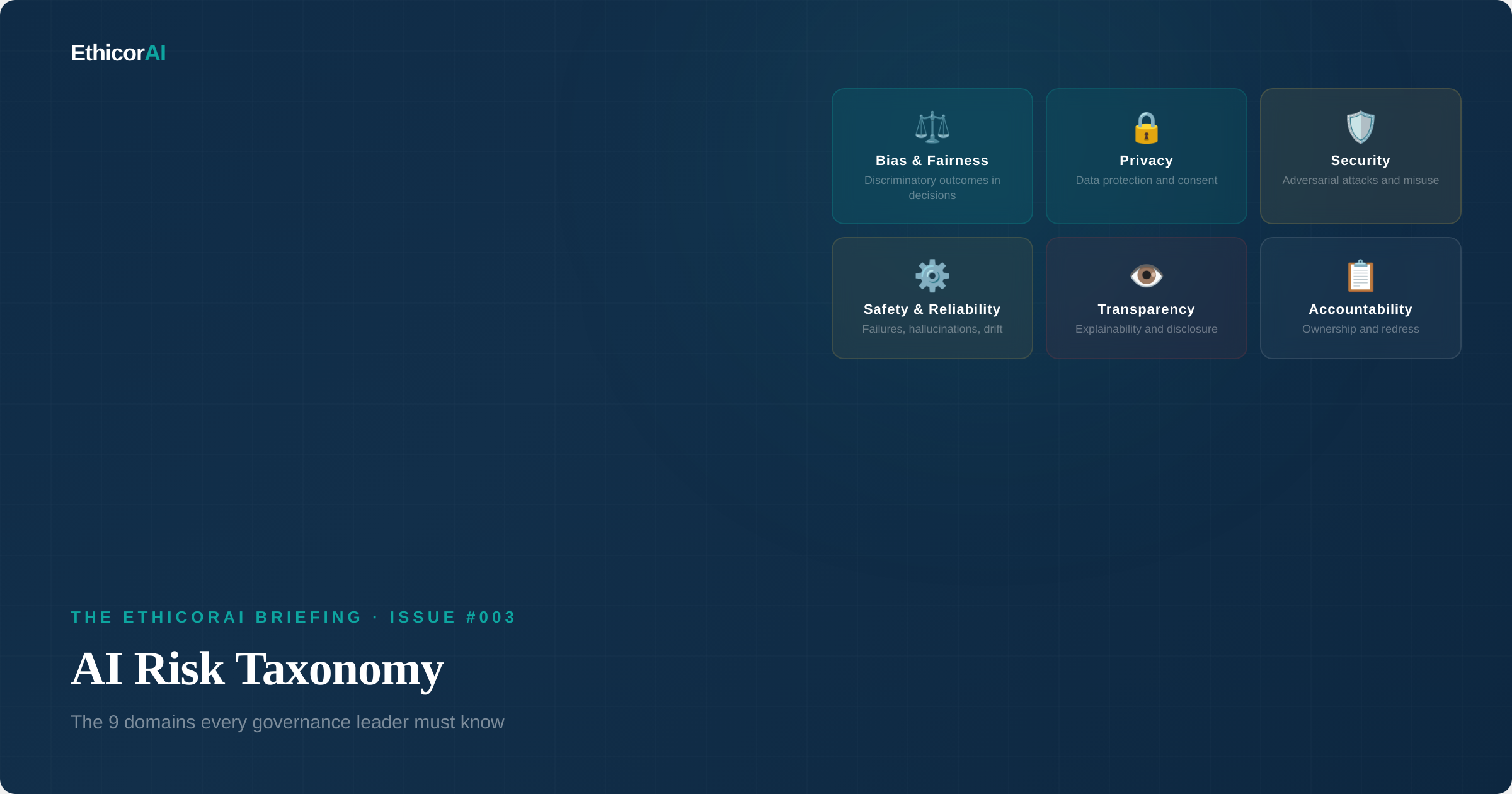

The Nine Domains of AI Risk

ISO/IEC 42001 — the world's first certifiable AI management system standard — identifies objectives related to responsible AI use that map to nine interconnected risk domains. These domains aren't isolated concerns; they overlap and reinforce each other. But understanding each one individually is essential for building comprehensive governance.

1. Fairness and Bias

What it is: The risk that AI systems produce outcomes that systematically disadvantage certain groups based on protected characteristics — race, gender, age, disability, religion, or other attributes.

Why it matters: Bias in AI isn't just an ethics concern; it's increasingly a legal one. The EU AI Act requires providers of high-risk systems to examine their training, validation, and testing data for possible biases. NYC Local Law 144 mandates annual bias audits for automated employment decision tools. Existing anti-discrimination laws already apply to AI-assisted decisions.

Real-world example: A major technology company's AI recruiting tool was found to penalize resumes containing the word "women's." The model had learned from historical hiring data that predominantly featured male candidates and encoded that pattern as a preference. The system was decommissioned, but not before it had influenced real hiring decisions.

Key risk factors: Training data that underrepresents certain groups. Historical data that encodes past discrimination. Proxy variables that correlate with protected characteristics. Feedback loops where biased outputs influence future training data.
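One common way to quantify this kind of disparity is the impact ratio: each group's selection rate divided by the selection rate of the most-selected group. The sketch below is a minimal illustration, not an audit methodology. The function name, group labels, and counts are hypothetical, and the 0.8 cutoff is the "four-fifths" screening convention from US employment practice, used here as an assumption rather than a legal test.

```python
from collections import Counter

def impact_ratios(outcomes):
    """Return each group's selection rate divided by the highest group's rate.

    `outcomes` is an iterable of (group, selected) pairs, where `selected`
    is True if the candidate advanced. Group labels are hypothetical.
    """
    totals, selected = Counter(), Counter()
    for group, was_selected in outcomes:
        totals[group] += 1
        if was_selected:
            selected[group] += 1
    rates = {g: selected[g] / totals[g] for g in totals}
    best = max(rates.values())
    if best == 0:
        raise ValueError("no group has any selections; ratios are undefined")
    return {g: rate / best for g, rate in rates.items()}

# Hypothetical screening results: 100 candidates per group.
sample = (
    [("group_a", True)] * 50 + [("group_a", False)] * 50
    + [("group_b", True)] * 30 + [("group_b", False)] * 70
)
ratios = impact_ratios(sample)

# Flag groups whose ratio falls below the assumed four-fifths threshold.
flagged = sorted(g for g, r in ratios.items() if r < 0.8)
print(ratios)
print(flagged)
```

A low ratio is a signal to investigate, not proof of discrimination; proxy variables and small sample sizes both need separate scrutiny.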

2. Transparency

What it is: The risk that stakeholders — users, affected individuals, regulators — cannot understand that AI is being used, what role it plays in decisions, or how it was developed and validated.

Why it matters: Transparency is both a regulatory requirement and a trust imperative. The EU AI Act requires disclosure when users interact with AI systems. It's the foundation upon which other governance objectives rest — you can't assess fairness, demand accountability, or exercise oversight without knowing that AI is involved in the first place.

Key risk factors: Failure to disclose AI usage to affected individuals. Insufficient documentation of model design, training data, and validation processes. Lack of model cards and system descriptions. Absence of clear communication about AI capabilities and limitations.

3. Explainability

What it is: The risk that the reasoning behind AI-driven decisions cannot be understood or communicated to those who need to understand them — whether that's an affected individual, an auditor, or a governance committee.

Why it matters: Explainability is distinct from transparency. Transparency says "AI is being used." Explainability says "here's how it reached this decision." Under GDPR, Article 22 restricts decisions based solely on automated processing that have legal or similarly significant effects, and Articles 13 to 15 entitle individuals to meaningful information about the logic involved. Beyond compliance, explainability is essential for human oversight: you can't effectively oversee a system you can't interpret.

Real-world example: An insurance company deployed an AI system to assess claims but could not explain to claimants why their claims were denied. When challenged, even the technical team couldn't articulate the model's decision logic. The inability to provide explanations created regulatory exposure, customer complaints, and ultimately a system redesign.

Key risk factors: Black-box models that resist interpretation. Complex model architectures where explanation is possible in principle but too costly or slow to produce in practice. Trade-offs between model performance and explainability. Explanations that are technically accurate but meaningless to non-technical stakeholders.

4. Reliability

What it is: The risk that AI systems produce incorrect, inconsistent, or unpredictable outputs — including hallucinations, model drift, and failure to perform as intended under real-world conditions.

Why it matters: AI systems can fail in ways that traditional software does not. Language models hallucinate — generating confident responses that are factually wrong. Models drift over time as real-world data diverges from training distributions. Performance validated in testing environments may not hold in production.

Real-world example: A lawyer used a generative AI tool to draft a legal brief citing six court cases. All six were hallucinated — they didn't exist. The brief was submitted to court without verification, resulting in sanctions and a landmark moment in the debate about AI reliability in professional settings.

Key risk factors: Hallucination in generative AI. Model drift as real-world conditions change. Models expressing high confidence in wrong answers. Insufficient testing for edge cases and adversarial conditions. Performance gaps between development and production environments.
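Drift, at least, can be monitored with simple statistics. One widely used measure is the Population Stability Index (PSI), which compares how a feature or model score is distributed in production against a baseline sample. The sketch below is a minimal pure-Python version under stated assumptions: equal-width bins, a small floor to avoid taking the log of zero, and hypothetical score data. A commonly cited rule of thumb treats PSI above roughly 0.25 as a significant shift; that threshold is a convention, not a requirement of any standard discussed here.

```python
import math

def population_stability_index(expected, actual, bins=10):
    """PSI between a baseline sample and a production sample of one numeric feature.

    Bin edges are equal-width over the baseline's range; values outside that
    range fall into the first or last bin. A small floor avoids log(0).
    """
    lo, hi = min(expected), max(expected)
    if hi == lo:
        raise ValueError("baseline has no spread; PSI is undefined")
    width = (hi - lo) / bins

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1
        return [max(c / len(values), 1e-6) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))

# Hypothetical model scores: production has shifted toward higher values.
baseline = [i / 100 for i in range(100)]          # spread evenly over [0, 1)
production = [0.5 + i / 200 for i in range(100)]  # concentrated in [0.5, 1)

psi_same = population_stability_index(baseline, baseline)
psi_shifted = population_stability_index(baseline, production)
print(psi_same)            # identical samples give zero
print(psi_shifted > 0.25)  # the shifted sample exceeds the rule-of-thumb threshold
```

PSI only detects that inputs or scores have moved. Whether accuracy has actually degraded still requires labelled outcomes.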

5. Safety

What it is: The risk that AI systems cause physical, psychological, or economic harm to individuals or communities — through direct action, flawed recommendations, or failures in critical applications.

Why it matters: Safety becomes paramount when AI operates in domains where errors have serious consequences: healthcare, transportation, critical infrastructure, and financial services. Unlike reliability (which concerns accuracy), safety concerns the downstream impact of failures on human wellbeing.

Key risk factors: AI in safety-critical applications without adequate fail-safes. Autonomous systems making decisions without human oversight in high-stakes contexts. Cascading failures across multi-model pipelines. Insufficient human override capabilities.

For every AI system in your inventory, ask: what happens when it's wrong? If the answer involves impact on individuals — denied services, incorrect medical guidance, financial harm — that system requires mandatory human oversight and output verification processes.

6. Robustness and Redundancy

What it is: The risk that AI systems break down under unexpected conditions, adversarial inputs, or stress — and that no fallback mechanisms exist to maintain functionality or safety when they do.

Why it matters: Robustness is the system's ability to maintain performance under conditions it wasn't specifically designed for. Redundancy is the organizational backup plan when robustness fails. Together, they determine whether an AI failure is a minor hiccup or a catastrophic breakdown.

Real-world example: Researchers demonstrated that small, carefully designed perturbations, including inconspicuous stickers placed on physical signs, could cause image classifiers of the kind used in vehicle perception to misidentify stop signs as speed limit signs. The same class of vulnerability, adversarial fragility, applies to any AI system that processes external inputs.

Key risk factors: Lack of adversarial testing. No graceful degradation pathways when systems encounter out-of-distribution inputs. Single points of failure with no fallback. Insufficient stress testing under edge conditions. Missing manual override capabilities.
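A graceful degradation pathway can be as simple as a wrapper that refuses to let a model failure become a system failure. The sketch below assumes a hypothetical `predict` callable that returns a label and a confidence score; the names, the 0.7 threshold, and the `route_to_human_review` fallback are illustrative choices, not a prescribed design.

```python
from dataclasses import dataclass
from typing import Callable, Tuple

@dataclass
class Decision:
    label: str
    source: str  # "model" or "fallback"

def with_fallback(
    predict: Callable[[dict], Tuple[str, float]],
    fallback_label: str = "route_to_human_review",
    min_confidence: float = 0.7,
) -> Callable[[dict], Decision]:
    """Wrap a model call so failures and low-confidence outputs degrade gracefully."""
    def guarded(features: dict) -> Decision:
        try:
            label, confidence = predict(features)
        except Exception:
            # The model crashed or rejected the input: do not fail the whole request.
            return Decision(fallback_label, "fallback")
        if confidence < min_confidence:
            # The model answered but is unsure: defer instead of acting on it.
            return Decision(fallback_label, "fallback")
        return Decision(label, "model")
    return guarded

# Hypothetical model stub standing in for a real classifier.
def toy_model(features: dict) -> Tuple[str, float]:
    amount = features["amount"]  # raises KeyError on malformed input
    return ("approve", 0.95) if amount < 1000 else ("approve", 0.40)

decide = with_fallback(toy_model)
print(decide({"amount": 200}))   # confident prediction is passed through
print(decide({"amount": 5000}))  # low confidence is routed to the fallback
print(decide({}))                # malformed input is routed to the fallback
```

A confidence threshold alone is not enough, since models can be confidently wrong; in practice it would sit alongside input validation and adversarial testing.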

7. Privacy

What it is: The risk that AI systems collect, process, store, or expose personal data in ways that violate privacy rights, regulatory requirements, or individual expectations.

Why it matters: AI systems are data-hungry by nature. Training models requires vast amounts of data — some of which inevitably includes personal information. The intersection of AI and privacy regulation (GDPR, CCPA, India's DPDP Act) creates complex compliance challenges that span the entire AI lifecycle from data collection through model deployment.

Real-world example: Employees at several organizations were found pasting confidential customer data, internal reports, and proprietary code into public AI chatbots. Depending on the provider's terms, data submitted this way can be retained and used for model training, creating both privacy violations and intellectual property exposure. This "shadow AI" problem is one we'll explore in detail in a future issue.

Key risk factors: Training data containing personal information without consent. Inference attacks that extract personal data from model outputs. Data minimization failures. Cross-border transfers violating jurisdictional requirements. Inadequate retention and deletion practices.

8. Security

What it is: The risk that AI systems are vulnerable to adversarial attacks, unauthorized access, manipulation, or misuse — either as targets of attacks or as tools weaponized by attackers.

Why it matters: AI introduces attack surfaces that traditional cybersecurity doesn't address. Prompt injection can manipulate language models. Data poisoning can corrupt training datasets. Model extraction can steal intellectual property. And increasingly, attackers use AI to automate and scale their own attack capabilities.

Key risk factors: Prompt injection and goal hijacking. Data poisoning of training datasets. Model theft and extraction. Supply chain compromise in AI development tools. The recursive problem: deploying AI for security creates new security risks (a paradox we'll explore in depth later).

9. Accessibility

What it is: The risk that AI systems create barriers for people with disabilities or exclude populations from their benefits — either by design, by default, or through failure to consider diverse user needs.

Why it matters: AI has enormous potential to improve accessibility — voice interfaces, real-time captioning, assistive technologies. But poorly designed AI can also create new barriers: voice recognition that fails for speech impairments, facial recognition that doesn't work for certain skin tones, interfaces that aren't compatible with screen readers, or automated systems that lack alternative access paths.

Key risk factors: Training data that underrepresents people with disabilities. Interface designs that assume specific physical or cognitive capabilities. Automated processes with no human-accessible alternative. Failure to test with diverse user populations including those using assistive technologies.

Accessibility is the domain most frequently overlooked in AI governance programs — yet it intersects with fairness, transparency, and safety. An AI system that excludes people with disabilities from accessing services isn't just an accessibility failure; it's a fairness and potentially a legal failure.

10. Accountability

There is a tenth dimension that sits above the nine domains: accountability. While ISO/IEC 42001 treats accountability as a cross-cutting principle, in practice it functions as its own risk domain: the risk that no person or entity is clearly responsible for the governance outcomes of an AI system.

When a model makes a harmful decision, who is responsible — the developer, the deployer, the data provider, or the user? The EU AI Act addresses this by assigning specific obligations to specific roles. But organizational accountability — who within your organization owns AI risk — is something you must define yourself.

Real-world example: When an AI chatbot deployed by an airline provided incorrect refund information, the airline argued the chatbot was a separate entity. A tribunal rejected this argument, ruling the airline liable for information provided by its AI. The precedent: you own what your AI says and does.

How Risks Change by AI Type

The risk profile changes significantly depending on the type of AI system:

Classical Machine Learning (decision trees, regression, random forests). Primary risks: fairness (biased training data), reliability (model drift), and explainability. These are well-understood systems with established testing methodologies.

Generative AI (large language models, image generators, code assistants). Primary risks: reliability (hallucinations), privacy (data leakage through prompts), safety (harmful content generation), and transparency (users not knowing AI generated the content). Hallucination and prompt-based data leakage in particular have no close analogue in classical ML.

Agentic AI (autonomous agents that take actions, call APIs, chain decisions). Primary risks: all of the above, plus safety (unauthorized actions), security (privilege escalation, goal hijacking), robustness (cascading failures), and accountability (attribution gaps across multi-agent chains). This is the frontier of AI risk. We'll dedicate significant coverage to it in future issues.

From Taxonomy to Action

A taxonomy is only useful if it drives action. Here's how to put this framework to work:

1. Map your inventory to the nine domains. For each AI system, assess which risk domains are relevant and at what severity. A hiring AI triggers fairness, privacy, transparency, and explainability risks. A customer service chatbot triggers reliability, safety, transparency, and accountability risks. Not every system triggers every domain.

2. Build domain-specific expertise. Your governance team doesn't need to be expert in all nine domains simultaneously. Identify which are most relevant to your AI portfolio and build depth there first. Partner with existing functions — legal for accountability, security for adversarial risks, data teams for privacy, accessibility specialists for inclusive design.

3. Prioritize by impact. Focus governance attention on the systems and domains where the intersection of likelihood and impact is highest. A high-bias-risk system affecting thousands of job applicants deserves more immediate attention than a low-risk content recommendation algorithm.
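The prioritization in step 3 can be made explicit with a simple score. The sketch below assumes a conventional 1-to-5 scale for both likelihood and impact and multiplies them; the scale, the system names, and the ratings are all hypothetical and should be replaced with your organization's own criteria.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class RiskItem:
    system: str
    domain: str
    likelihood: int  # 1 (rare) to 5 (almost certain), an assumed scale
    impact: int      # 1 (negligible) to 5 (severe), an assumed scale

    @property
    def score(self) -> int:
        return self.likelihood * self.impact

# Hypothetical assessments for three systems.
items = [
    RiskItem("hiring_screener", "fairness", likelihood=4, impact=5),
    RiskItem("content_recommender", "fairness", likelihood=2, impact=2),
    RiskItem("support_chatbot", "reliability", likelihood=4, impact=3),
]

# Highest score first: this is the order in which to spend governance attention.
ranked = sorted(items, key=lambda r: r.score, reverse=True)
for r in ranked:
    print(f"{r.score:>2}  {r.system} / {r.domain}")
```

A multiplied score is a blunt instrument. Many teams also apply an override so that any item with severe impact is reviewed regardless of its likelihood.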

Create a risk matrix: list your AI systems as rows and the nine domains as columns. For each cell, mark the risk level (High, Medium, Low, or N/A). This single exercise gives you a comprehensive view of your AI risk landscape and shows where to focus governance resources first. It is also the foundation for the AI system impact assessment required under ISO/IEC 42001.
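A spreadsheet is enough for this exercise, but the same matrix is easy to keep in code alongside an AI inventory. The sketch below is one possible representation: the system names and ratings are hypothetical, and the domain keys are shorthand for the nine domains in this issue.

```python
DOMAINS = [
    "fairness", "transparency", "explainability", "reliability", "safety",
    "robustness", "privacy", "security", "accessibility",
]
LEVELS = ("High", "Medium", "Low", "N/A")

# Hypothetical ratings; any domain not listed for a system defaults to "N/A".
ratings = {
    "hiring_screener": {"fairness": "High", "privacy": "High",
                        "transparency": "Medium", "explainability": "High"},
    "support_chatbot": {"reliability": "High", "safety": "Medium",
                        "transparency": "Medium"},
}

def build_matrix(ratings):
    """Expand sparse ratings into a full systems-by-domains grid."""
    matrix = {}
    for system, cells in ratings.items():
        unknown = set(cells) - set(DOMAINS)
        if unknown:
            raise ValueError(f"{system}: unknown domains {sorted(unknown)}")
        invalid = set(cells.values()) - set(LEVELS)
        if invalid:
            raise ValueError(f"{system}: unknown levels {sorted(invalid)}")
        matrix[system] = {d: cells.get(d, "N/A") for d in DOMAINS}
    return matrix

def high_risk_cells(matrix):
    """List (system, domain) pairs rated High, in row then column order."""
    return [(s, d) for s, row in matrix.items()
            for d, level in row.items() if level == "High"]

matrix = build_matrix(ratings)

# Print the grid with truncated column headers so it fits on one screen.
print(f"{'system':<18}" + "".join(f"{d[:6]:<8}" for d in DOMAINS))
for system, row in matrix.items():
    print(f"{system:<18}" + "".join(f"{row[d]:<8}" for d in DOMAINS))

focus = high_risk_cells(matrix)
print(focus)
```

Defaulting unlisted cells to "N/A" is a convenience for the sketch. In a real program, an unrated cell is better treated as "not yet assessed" so that gaps stay visible.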

Next Issue

Issue #004: ISO/IEC 42001 Demystified — What It Takes to Build a Certified AI Management System. The world's first certifiable standard for AI governance. We go deep on the clauses, the controls, and what auditors actually look for — with practical insights you won't find in the standard itself.