The Standard That's Changing How Organizations Govern AI

If you've been following this newsletter, you know the foundations: AI governance matters (Issue #001), the EU AI Act sets the regulatory bar (Issue #002), and nine risk domains define the landscape (Issue #003). This week, we tackle the question that follows: how do you actually build a governance system that works in practice?

The answer, increasingly, is ISO/IEC 42001 — the world's first certifiable standard for AI management systems. Published in December 2023, it has rapidly become the governance backbone that organizations use to demonstrate responsible AI practices to regulators, clients, and stakeholders.

But here's what most overviews of ISO 42001 miss: it's not a compliance checklist. It's a management system. That distinction is everything. A checklist gives you a point-in-time snapshot. A management system gives you a living, breathing capability that improves over time. Organizations that treat ISO 42001 like the former will struggle in audits. Those that treat it like the latter will build AI governance that actually works.

The Paradigm Shift: Impact Beyond the Organization

If you've worked with other ISO management system standards, such as ISO 27001 for information security or ISO 9001 for quality, you'll recognize the structure of 42001. It follows the same Harmonized Structure: the same clause numbering, the same Plan-Do-Check-Act cycle, and the same management review and continual improvement logic.

But ISO 42001 introduces something fundamentally different in how it approaches risk and impact.

Historically, ISO management system standards focused primarily on impact to the organization and its customers. Risk assessment was centered on what could go wrong for the business: operational disruption, financial loss, customer dissatisfaction, regulatory penalties. Even ISO 27001 — which deals with information security — frames risk largely in terms of organizational assets. ISO 27701 started shifting this by introducing privacy impact and focusing on the individual.

ISO 42001 takes this shift much further. It introduces the concept of the AI System Impact Assessment — a requirement to evaluate the impact of your AI systems not just on your organization and customers, but on individuals, groups of individuals, and society at large. This is not a nice-to-have annex. It's a core requirement.

This means your risk assessment needs to answer questions that traditional management systems never asked: How does this AI system affect people who interact with it? How does it affect communities that don't interact with it directly but are affected by its decisions? Could it create systemic effects on society — labour markets, access to services, democratic processes?

Risk Alert

The biggest misconception about ISO 42001 is treating it as "ISO 27001 for AI." The security standard protects organizational assets. The AI standard protects people and society. If your risk assessment only considers what could go wrong for the business, you've missed the point of the standard — and an auditor will find that gap.

AI System Impact Assessment: The Requirement Most Organizations Underestimate

The AI System Impact Assessment is, in practice, the single most important requirement in ISO 42001 — and the one most organizations underestimate. ISO/IEC 42005:2025, published as a companion standard, provides detailed guidance on how to conduct these assessments.

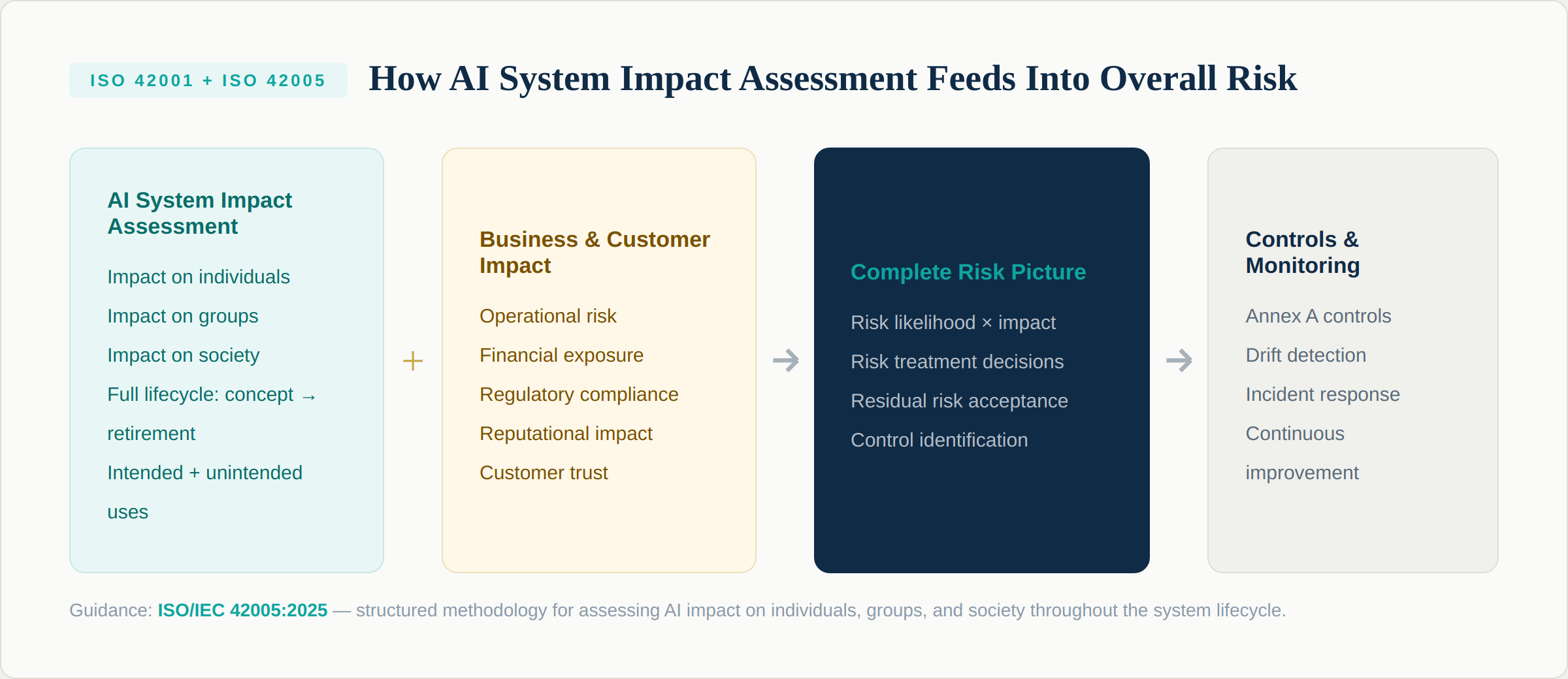

Here's how the pieces fit together. The AI System Impact Assessment evaluates impact across three dimensions: impact on individuals (people directly affected by AI decisions), impact on groups (communities, demographic groups, protected classes), and impact on society (systemic effects, market dynamics, democratic processes). This assessment covers the full AI system lifecycle — from initial conceptualization through development, deployment, operation, and eventual retirement or decommissioning.

Critically, this impact assessment doesn't replace your organizational risk assessment. It feeds into it. The AI system impact (individuals, groups, society) combines with business and customer impact (operational risk, financial exposure, regulatory compliance, reputational damage) to create the complete risk picture. From that complete picture, you identify controls, determine risk treatment, and accept residual risk.
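To make the combination concrete, here is a minimal Python sketch of how the two views could feed one risk picture. Everything in it is an illustrative assumption rather than something the standard prescribes: the 1-5 severity scale, the class and field names, and the rule that the worst dimension drives the overall rating.

```python
from dataclasses import dataclass


# Hypothetical 1-5 severity scale (1 = negligible, 5 = severe).
@dataclass
class AISystemImpact:
    individuals: int
    groups: int
    society: int


@dataclass
class BusinessImpact:
    operational: int
    financial: int
    regulatory: int
    reputational: int


def overall_risk(ai: AISystemImpact, biz: BusinessImpact) -> dict:
    """Combine both views into one picture.

    Assumption: the worst-rated dimension drives each rating, so a severe
    impact on people cannot be averaged away by low business impact.
    """
    ai_max = max(ai.individuals, ai.groups, ai.society)
    biz_max = max(biz.operational, biz.financial, biz.regulatory, biz.reputational)
    return {
        "ai_system_impact": ai_max,
        "business_impact": biz_max,
        "overall": max(ai_max, biz_max),
    }
```

Taking the maximum rather than an average is a deliberate choice in this sketch: it keeps a high impact on individuals visible even when the business exposure looks small.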

ISO 42005 provides structured guidance for this process, including a taxonomy of harms and benefits (Annex C), example assessment templates (Annex E), and explicit mapping to ISO 42001 requirements (Annex A). If you're implementing 42001, treating 42005 as a companion document is strongly recommended.

What To Do

Start your implementation with an AI System Impact Assessment for your highest-risk system. This single exercise typically surfaces most of the gaps a certification audit would find. Assess intended uses, but also reasonably foreseeable unintended uses, and evaluate impact across individuals, groups, and society, not just business operations.

The Use Case Trap: Why Output Risk ≠ Application Risk

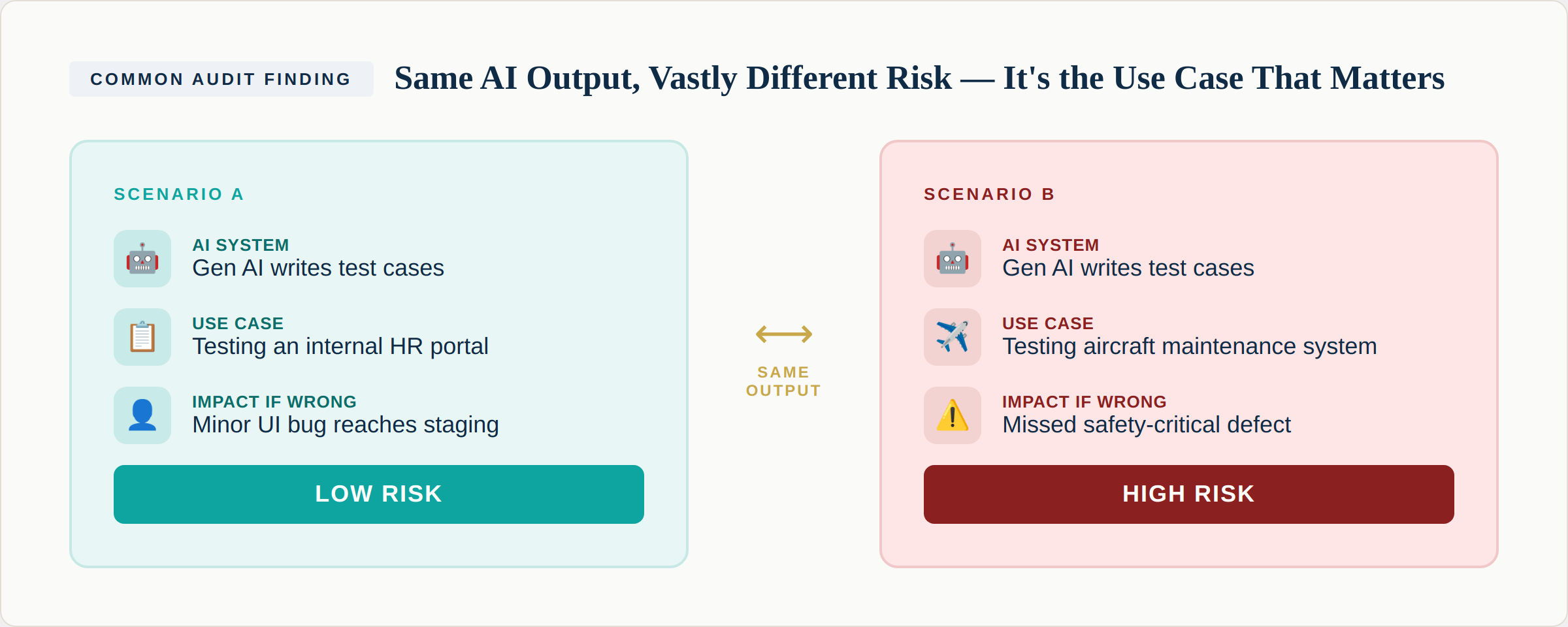

One of the most common failures in AI risk assessment — and one that auditors look for specifically — is confusing the risk of the AI output with the risk of how that output is used.

Consider a generative AI system that writes software test cases. The output generation itself is a straightforward, low-complexity activity. The AI produces test scripts. If you assess risk based solely on what the AI system does — "it generates test cases" — you'd classify it as low risk.

But risk doesn't live in the output. Risk lives in the use case. If those test cases are for an internal HR portal, a missed edge case means a minor bug reaches staging. Low impact. If those same test cases are for an aircraft maintenance system, a missed edge case could mean a safety-critical defect goes undetected. The impact is fundamentally different — not because the AI changed, but because the context changed.

This is why ISO 42001 requires you to assess AI systems in their context of use, not in isolation. It's also why the impact assessment must consider the full lifecycle, including how downstream consumers use AI outputs for decision-making.
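The test-case example can be expressed as a small Python sketch. The context names, the 1-5 ratings, and the function name are hypothetical, chosen only to mirror the two scenarios above; a real assessment would derive its ratings from the impact assessment, not from a lookup table.

```python
# Hypothetical 1-5 severity ratings for each context of use.
CONTEXT_SEVERITY = {
    "internal_hr_portal": 1,
    "aircraft_maintenance": 5,
}


def use_case_risk(output_risk: int, context: str) -> int:
    """Rate the deployed use case, not the AI output in isolation.

    Assumption: an unknown context fails safe to the highest rating,
    so that it gets reviewed instead of silently passing as low risk.
    """
    context_risk = CONTEXT_SEVERITY.get(context, 5)
    return max(output_risk, context_risk)


# The same low-risk output (rated 1) lands in two very different places.
print(use_case_risk(1, "internal_hr_portal"))    # 1
print(use_case_risk(1, "aircraft_maintenance"))  # 5
```

The AI system is identical in both calls; only the context argument changes, and the rating changes with it.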

Monitoring and Drift: The Living System Requirement

ISO 42001 is built on the Plan-Do-Check-Act cycle. The "Check" phase isn't a one-time event. It's a continuous requirement to monitor your AI systems, evaluate their performance, and act on what you find.

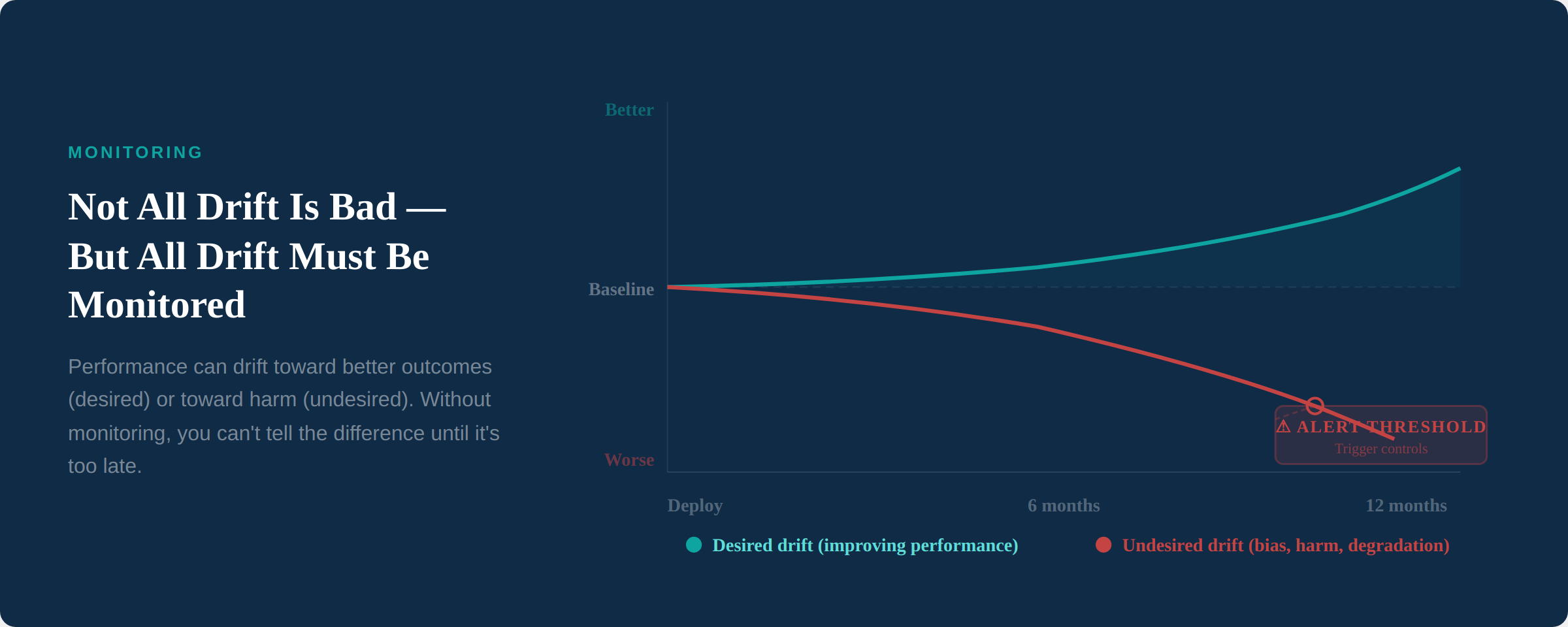

In AI systems, performance will drift. This is not a question of if — it's a question of direction. Drift can be positive: the model improves its accuracy over time as it processes more data, produces fewer errors, or better calibrates its confidence. This is desired drift. But drift can also be negative: the model begins producing biased outcomes, its accuracy degrades as real-world conditions diverge from training data, or it develops blind spots in edge cases. This is undesired drift.

The critical point is that you cannot distinguish desired from undesired drift without monitoring. Both look like "the model's behavior is changing." Only by tracking specific metrics — fairness indicators, error rates, confidence distributions, output variance — over time can you determine whether drift is improving or degrading the system.

Your management system needs to define what you monitor, how frequently, what thresholds trigger alerts, and what controls are activated when drift crosses into undesired territory. This is where logging requirements become essential. Without adequate logging, you have no evidence base for monitoring, no audit trail for investigations, and no data to support management reviews.
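As an illustration, a drift check against a defined threshold might look like the Python sketch below. The metric names, baselines, and tolerances are assumptions made for the example; ISO 42001 does not prescribe specific metrics or values, and your own monitoring plan would define them.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class MetricRule:
    name: str
    baseline: float         # value recorded when the system was approved
    tolerance: float        # allowed absolute change before an alert fires
    higher_is_better: bool  # True for accuracy, False for error rate


def classify_drift(rule: MetricRule, recent_values: list) -> str:
    """Return 'stable', 'desired', or 'undesired' for recent logged values."""
    delta = mean(recent_values) - rule.baseline
    if abs(delta) <= rule.tolerance:
        return "stable"
    improved = delta > 0 if rule.higher_is_better else delta < 0
    return "desired" if improved else "undesired"


accuracy = MetricRule("accuracy", baseline=0.90, tolerance=0.02, higher_is_better=True)
print(classify_drift(accuracy, [0.85, 0.86, 0.84]))  # undesired
```

Note that the function depends entirely on logged values: without the log there is nothing to pass in, which is the practical reason logging sits underneath monitoring.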

Defining AI Incidents: The Question Nobody Has Answered

Every management system needs an incident management process. ISO 42001 is no different. But AI introduces a question that most organizations haven't adequately addressed: what constitutes an AI incident?

In information security, incident definitions are relatively established: unauthorized access, data breach, system compromise. In AI, the boundaries are much less clear.

Consider this scenario: an AI system makes a decision — a loan denial, a resume screening, a content moderation action — and the affected person perceives the decision as biased. No bias has been confirmed through formal testing. But the individual believes, based on their experience, that the AI treated them unfairly. Is that an AI incident?

Most organizations would say no — it's a complaint, not an incident. But from a governance perspective, that distinction is dangerous. A pattern of perceived bias that goes uninvestigated is a governance failure, whether or not formal testing eventually confirms actual bias. At minimum, it's a signal that should feed into your monitoring and review processes.

Your AI management system needs clear incident definitions that go beyond traditional categories. These should include confirmed system failures (hallucinations, incorrect outputs), detected bias or drift beyond thresholds, complaints or reports of perceived unfairness, data quality incidents affecting AI inputs, unauthorized use of AI systems, and near-misses — situations where harm was narrowly avoided.
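One way to make these definitions operational is to encode them, so that every report is classified the same way. The Python sketch below is a hypothetical illustration: the category names paraphrase the list above, and the threshold of three complaints is an arbitrary assumption, not a figure from the standard.

```python
from collections import Counter
from enum import Enum


class AIIncidentType(Enum):
    SYSTEM_FAILURE = "confirmed system failure"
    BIAS_OR_DRIFT = "bias or drift beyond threshold"
    PERCEIVED_UNFAIRNESS = "complaint of perceived unfairness"
    DATA_QUALITY = "data quality incident affecting AI inputs"
    UNAUTHORIZED_USE = "unauthorized use of an AI system"
    NEAR_MISS = "near-miss"


def systems_needing_review(reports, threshold=3):
    """Flag systems whose perceived-unfairness complaints form a pattern.

    `reports` is a list of (system_name, AIIncidentType) tuples.
    The default threshold of 3 is an illustrative assumption.
    """
    complaints = Counter(
        system
        for system, kind in reports
        if kind is AIIncidentType.PERCEIVED_UNFAIRNESS
    )
    return sorted(s for s, n in complaints.items() if n >= threshold)
```

Treating perceived unfairness as a first-class category means a single complaint is still recorded, and a pattern of them triggers investigation even before any formal bias test has run.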

Risk Alert

The failure to proactively define AI incidents is one of the most common gaps auditors find. If your organization can't answer "what is an AI incident?" clearly and consistently, your incident management process is a paper exercise. Define it now — before your first real incident forces you to improvise.

Annex A and Annex B: The AI-Specific Controls

If you're familiar with ISO 27001, you know that Annex A provides the control set. ISO 42001 follows the same pattern, but with controls specific to AI governance.

Annex A provides the AI-specific controls, organized across these areas: policies related to AI, internal organization, resources for AI systems, assessing impacts of AI systems, AI system life cycle, data for AI systems, information for interested parties, use of AI systems, and third-party and customer relationships.

Annex B provides implementation guidance for these controls — how to interpret and operationalize them. Together, they define what your management system needs to address.

A few controls deserve particular attention:

AI System Impact Assessment controls require documented assessment of impacts on individuals, groups, and society — not just organizational impact. As discussed above, this is the single biggest shift from traditional management system thinking.

Data governance controls address the quality, provenance, and suitability of data used in AI systems — including training data, validation data, and operational data. Poor data governance undermines everything else in the system.

Lifecycle management controls cover the full AI system lifecycle from design through retirement. This includes requirements for documentation, testing, validation, deployment approval, and decommissioning — ensuring that governance applies at every stage, not just at deployment.

Third-party controls address the governance of AI systems and components obtained from external providers. Given how many organizations use third-party AI — embedded in SaaS platforms, purchased as APIs, or developed by vendors — these controls are essential for managing supply chain risk.

What Auditors Actually Look For

If you're pursuing certification, understanding what auditors actually evaluate — versus what organizations assume they evaluate — can save months of misaligned effort.

Auditors look for evidence that the system is alive. The most common audit failure isn't missing documentation. It's documentation that exists but shows no evidence of review, update, or use. A risk register that was created six months ago and never reviewed. Policies that were approved by management but never communicated to staff. Monitoring procedures that exist on paper but produce no logs. The management system must show signs of life: reviewed, updated, improved, actively used in decision-making.

Auditors look for top management commitment. Clause 5 requires leadership involvement: not just a signature on a policy, but demonstrable engagement. This means management reviews with real decisions, resource allocation for AI governance, and evidence that leadership receives and acts on AI risk information.

Auditors look for proportionality. A management system that applies the same level of control to every AI system — regardless of risk — suggests the organization doesn't understand its own risk landscape. Auditors want to see evidence that you've differentiated between high-risk and low-risk systems and applied governance proportionally.

Auditors look for corrective actions that close the loop. Finding nonconformities isn't the problem — every organization has them. The question is whether the organization identifies root causes, implements corrective actions, and verifies that those actions were effective. A nonconformity without a closed corrective action cycle is a red flag.

What To Do

Before starting your certification journey, do a gap assessment. But don't just check whether documentation exists — check whether it shows evidence of use. Are risk registers reviewed quarterly? Are monitoring logs being generated? Are management reviews producing decisions? If the answer is "we have the document but it hasn't been touched since we wrote it," that's your gap.
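A simple way to start is a staleness check over your governance artifacts. The Python sketch below assumes you can export a last-reviewed date for each document; the artifact names and the 90-day window (roughly quarterly) are hypothetical.

```python
from datetime import date, timedelta


def stale_artifacts(last_reviewed: dict, today: date, max_age_days: int = 90) -> list:
    """Return the names of artifacts not reviewed within the allowed window.

    `last_reviewed` maps an artifact name to the date of its last review.
    """
    cutoff = today - timedelta(days=max_age_days)
    return sorted(name for name, reviewed in last_reviewed.items() if reviewed < cutoff)


register = {
    "risk_register": date(2025, 1, 15),
    "ai_policy": date(2025, 6, 1),
    "monitoring_procedure": date(2025, 4, 1),
}
print(stale_artifacts(register, today=date(2025, 6, 30)))  # ['risk_register']
```

A review date alone does not prove a document is in use, so treat the output as a list of places to look first, not as the gap assessment itself.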

The Certification Journey: What to Expect

ISO 42001 certification follows the standard two-stage audit process used for all ISO management system certifications.

Stage 1 (Documentation Review): The certification body reviews your management system documentation: policies, procedures, risk assessments, impact assessments, scope definition, and statement of applicability. This stage identifies any major gaps before the on-site audit. It is often conducted at least partly remotely.

Stage 2 (Implementation Audit): Auditors evaluate whether the management system described in your documentation is actually implemented and effective. They interview staff, review records, examine evidence of monitoring and review, and assess whether the system is being followed in practice. This is where the "alive system vs. paper system" distinction becomes critical.

Timeline: From the decision to certify to achieving certification, most organizations need 6 to 12 months, depending on starting maturity. Organizations with existing ISO management systems (27001, 9001) can move faster because the infrastructure (management review, internal audit, document control) already exists.

Cost considerations: Certification costs vary by organization size, scope, and certification body. But the larger cost is typically internal — the effort to build the management system, conduct impact assessments, implement controls, train staff, and establish monitoring. Organizations that underestimate the internal investment often rush the implementation and end up with a paper system that struggles in Stage 2.

When to Pursue Certification vs. Alignment

Certification isn't right for every organization. Here's how to decide:

Pursue certification when: You operate in a regulated sector where AI governance assurance is expected. Your clients or partners require certification as a procurement condition. You want a competitive differentiator in markets where trust matters. You need an external validation mechanism to drive internal discipline.

Pursue alignment without certification when: You're an early-stage organization building governance capability for the first time. Your risk exposure doesn't justify the certification investment. You want to adopt the framework but lack the organizational maturity for a formal management system. You're using ISO 42001 as a reference alongside other frameworks (NIST AI RMF, EU AI Act compliance).

Alignment without certification can still be valuable — the standard provides an excellent structure for building governance even without formal audit. Many organizations use it as a maturity model, progressively implementing controls as their AI portfolio grows.

Key Takeaways

ISO 42001 is not a checklist — it's a management system that must demonstrate continuous operation and improvement. The AI System Impact Assessment is the most important and most underestimated requirement, demanding evaluation of impact on individuals, groups, and society. Risk must be assessed in the context of use, not the context of output — the same AI system can be low-risk or high-risk depending on how its outputs are consumed. Monitoring and drift detection require defined logging, thresholds, and response procedures. AI incident definitions must be proactively established, not improvised after the first failure. And above all, auditors are looking for a living system, not a documentation library.

Next Issue

Issue #005: Shadow AI — The Governance Risk Hiding in Every Department. Your employees are already using AI tools you don't know about. We explore the scale of the problem, why banning AI fails, and how to build a discovery and governance framework that enables rather than blocks.