The Tool Nobody Knew About

During a recent governance audit, we encountered a scenario that perfectly illustrates why Shadow AI is one of the most urgent governance risks organizations face today.

A project manager at a technology services company had two things: his team members' time card data and visibility into the work they completed. Using these inputs, he built an application that calculated and analyzed each team member's productivity. The application was AI-generated: a Python script produced by a large language model, deployed quietly, and used regularly.

When asked whether the tool influenced performance appraisals, the manager said no. But in practice, the productivity scores it generated were being referenced — consciously or unconsciously — in appraisal discussions. Those discussions affected promotions, project assignments, and in extreme cases, employment decisions.

Step back and consider what was actually happening. An AI system was being used to evaluate human performance. The AI-generated code had never been reviewed for accuracy or bias. No impact assessment had been conducted. HR didn't know it existed. The governance team didn't know it existed. The people being evaluated by it didn't know it existed. And yet it was influencing decisions that directly affected their careers and livelihoods.

This is Shadow AI. Not a hypothetical risk. Not a future concern. Something that is almost certainly happening in your organization right now.

What Is Shadow AI?

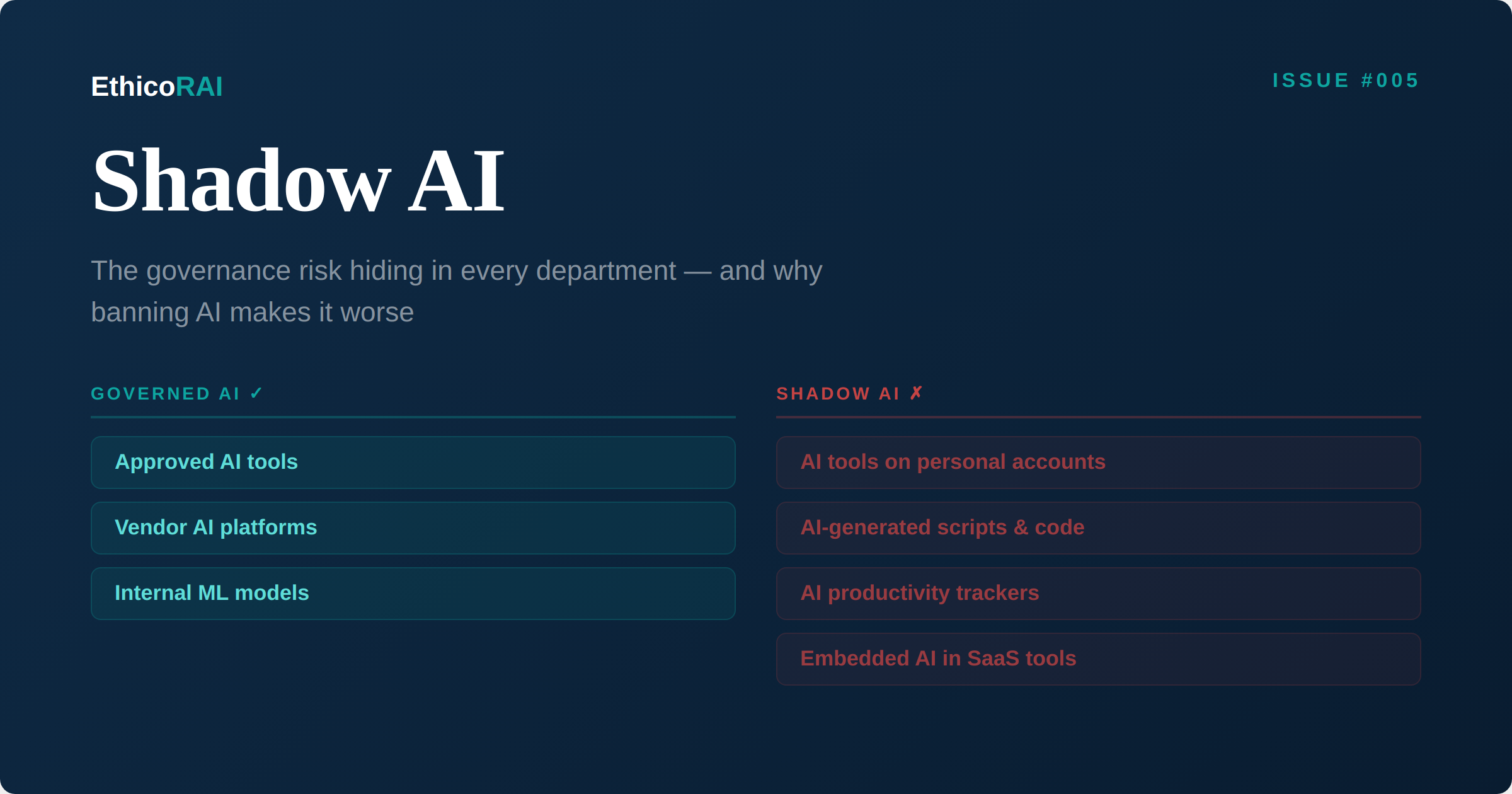

Shadow AI is any use of artificial intelligence within an organization that occurs outside the visibility and governance of the teams responsible for AI oversight. It's the AI equivalent of Shadow IT — but with significantly higher risk, because AI systems don't just process data. They generate outputs that inform decisions, and those decisions affect people.

Shadow AI includes employees using personal AI accounts for work tasks, teams building AI-powered tools without governance approval, AI features embedded in SaaS platforms that nobody evaluated, code generated by AI assistants that enters production without review, and AI-powered analytics that influence business decisions without anyone recognizing them as AI systems.

The scale of the problem is significant. According to the IBM Cost of a Data Breach Report 2025, 63% of the organizations studied lack AI governance policies to manage AI use and prevent the proliferation of Shadow AI. That figure predates the current wave of AI tools that make it trivially easy for any employee to build AI-powered applications.

Shadow AI is not primarily a technology problem. It's a governance visibility problem. The most dangerous Shadow AI isn't the employee using a personal AI account to draft emails — it's the manager who built an AI-powered decision tool that nobody knows about, affecting people who have no idea they're being evaluated by an algorithm.

Why People Create Shadow AI

Before we can address Shadow AI, we need to understand why it exists. And the answer is rarely malicious. In almost every case, Shadow AI emerges from one of four motivations:

Productivity pressure. Employees are under pressure to deliver more, faster. AI tools offer a genuine productivity advantage. If the organization hasn't provided approved alternatives, people will find their own.

Ease of access. Building an AI-powered tool today requires no specialized training. A project manager with a personal AI subscription can generate working Python code in minutes. The barrier between "idea" and "deployed application" has effectively disappeared.

Governance friction. In organizations where the process for approving new tools is slow, bureaucratic, or unclear, employees route around it. If getting an AI tool approved takes three months but building one takes an afternoon, the outcome is predictable.

Lack of awareness. Many people don't recognize what they're doing as "using AI" in a way that requires governance. The project manager in our opening example likely didn't think of his productivity tool as an "AI system." He thought of it as a spreadsheet replacement.

The Shadow AI Risk Spectrum

Not all Shadow AI carries the same risk. Understanding where different types fall on the spectrum is essential for building a proportionate response.

Level 1 — Convenience. Using AI to brainstorm ideas, draft emails, or summarize meeting notes. No sensitive data is involved. No decisions are being made. The risk is minimal, and the productivity benefit is real. Governance should enable this, not block it.

Level 2 — Productivity. AI-generated code, reports, or analyses that enter internal workflows. The risk increases because these outputs may be treated as accurate without verification, and errors can propagate through processes. The AI-generated test cases we discussed in Issue #004 fall here — low risk in isolation, but the risk depends entirely on how the output is used.

Level 3 — Data Exposure. Pasting customer data, proprietary information, source code, or confidential documents into AI tools. This is where privacy and security risks become significant. Data entered into external AI services may be processed, stored, or used for model training in ways that violate privacy regulations, contractual obligations, or intellectual property protections.

Level 4 — Decision-Making. AI systems that evaluate people, allocate resources, approve or deny services, or make decisions with real-world consequences. Our opening scenario sits squarely here. This is the highest-risk category because it directly affects individuals — and it intersects with every regulatory framework from the EU AI Act to anti-discrimination law.

When you discover Shadow AI in your organization — and you will — resist the urge to treat all instances the same. A blanket crackdown on Level 1 usage wastes governance resources and erodes trust. Focus your attention on Levels 3 and 4, where the actual harm potential lives.
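The four levels can be turned into a simple triage rule. The sketch below is illustrative only: the `AIUseCase` fields and the `classify` and `needs_priority_review` helpers are hypothetical names, and it assumes the highest applicable level wins when a use case matches more than one.

```python
from dataclasses import dataclass
from enum import IntEnum


class RiskLevel(IntEnum):
    CONVENIENCE = 1
    PRODUCTIVITY = 2
    DATA_EXPOSURE = 3
    DECISION_MAKING = 4


@dataclass
class AIUseCase:
    """Hypothetical description of one AI use; field names are illustrative."""
    name: str
    output_enters_workflow: bool = False           # code, reports, or analyses reused internally
    handles_sensitive_data: bool = False           # customer data, source code, confidential documents
    informs_consequential_decisions: bool = False  # evaluating people, allocating resources, approvals


def classify(use_case: AIUseCase) -> RiskLevel:
    """Return the highest level whose trigger applies (assumed tie-break rule)."""
    if use_case.informs_consequential_decisions:
        return RiskLevel.DECISION_MAKING
    if use_case.handles_sensitive_data:
        return RiskLevel.DATA_EXPOSURE
    if use_case.output_enters_workflow:
        return RiskLevel.PRODUCTIVITY
    return RiskLevel.CONVENIENCE


def needs_priority_review(use_case: AIUseCase) -> bool:
    """Levels 3 and 4 are where governance attention should focus."""
    return classify(use_case) >= RiskLevel.DATA_EXPOSURE
```

Under these assumptions, the productivity tracker from the opening scenario (time card data in, appraisal influence out) classifies as `DECISION_MAKING`, while an email-drafting habit with no flags set stays at `CONVENIENCE`.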

Why Banning AI Fails

The instinctive response to Shadow AI is to ban unauthorized AI use. Issue a policy. Block AI tools on the corporate network. Prohibit AI use without explicit approval. This approach feels decisive. It is also counterproductive.

Here's why bans fail:

People work around them. If you block AI tools on the corporate network, employees use personal devices. If you ban AI code generation, they generate code at home and paste it in. The tools are too accessible and too useful for a ban to hold. You don't eliminate Shadow AI; you push it further into the shadows, where it is harder to detect and govern.

Bans penalize the wrong people. The employees most likely to comply with an AI ban are the ones who would use AI responsibly anyway. The ones building undisclosed decision-making tools will continue regardless, because they already weren't following governance processes.

You lose the productivity benefit. AI tools genuinely improve productivity. Organizations that ban AI while competitors embrace it will fall behind. The question is not whether your employees will use AI. It's whether they'll use it visibly or invisibly.

Bans create a false sense of security. Leadership believes the problem is solved because a policy exists. Meanwhile, Shadow AI proliferates beneath the surface, now even less likely to be reported or discovered because employees fear disciplinary action.

The Enable-and-Govern Approach

The alternative to banning is enabling — with governance. This means making it easier to use AI responsibly than to use it irresponsibly. The approach has four components:

1. Provide approved alternatives. If employees need AI tools, give them AI tools — with appropriate guardrails. Enterprise versions of major AI platforms (such as ChatGPT Enterprise, Microsoft Copilot, Google Gemini for Workspace, or Claude for Enterprise) offer data protection controls, usage logging, and administrative oversight that personal accounts don't. The cost of enterprise licenses is a fraction of the cost of a single Shadow AI incident.

2. Create a fast-track approval process. When someone identifies a new AI use case, the path to approval should be measured in days, not months. Build a lightweight self-assessment form: What does the tool do? What data does it access? Who is affected by its outputs? How will accuracy be verified? For low-risk use cases (Levels 1-2), approval should be near-automatic. Reserve deep assessment for Levels 3-4.

3. Build an AI use registry. Every AI tool, whether approved or discovered, goes into a central registry. This is the AI inventory we discussed in Issue #001. It should capture the tool name, its purpose, what data it accesses, who uses it, and what decisions it informs. The registry isn't a permission system; it's a visibility system. You can't govern what you can't see.

4. Establish clear, proportionate policies. Your AI acceptable use policy should do three things. First, explicitly permit low-risk AI use (Levels 1-2) to remove the incentive to hide. Second, define clear boundaries around data exposure (Level 3) — what data can and cannot be entered into AI tools. Third, require governance review for any AI use that informs decisions about people (Level 4).
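As a rough illustration of components 2 and 3, the sketch below pairs a registry record with a routing rule. The class and function names (`RegistryEntry`, `Registry`, `approval_route`) and the route labels are assumptions made for this example, not a prescribed schema. The record holds the fields listed for the registry, and routing follows the split between Levels 1-2 and Levels 3-4.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class RegistryEntry:
    """One AI tool in the registry; fields follow the list in component 3."""
    tool_name: str
    purpose: str
    data_accessed: list[str]
    users: list[str]
    decisions_informed: list[str]
    risk_level: int                 # 1-4 on the risk spectrum
    approved: bool = False          # discovered tools start unapproved
    registered_on: date = field(default_factory=date.today)


def approval_route(entry: RegistryEntry) -> str:
    """Levels 1-2 go to near-automatic approval; Levels 3-4 get deep assessment."""
    if entry.risk_level not in (1, 2, 3, 4):
        raise ValueError(f"risk_level must be 1-4, got {entry.risk_level}")
    return "fast-track" if entry.risk_level <= 2 else "deep-assessment"


class Registry:
    """A visibility system: every tool is recorded, whether or not it is approved."""

    def __init__(self) -> None:
        self._entries: dict[str, RegistryEntry] = {}

    def register(self, entry: RegistryEntry) -> str:
        # Recording never fails on approval status; it only reports the review route.
        self._entries[entry.tool_name] = entry
        return approval_route(entry)

    def unapproved(self) -> list[RegistryEntry]:
        return [e for e in self._entries.values() if not e.approved]
```

Note the design choice: `register` accepts unapproved tools rather than rejecting them, which keeps the registry an inventory rather than a gate.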

Start with discovery. Send a simple, non-punitive survey to department heads: "What AI tools are people in your team using?" Frame it as inventory-building, not investigation. Pair it with a message from leadership that says: "We want to enable AI use, not ban it — but we need to know what's happening so we can support it responsibly." You will be surprised by what surfaces.

Discovery: Finding the Shadow AI You Already Have

Even with an enabling approach, Shadow AI will exist. Your organization needs a discovery capability — an ongoing process for identifying AI use that hasn't been registered. This includes:

Network and procurement analysis. Review network traffic for connections to AI service APIs. Review procurement and expense reports for AI tool subscriptions. Review browser extension installations for AI-powered plugins. These technical signals can identify Shadow AI at Levels 1-3.

Department conversations. The most effective discovery method is the simplest: talk to people. Regular conversations with department heads, team leads, and power users about how they're using technology will surface AI usage that no technical scan would find, including custom-built tools like the one in our opening example.

New hire and role-change checkpoints. When someone joins a team or changes roles, ask what AI tools they used in their previous position and what they'd like to use in the new one. This captures AI usage patterns that would otherwise go undetected.

Incident-driven discovery. When something goes wrong — a data quality issue, an unexplained decision, a customer complaint about a biased outcome — include "was AI involved?" in the investigation. You may discover that an AI system was part of the workflow, unknown to the people investigating the incident.
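The procurement and expense review described above lends itself to a simple first pass. The following is a minimal sketch, assuming expenses are exported as CSV with `vendor` and `description` columns; the column names, the `flag_ai_expenses` helper, and the keyword watchlist are all hypothetical and would need adapting to your own finance system.

```python
import csv
import io

# Illustrative watchlist only; a real one would be maintained by the governance team.
AI_VENDOR_KEYWORDS = ("openai", "chatgpt", "anthropic", "claude", "copilot", "gemini")


def flag_ai_expenses(csv_text: str, keywords=AI_VENDOR_KEYWORDS) -> list[dict]:
    """Return expense rows whose vendor or description mentions a watchlist keyword."""
    flagged = []
    for row in csv.DictReader(io.StringIO(csv_text)):
        # Case-insensitive substring match across both free-text columns.
        haystack = f"{row.get('vendor', '')} {row.get('description', '')}".lower()
        matches = [kw for kw in keywords if kw in haystack]
        if matches:
            flagged.append({**row, "matched": matches})
    return flagged
```

Substring matching will produce false positives and miss tools that are not on the watchlist, so flagged rows are best treated as prompts for a conversation rather than as findings.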

The Regulatory Dimension

Shadow AI doesn't just create operational risk. It creates regulatory exposure that organizations often underestimate.

EU AI Act. If an undiscovered AI system falls into the "high-risk" category under the EU AI Act — and any system evaluating people for employment, credit, or services likely does — the organization is non-compliant. You can't perform a conformity assessment on a system you don't know exists.

GDPR and privacy regulations. Data entered into unauthorized AI tools may constitute a data transfer to a third-party processor without a legal basis. If that tool is hosted outside the EU, it may also constitute a cross-border transfer without adequate safeguards.

Anti-discrimination law. If a Shadow AI system produces biased outcomes, as our productivity tracker example could easily do, the organization can be held liable regardless of whether it knew the system existed. Ignorance is not a defense.

ISO 42001 implications. If you're pursuing or maintaining ISO 42001 certification, Shadow AI is a direct threat to your management system. Your AI inventory is incomplete. Your risk assessments don't cover systems you can't see. Your controls aren't applied to tools operating outside your governance framework. An auditor will find this gap.

The project manager who built the AI productivity tracker didn't just create a governance gap. He created potential liability under employment law, data protection regulation, and anti-discrimination legislation — for an organization that didn't know the system existed. That's the true cost of Shadow AI: accountability for systems you didn't authorize and can't explain.

Building a Shadow AI Response Framework

Here's a practical framework for addressing Shadow AI in your organization, combining the enable-and-govern approach with ongoing discovery:

Phase 1 — Discover (Weeks 1-4). Conduct an organization-wide AI discovery exercise. Combine technical scanning with department conversations. Build an initial AI inventory. Identify any Level 4 (decision-making) Shadow AI that needs immediate attention.

Phase 2 — Enable (Weeks 4-8). Deploy approved AI tools with appropriate enterprise controls. Create a lightweight self-assessment process for new AI use cases. Publish a clear, enabling AI acceptable use policy. Communicate the message: "We want you to use AI. Here's how to do it responsibly."

Phase 3 — Govern (Weeks 8-12). Integrate AI discovery into ongoing governance processes. Establish regular department check-ins about AI usage. Build AI literacy training that helps employees recognize when their use of AI requires governance review — particularly when it moves from convenience or productivity into data exposure or decision-making territory.

Phase 4 — Monitor (Ongoing). Continuously scan for new Shadow AI. Review the AI registry quarterly. Update policies as new AI capabilities emerge. Track metrics: number of AI tools discovered, time from discovery to governance integration, percentage of AI use that's visible vs. shadow.
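The Phase 4 metrics can be computed directly from registry data. The sketch below assumes each record carries a discovery date and, once the tool is brought under governance, a governance date. The `TrackedTool` fields and the `monitoring_metrics` function are hypothetical names, and "visible" is taken here to mean "currently under governance", which is one possible reading of the metric.

```python
from dataclasses import dataclass
from datetime import date
from statistics import mean
from typing import Optional


@dataclass
class TrackedTool:
    name: str
    found_by_discovery: bool            # True if it surfaced as Shadow AI
    discovered_on: date
    governed_on: Optional[date] = None  # None while still outside governance


def monitoring_metrics(tools: list[TrackedTool]) -> dict:
    """Compute the three tracking metrics named in Phase 4."""
    shadow = [t for t in tools if t.found_by_discovery]
    # Days from discovery to governance, for shadow tools that have been integrated.
    days = [(t.governed_on - t.discovered_on).days
            for t in shadow if t.governed_on is not None]
    visible = [t for t in tools if t.governed_on is not None]
    return {
        "shadow_tools_discovered": len(shadow),
        "avg_days_to_governance": mean(days) if days else None,
        "visible_share_pct": round(100 * len(visible) / len(tools), 1) if tools else None,
    }
```

Reviewing these numbers at the quarterly registry review gives a trend line: a falling average time to governance and a rising visible share suggest the enable-and-govern approach is working.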

The Culture Shift

Ultimately, solving Shadow AI requires a culture shift. Employees need to feel safe reporting their AI use without fear of punishment. They need to understand why governance exists — not as a bureaucratic obstacle, but as a system that protects them, their colleagues, and the people affected by their work.

The project manager who built the productivity tracker wasn't trying to harm anyone. He was trying to manage his team better. The problem wasn't his intent. The problem was that no one in the organization had created an environment where he would think to ask: "Should I check with someone before building this?"

That's the question your governance program needs to make instinctive. Not through fear. Through understanding.

Next Issue

Issue #006: 2025 in Review — The Year AI Governance Became Non-Negotiable. A look back at the regulatory milestones, industry incidents, and framework developments that shaped the AI governance landscape in 2025 — and what they signal for the year ahead.