Looking Back to Look Forward

If you had to summarize 2025 in a single sentence for AI governance professionals, it would be this: it was the year we stopped asking whether organizations need AI governance and started asking how to make it work.

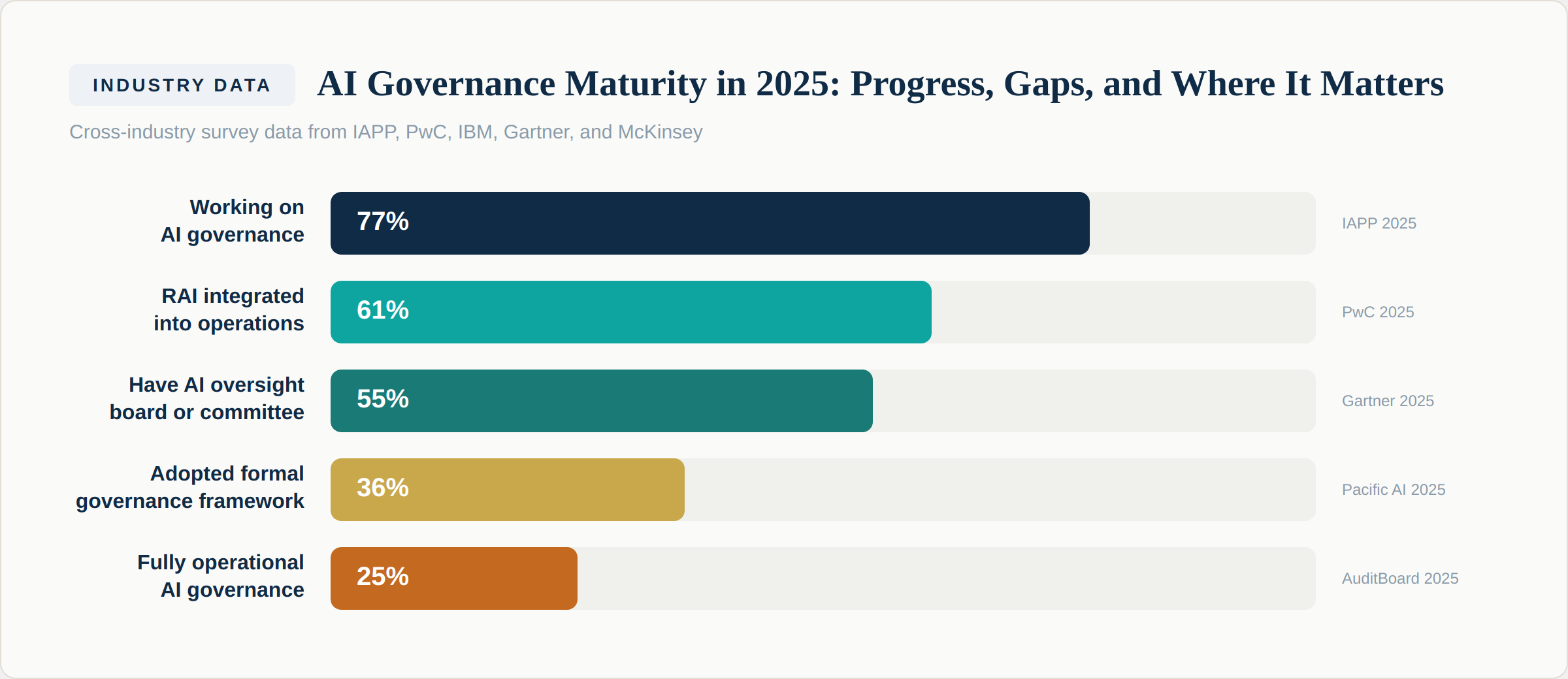

The data tells a clear story. According to the IAPP AI Governance Profession Report 2025, 77% of organizations are now actively working on AI governance — with the figure jumping to nearly 90% among organizations already using AI. That is a dramatic shift from even two years ago, when governance was an afterthought for most enterprises. More telling: 30% of organizations not yet using AI reported working on governance first — a "governance before deployment" approach that signals a fundamental change in how leaders think about AI readiness.

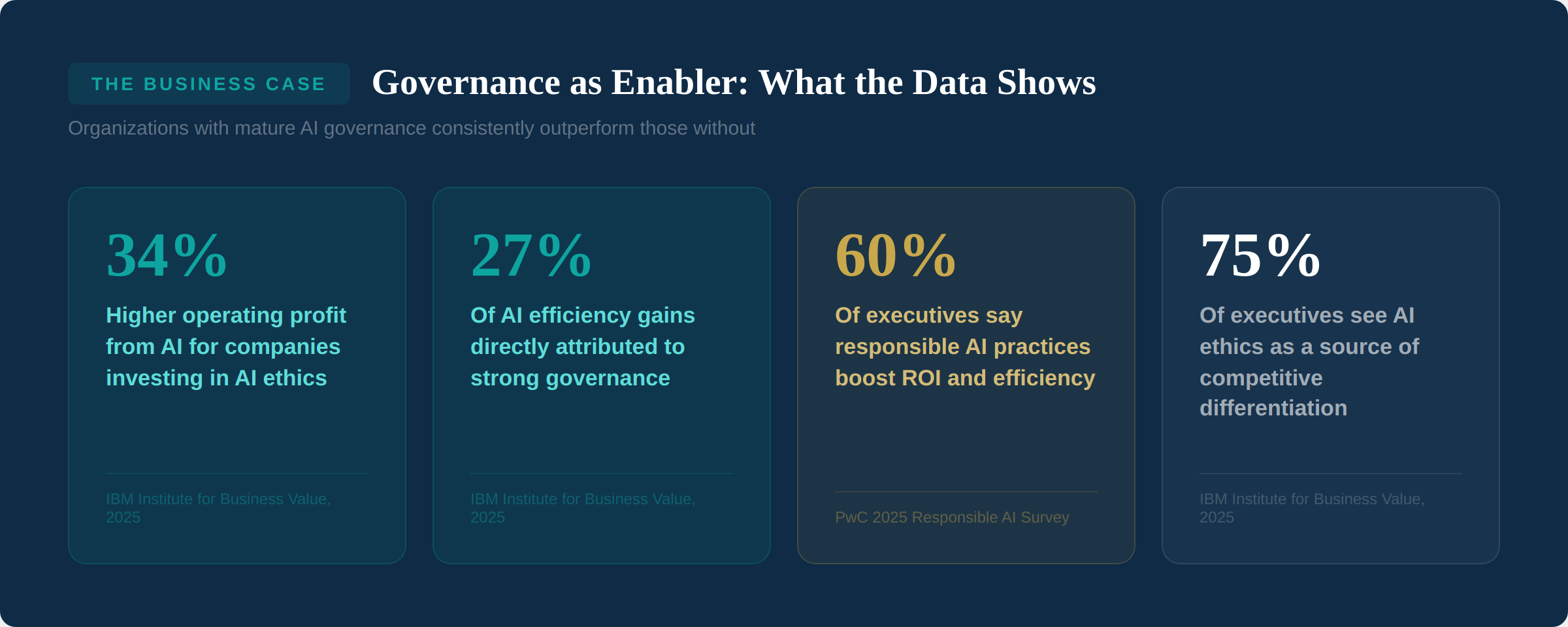

But here's what makes 2025 truly significant: for the first time, we have hard data showing that governance isn't slowing AI down. It's accelerating it. The IBM Institute for Business Value, surveying 1,000 senior leaders across 17 industries, found that 27% of AI efficiency gains stem directly from strong governance, and companies investing more heavily in AI ethics report 34% higher operating profit from AI. Meanwhile, PwC's 2025 Responsible AI Survey found that 60% of executives say responsible AI practices boost ROI and efficiency, and 55% report improved customer experience and innovation.

This issue looks at the key developments that defined 2025 — the reports, the regulations, the incidents, and the shifts in thinking — and what they mean for the year ahead.

The Governance Maturity Picture

One of the most useful things we can do with a year-in-review is synthesize across the major reports to build a single picture of where the industry stands. Here is what we see when we combine data from IAPP, PwC, IBM, Gartner, McKinsey, and others:

The pattern is clear: awareness is high, activity is widespread, but operational maturity remains low. The IAPP report found that only 1.5% of organizations — 10 out of 671 surveyed — said they would not need additional AI governance staff in the next 12 months. Everyone else is still building. A Gartner 2025 poll of over 1,800 executive leaders found that 55% now have an AI board or dedicated oversight committee. McKinsey's State of AI survey found that only 28% of organizations said the CEO takes direct responsibility for AI governance oversight, though high-performing organizations — those seeing real EBIT impact from AI — are three times more likely to have strong senior leadership ownership and engagement.

The gap between "working on it" (77%) and "fully operational" (25%) is where the opportunity — and the risk — lives. Most organizations have policies. Far fewer have turned those policies into consistent, repeatable processes with clear ownership, metrics, and continuous oversight.

What To Do

If you're in that 77% "working on it" group, the maturity chart above tells you exactly where to focus. Don't aim for perfection. Aim for the next level: from policy to framework, from framework to operations, from operations to continuous improvement. Progress, not perfection, is what separates organizations that govern AI well from those that just talk about it.
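The "next level" idea can be made concrete with a small sketch. The four rungs below are taken from the progression named above (policy, framework, operations, continuous improvement); the `Maturity` enum and the `next_level` helper are hypothetical names invented for illustration, not part of any of the cited reports.

```python
from enum import IntEnum


class Maturity(IntEnum):
    """Hypothetical maturity ladder, using the four stages named in the text."""
    POLICY = 1
    FRAMEWORK = 2
    OPERATIONS = 3
    CONTINUOUS_IMPROVEMENT = 4


def next_level(current: Maturity) -> Maturity:
    """Return the next rung to aim for, or the same rung if already at the top."""
    if current is Maturity.CONTINUOUS_IMPROVEMENT:
        return current
    return Maturity(current + 1)


# Example: an organization that has policies but no framework yet.
target = next_level(Maturity.POLICY)
print(f"Current: {Maturity.POLICY.name}, next target: {target.name}")
```

The point of the sketch is only that the target is always one step ahead of the current state, never the top of the ladder.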

The Business Case: Governance as Enabler

The single most important narrative shift of 2025 was the death of the "governance versus speed" argument. For years, governance was framed as a brake: something that slows innovation and adds bureaucratic overhead. The survey data published in 2025 points the other way.

The IBM Institute for Business Value report is particularly striking. Based on a survey of 1,000 senior business and technology leaders, it found that one in four failed AI initiatives can be traced back to weak governance. More than half of executives said their companies still have no clear approach to managing AI risk, ethics, or accountability. Yet among those that do, the results are measurably better: higher profits, fewer failures, faster scaling.

As the IAPP report put it: AI governance can provide businesses with the certainty they need to continue to innovate with AI at scale, building faster, better, more reliable products that are trusted by consumers and enterprise partners alike. This framing — governance as a trust-building mechanism that enables scaling — was echoed by every major report published in 2025.

PwC's 2025 Responsible AI Survey showed this in practice. Among organizations at the "strategic" maturity stage, where responsible AI is actively integrated into operations, 78% said they were very effective at defining and communicating AI priorities, compared with just 35% at the "training" stage. Organizations with mature programs value what responsible AI practices give them: discipline, measurability, and sustained business performance.

One example from PwC's research shows how this works in practice. A major airline brought its risk management team together with senior business and technology leaders to shape its AI roadmap. Together, they chose to focus generative AI capabilities only on internal productivity tools, explicitly excluding any use cases that could affect passenger or employee safety. This early filtering, with governance informing strategy, helped them accelerate development by designing proportionate controls that matched their risk posture. They moved faster precisely because governance helped them make clear decisions about where to invest and where to exercise caution.

Key Insight

Governance does not replace strategy. The two enable each other. Strategy provides direction for AI investment. Governance provides the trust, discipline, and risk clarity that let organizations execute that strategy at scale. As Issue #001 of this newsletter argued, AI strategy without governance is unsustainable. But the reverse is equally true: governance without strategic alignment is compliance theater.

The Regulatory Landscape: 2025 Milestones

2025 was the year AI regulation moved from theory to enforcement. The most significant milestones include:

EU AI Act implementation begins. The Act entered into force in August 2024, but 2025 saw the first phases of implementation take effect. The prohibition of unacceptable-risk AI systems began in February 2025. Requirements for general-purpose AI models took effect in August 2025. High-risk system requirements are scheduled for August 2026 — meaning organizations deploying AI in areas like employment, credit scoring, or essential services need to be preparing now.
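For teams tracking these deadlines, the timeline above can be kept as a small data structure. This is a minimal sketch: the `MILESTONES` list and the `upcoming` helper are hypothetical names, and the day-of-month values are not stated in this article. They are filled in from the Act's published application dates and should be verified against the official text before being relied on.

```python
from datetime import date

# Milestones as described in the text. Day-of-month values are an assumption
# added for illustration; the article itself gives only month and year.
MILESTONES = [
    (date(2024, 8, 1), "Act enters into force"),
    (date(2025, 2, 2), "Prohibition of unacceptable-risk AI systems applies"),
    (date(2025, 8, 2), "General-purpose AI model requirements apply"),
    (date(2026, 8, 2), "High-risk system requirements scheduled to apply"),
]


def upcoming(today: date) -> list[tuple[int, str]]:
    """Return (days_remaining, label) for every milestone still ahead of `today`."""
    return [((when - today).days, label) for when, label in MILESTONES if when > today]


# Example: what is still ahead as of the end of 2025?
for days_left, label in upcoming(date(2025, 12, 31)):
    print(f"{days_left} days: {label}")
```

Keeping the dates in one place makes it easy to update the plan if the schedule for any phase changes.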

ISO/IEC 42001 gains global traction. As we covered in depth in Issue #004, ISO 42001 emerged as the leading international standard for AI management systems in 2025. Organizations pursuing certification found that the standard's emphasis on AI system impact assessment — evaluating effects on individuals, groups, and society — represented a fundamentally different approach from traditional management system standards. ISO/IEC 42005, published in 2025, provided additional guidance on conducting these assessments.

Singapore releases agentic AI governance framework. In January 2025, Singapore's IMDA released the first government-backed framework specifically addressing agentic AI systems. Its concepts of "action-space" (what tools and systems an agent can access) and "autonomy" (what instructions govern the agent and how humans oversee it) became widely referenced across the industry.
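One way to picture the two concepts is as a simple policy check that runs before an agent uses a tool. The sketch below is illustrative only and is not taken from the IMDA framework: `AgentPolicy`, `decide`, and the tool names are all hypothetical. The "action-space" is modeled as the set of tools the agent may touch, and "autonomy" as the subset that still requires a human sign-off.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentPolicy:
    """Hypothetical policy object for a single agent."""
    allowed_tools: frozenset[str]         # action-space: what the agent can access
    needs_human_approval: frozenset[str]  # autonomy: where a human must sign off


def decide(policy: AgentPolicy, tool: str) -> str:
    """Return 'deny', 'escalate', or 'allow' for a requested tool call."""
    if tool not in policy.allowed_tools:
        return "deny"      # outside the agent's action-space
    if tool in policy.needs_human_approval:
        return "escalate"  # inside the action-space, but human oversight required
    return "allow"


# Example policy with made-up tool names.
policy = AgentPolicy(
    allowed_tools=frozenset({"search_docs", "draft_email", "issue_refund"}),
    needs_human_approval=frozenset({"issue_refund"}),
)

for tool in ("search_docs", "issue_refund", "delete_records"):
    print(tool, "->", decide(policy, tool))
```

Separating the two sets keeps the questions distinct: first whether the agent may act at all, then whether it may act alone.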

US state-level AI legislation accelerates. While federal AI legislation remained limited, state-level activity surged. Multiple states introduced or advanced bills addressing AI in employment decisions, consumer protection, and automated decision-making. The patchwork nature of this regulation created complexity for organizations operating across jurisdictions.

India's DPDP Act implementation progresses. India's Digital Personal Data Protection Act, passed in 2023, saw continued implementation progress in 2025, with implications for AI systems processing personal data at scale — particularly relevant given India's position as a major technology services provider.

The Profession Matures: Who Leads AI Governance?

The IAPP report provided the most detailed picture yet of how organizations are structuring AI governance teams and leadership. Several findings stand out:

Privacy professionals lead the way. When the primary responsibility for AI governance sits with an organization's privacy function, respondents were significantly more likely to be confident in their ability to comply with the EU AI Act — 67% expressed confidence, compared to lower rates when governance was led by other functions. This makes sense: privacy teams bring experience in impact assessments, data protection, cross-functional collaboration, and regulatory compliance that transfers directly to AI governance.

Cross-functional structures outperform siloed ones. Organizations with AI governance committees reported better outcomes — 71% of those with ethics committees reported positive results. The IAPP case studies from organizations like Mastercard, IBM, Randstad, and BCG revealed no single model but shared patterns, including what some organizations describe as cross-functional "villages" of experts from legal, technical, ethical, and business backgrounds.

CEO involvement correlates with maturity. The IBM report found that 63% of organizations report CEO involvement in AI governance, rising to 81% among those with mature oversight. About one-third of enterprises have a CEO directly accountable for governance outcomes. When CEOs champion responsible AI, governance becomes embedded in culture and strategy — not just policy. McKinsey's high-performing organizations were three times more likely to have leaders who actively demonstrate ownership of AI initiatives.

Dedicated staffing remains a challenge. Almost every organization surveyed by IAPP reported needing more AI governance professionals. The emerging profile combines expertise in privacy, risk management, and technology — a skillset that barely existed five years ago but is now in acute demand. The IAPP's AI Governance Professional (AIGP) certification, launched to address this gap, saw rapid adoption throughout 2025.

The Incidents That Shaped the Conversation

Nothing accelerates governance adoption like real-world failures. Several incidents in 2025 reinforced why governance matters — not as a theoretical exercise but as a practical necessity:

The IBM breach data. According to IBM's 2025 Cost of a Data Breach Report, 13% of organizations reported breaches involving AI models or applications. Among those, 97% said they had no proper AI access controls in place. Meanwhile, 63% of organizations experiencing a breach did not have a formal AI governance policy. The correlation between governance absence and breach occurrence was stark.

AI hallucination in legal proceedings. The pattern of AI-generated legal citations being submitted in court — first seen in 2023 — continued through 2025, reinforcing the critical importance of human review and the dangers of treating AI output as authoritative without verification.

Shadow AI proliferation. As we covered in Issue #005, the rise of easily accessible AI tools led to widespread unauthorized use across organizations. The IBM finding that 63% of breached organizations lacked a formal AI governance policy, the kind of policy that manages AI use and limits Shadow AI, put a number on a risk that many governance teams had been struggling to articulate to leadership.

The Scaling Challenge: McKinsey's Findings

McKinsey's State of AI 2025 report, surveying nearly 2,000 participants, provided a sobering counterpoint to the adoption enthusiasm. While 88% of organizations report using AI in at least one business function, only about one-third have reached the scaling stage. And of those surveyed, only about 5% reported that more than 5% of their organization's EBIT is attributable to AI use.

What separates the high performers? According to McKinsey, they share several characteristics: they set growth and innovation as objectives (not just efficiency), they redesign workflows rather than simply adding AI to existing processes, and their senior leadership demonstrates ownership and commitment. The report found that workflow redesign has the single biggest effect on an organization's ability to see EBIT impact from AI.

For governance professionals, the implication is clear: governance should be embedded in the workflow redesign process, not bolted on afterward. When organizations redesign processes to incorporate AI, that is the moment to assess risk, define controls, and establish monitoring — not after the system is in production.

What To Do

If your organization is planning to scale AI in 2026, involve governance in the workflow redesign phase — not after deployment. McKinsey's data shows this is where the biggest impact on value creation happens. Governance embedded at design time is faster, cheaper, and more effective than governance retrofitted later.

Looking Ahead: What 2025 Signals for 2026

Based on everything published this year, several trends are clear for 2026:

Agentic AI governance becomes urgent. PwC's AI Agent Survey found that 79% of companies are already adopting AI agents, and 88% plan to increase AI-related budgets specifically because of agentic AI's potential. But trust lags: only 20% of executives trust agents to handle financial transactions, and 28% ranked lack of trust as a top challenge. Organizations that establish governance frameworks for agentic systems now will have a significant advantage as deployment accelerates.

EU AI Act enforcement begins in earnest. With high-risk system requirements taking effect in August 2026, organizations need to move from awareness to compliance. This includes conformity assessments, documentation requirements, human oversight mechanisms, and risk management systems. The cost of non-compliance is not just regulatory — it includes reputational damage and loss of market access.

Governance professionalization accelerates. The demand for AI governance professionals will continue to outpace supply. Organizations that invest in building this capability now — through hiring, training, and certification — will be better positioned than those scrambling to hire once regulatory deadlines arrive.

Tooling matures but doesn't replace judgment. IBM's watsonx.governance, along with competitors, moved AI governance tooling from concept to production in 2025. IDC and Forrester both recognized the maturation of the market. But tools are enablers, not solutions — they support governance processes that still require human judgment, cross-functional collaboration, and organizational commitment.

The governance-strategy alignment deepens. PwC's 2026 AI Predictions warned that companies taking a ground-up approach to AI — crowdsourcing initiatives rather than leading with top-down strategy — rarely achieve transformation. Governance and strategy must be aligned from the start. The organizations that treat governance as integral to their AI strategy, rather than a separate compliance function, will capture disproportionate value.

The Bottom Line

2025 was the year the data caught up with the argument. We no longer need to make the case for AI governance on principle alone. We can make it on performance: 34% higher operating profit, 27% of efficiency gains from governance, 60% of executives confirming ROI benefits, and a clear correlation between governance maturity and AI scaling success.

But the data also shows how far most organizations still have to go. The gap between having a policy (75%) and having fully operational governance (25%) is where incidents happen, value is lost, and regulatory exposure accumulates.

2026 will be the year of execution. The frameworks exist. The standards are published. The business case is proven. What remains is the hard work of implementation — building governance into AI operations, scaling it across the enterprise, and demonstrating that responsible AI isn't a constraint on innovation but the foundation for it.

Sources & Reports Referenced

IAPP — AI Governance Profession Report 2025 (published by IAPP and Credo AI)

IBM Institute for Business Value — "How Governance Increases Velocity" (December 2025)

PwC — 2025 US Responsible AI Survey: From Policy to Practice

McKinsey & Company — The State of AI 2025: Agents, Innovation, and Transformation (November 2025)

PwC — 2025 AI Agent Survey (May 2025)

PwC — 2026 AI Business Predictions

IBM — 2025 Cost of a Data Breach Report

Gartner — 2025 Executive Leaders Poll (1,800+ executives)

AuditBoard — "From Blueprint to Reality" (2025)

Pacific AI — 2025 AI Governance Survey

NACD — 2025 Board Governance Survey

Next Issue

Issue #007: The EU AI Act: A Practical Compliance Roadmap. With high-risk system requirements taking effect in August 2026, we break down what organizations actually need to do — from risk classification to conformity assessment — with a realistic timeline and resource guide.