The Clock Is Ticking

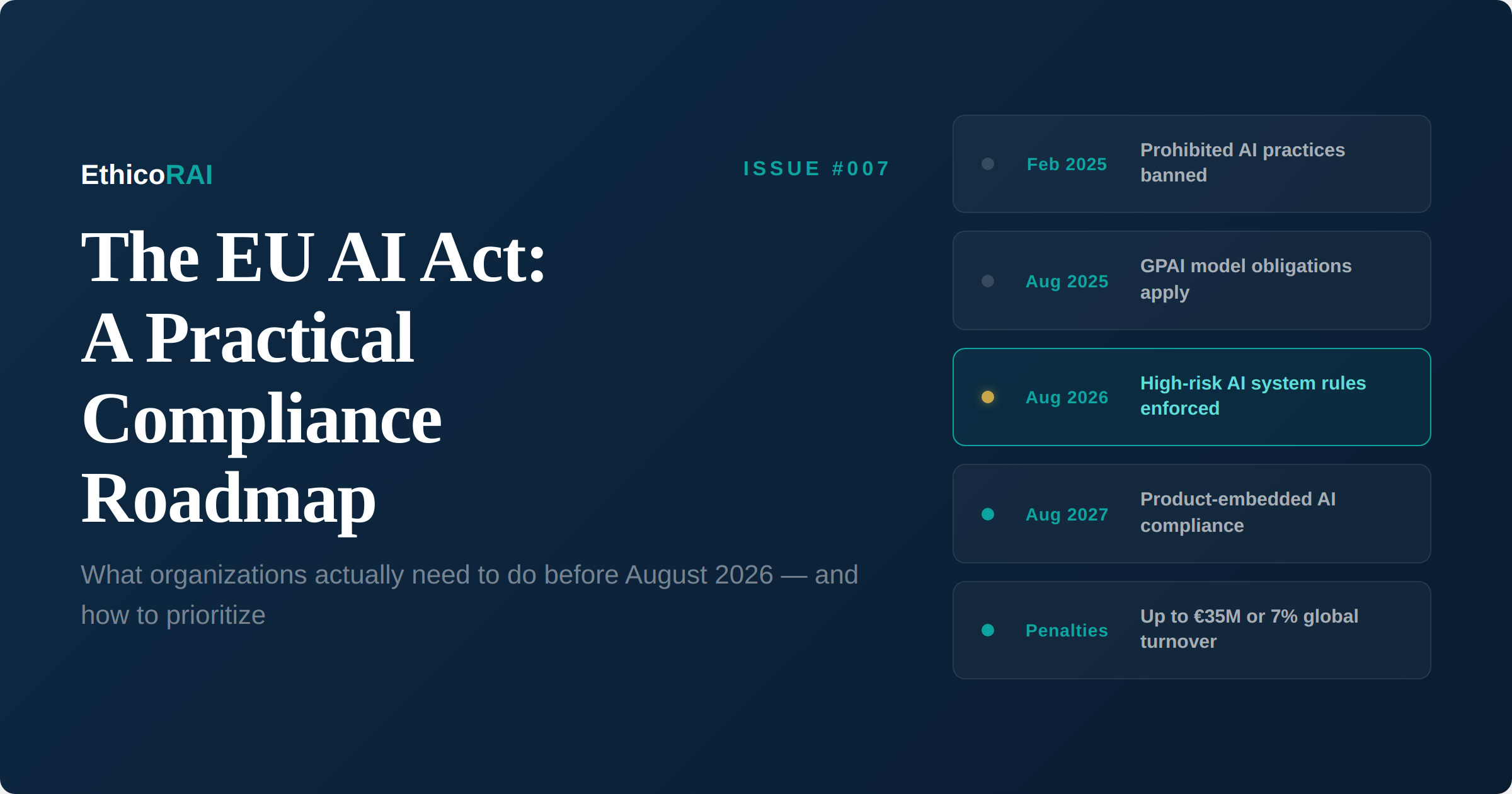

On August 2, 2026, the most consequential AI regulation in the world enters its critical enforcement phase. Requirements for high-risk AI systems under the EU AI Act become applicable, covering AI used in employment decisions, credit scoring, education, law enforcement, and essential services. Penalties under the Act reach up to €35 million or 7% of global annual turnover, whichever is higher, for prohibited practices, and up to €15 million or 3% for breaches of the high-risk requirements.

That deadline is roughly eight months away, and for most organizations the compliance gap is significant. Over half of enterprises lack systematic inventories of the AI systems they have in production or development. Without knowing what AI exists within the organization, risk classification and compliance planning are impossible.

This issue provides a practical compliance roadmap — not a legal treatise, but an operational guide for governance professionals who need to move from awareness to action. We cover what the Act actually requires, how to classify your AI systems, and a phased plan for getting compliant.

Understanding the Risk-Based Framework

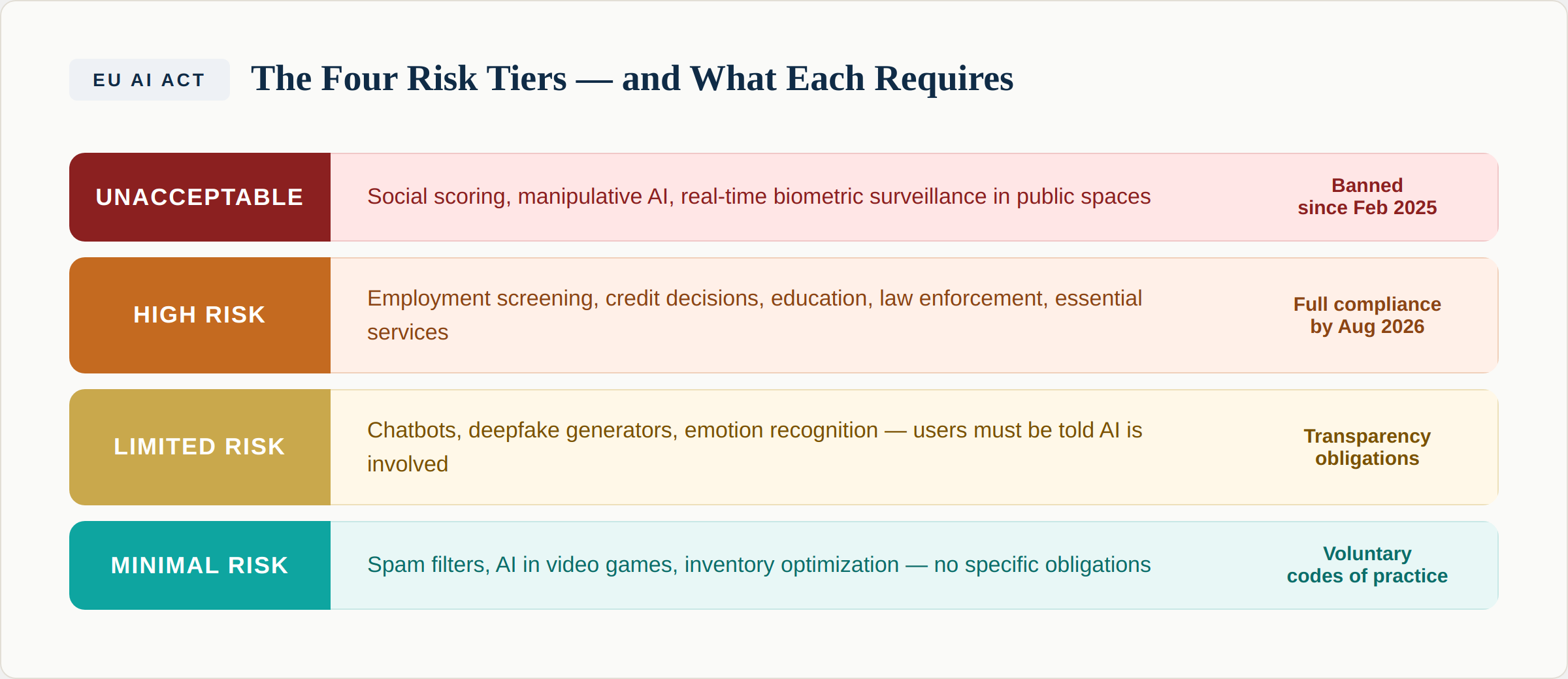

The EU AI Act uses a risk-based classification system that determines what obligations apply to different AI systems. This is the foundation of everything that follows — if you misclassify a system, you'll either over-invest in compliance for low-risk tools or under-invest in controls for high-risk ones.

Unacceptable risk — banned since February 2025. These AI practices are prohibited entirely. They include, among others, social scoring systems (whether operated by public authorities or private actors), AI that manipulates behavior to cause harm, systems that exploit the vulnerabilities of specific groups (such as children, elderly people, or persons with disabilities), and real-time remote biometric identification in publicly accessible spaces for law enforcement (with narrow exceptions). If you operate any of these, you should already have ceased: the prohibitions have applied since February 2, 2025.

High risk — full compliance by August 2026. This is where the heavy obligations fall. The Act defines high-risk AI systems in two ways. Annex III lists specific use cases: AI systems used in biometric identification, critical infrastructure management, education and vocational training, employment and worker management, access to essential services (credit, insurance, public benefits), law enforcement, migration and border control, and administration of justice. In addition, AI systems that are, or serve as safety components of, products already covered by EU safety legislation (medical devices, vehicles, machinery) are classified as high risk through Annex I; obligations for these product-embedded systems apply one year later, from August 2, 2027.

Limited risk — transparency obligations. AI systems that interact with people, generate content, or detect emotions must disclose that AI is involved. This includes chatbots (users must know they're interacting with AI), deepfake generators (content must be labeled), and emotion recognition systems (subjects must be informed). These transparency requirements apply from August 2026.

Minimal risk — no specific obligations. AI systems that don't fall into the above categories — spam filters, AI in video games, inventory optimization tools — face no specific regulatory requirements under the Act. Organizations can voluntarily adopt codes of practice.

What To Do

Start classification now. Go through every AI system in your inventory and determine which risk tier it falls under. Pay special attention to AI used in HR, hiring, performance management, credit or insurance decisions, and customer service; these are the high-risk systems organizations most commonly underestimate. Remember our Shadow AI example from Issue #005: a productivity tracking tool built by a project manager falls under Annex III's employment category (monitoring and evaluating worker performance), which makes it a high-risk AI system under the Act.
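To make this triage repeatable across a large inventory, some teams encode a first-pass screening as code. The sketch below is a simplified illustration, not a legal determination: the tag names (`social_scoring`, `employment`, `chatbot`, and so on) and the `AISystem` record are hypothetical, and a real screening would map each system against the full text of Article 5, Annexes I and III, and the transparency rules. It checks tiers from most to least severe, so each system lands in the strictest tier it triggers.

```python
from dataclasses import dataclass, field
from enum import Enum


class RiskTier(Enum):
    UNACCEPTABLE = "unacceptable"
    HIGH = "high"
    LIMITED = "limited"
    MINIMAL = "minimal"


# Hypothetical screening tags; a real checklist would quote the Act's wording.
PROHIBITED_PRACTICES = {"social_scoring", "harmful_manipulation", "exploiting_vulnerabilities"}
ANNEX_III_AREAS = {"biometrics", "critical_infrastructure", "education", "employment",
                   "essential_services", "law_enforcement", "migration", "justice"}
TRANSPARENCY_TRIGGERS = {"chatbot", "generated_content", "emotion_recognition"}


@dataclass
class AISystem:
    name: str
    practices: set[str] = field(default_factory=set)   # what the system does
    use_areas: set[str] = field(default_factory=set)   # where it is used
    features: set[str] = field(default_factory=set)    # how it interacts with people
    annex_i_product: bool = False  # safety component of (or itself) a regulated product


def classify(system: AISystem) -> RiskTier:
    """Return the strictest tier the system triggers, checking from most to least severe."""
    if system.practices & PROHIBITED_PRACTICES:
        return RiskTier.UNACCEPTABLE
    if system.annex_i_product or system.use_areas & ANNEX_III_AREAS:
        return RiskTier.HIGH
    if system.features & TRANSPARENCY_TRIGGERS:
        return RiskTier.LIMITED
    return RiskTier.MINIMAL
```

For example, `classify(AISystem("productivity-tracker", use_areas={"employment"}))` returns `RiskTier.HIGH`. In practice the tiers are not mutually exclusive (a high-risk hiring chatbot also carries transparency duties), so a production version would return every applicable obligation rather than a single tier.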

What High-Risk Compliance Actually Requires

If you have high-risk AI systems, the Act mandates a comprehensive set of requirements. Here is what they mean in practice:

Risk management system (Article 9). A continuous, iterative process that identifies and mitigates risks throughout the AI system's lifecycle. This isn't a one-time assessment — it must be updated as the system evolves. If you've implemented ISO 42001, your risk management framework maps directly to this requirement.

Data governance (Article 10). Training, validation, and testing datasets must meet quality criteria. You must ensure data is relevant, sufficiently representative, and, to the best extent possible, free of errors and complete. For systems that may affect fundamental rights, you need to examine the data for potential biases. This is where fairness, one of the nine responsible AI objectives we covered in Issue #003, becomes a regulatory requirement, not just a principle.
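Article 10 does not prescribe specific metrics, but two basic checks illustrate what "complete" and "representative" can mean operationally. This sketch assumes training records are plain dictionaries, that you have a reference distribution for a relevant attribute (for example, age band) from your own analysis, and that a 5-percentage-point tolerance is acceptable; all three are illustrative choices, not requirements of the Act.

```python
from collections import Counter


def missing_rate(rows: list[dict], column: str) -> float:
    """Share of records where `column` is absent or None (a simple completeness check)."""
    if not rows:
        return 0.0
    return sum(1 for row in rows if row.get(column) is None) / len(rows)


def representation_gaps(rows: list[dict], group_column: str,
                        reference_shares: dict[str, float],
                        tolerance: float = 0.05) -> dict[str, float]:
    """Groups whose share of the data deviates from the reference share by more than `tolerance`."""
    counts = Counter(row.get(group_column) for row in rows)
    total = len(rows)
    gaps = {}
    for group, expected in reference_shares.items():
        observed = counts[group] / total if total else 0.0
        if abs(observed - expected) > tolerance:
            gaps[group] = round(observed - expected, 3)  # positive = over-represented
    return gaps
```

A flagged gap is a prompt for investigation and documentation, not an automatic failure; whether a given skew matters depends on the system's intended purpose.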

Technical documentation (Article 11). Detailed documentation of the AI system before it's placed on the market. This includes a general description of the system, its intended purpose, how it was developed, its performance metrics, and the risk management measures applied. Think of this as the AI equivalent of a product specification sheet — but far more detailed.

Record-keeping and logging (Article 12). High-risk AI systems must automatically record events (logs) throughout their operation. These logs must be sufficient to allow for post-event analysis, particularly for identifying situations that may result in risks. This connects directly to the monitoring and drift detection we discussed in Issue #004 — logging is not optional under the Act.

Transparency and information to deployers (Article 13). Systems must be designed to be sufficiently transparent to enable deployers to interpret and use the system's output appropriately. Instructions for use must be provided, including the system's capabilities, limitations, and the degree of human oversight needed.

Human oversight (Article 14). High-risk AI systems must be designed to allow effective human oversight. This includes enabling the humans overseeing the system to understand its capabilities and limitations, monitor its operation, be able to interpret outputs, and be able to override or halt the system. The level of oversight must be proportionate to the risks.
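The override and halt capabilities are easiest to reason about when they are built into the decision path itself. The sketch below is a hypothetical wrapper, assuming the model returns a recommendation label: the model only recommends, a named reviewer's choice always wins, and a halt switch refuses further decisions until a human resumes the system.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Decision:
    recommendation: str   # what the model proposed
    final: str            # what was actually decided
    overridden: bool      # True when the human changed the outcome


class OversightGate:
    """Hypothetical wrapper: the model recommends, a human can override or halt."""

    def __init__(self, model: Callable[[dict], str]):
        self.model = model
        self.halted = False

    def halt(self) -> None:
        # The "stop" capability: refuse all further decisions until resumed.
        self.halted = True

    def resume(self) -> None:
        self.halted = False

    def decide(self, case: dict, reviewer_choice: Optional[str] = None) -> Decision:
        if self.halted:
            raise RuntimeError("system halted by human overseer")
        recommendation = self.model(case)
        # The reviewer's choice, when given, always takes precedence over the model.
        final = recommendation if reviewer_choice is None else reviewer_choice
        return Decision(recommendation, final, overridden=final != recommendation)
```

Keeping the original recommendation alongside the final outcome also gives you the data to monitor how often reviewers disagree with the system, which is a useful oversight signal in its own right.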

Accuracy, robustness, and cybersecurity (Article 15). Systems must achieve appropriate levels of accuracy and be resilient to errors, faults, and attempts at manipulation. Cybersecurity measures must protect against unauthorized access or data corruption.

Quality management system (Article 17). Providers must establish a quality management system that covers strategy for regulatory compliance, design and development processes, testing and validation procedures, data management practices, risk management, post-market monitoring, incident reporting, and communication with competent authorities.

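Conformity assessment (Article 43). Before placing a high-risk system on the market, providers must conduct a conformity assessment to demonstrate compliance. For most Annex III systems, this is an internal assessment (self-certification). For biometric systems listed in Annex III, a third-party conformity assessment body (a notified body) is required unless the provider has fully applied harmonized standards or common specifications. Systems that are high risk under Annex I follow the conformity assessment procedure of their product legislation.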
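The routing rules can be summarized as a small decision function. This is a simplified sketch of Article 43 under stated assumptions: the helper name and return labels are hypothetical, Annex III point 1 is taken to mean the biometrics category, and the many details of each procedure are left out.

```python
from typing import Optional


def conformity_route(annex: str, annex_iii_point: Optional[int] = None,
                     harmonized_standards_applied: bool = False) -> str:
    """Simplified reading of Article 43: which conformity assessment route applies."""
    if annex == "I":
        # Product-embedded systems follow the product legislation's own procedure.
        return "sectoral procedure"
    if annex != "III" or annex_iii_point is None:
        raise ValueError("expected annex 'I', or annex 'III' with a point number")
    if annex_iii_point == 1:  # biometrics
        if harmonized_standards_applied:
            # With standards fully applied, the provider may choose either route.
            return "internal control or notified body"
        return "notified body"
    # All other Annex III categories: internal control, no notified body.
    return "internal control"
```

For example, a hiring tool (Annex III, point 4) routes to `"internal control"`, while a biometric system built without harmonized standards routes to `"notified body"`.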

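EU database registration (Articles 49 and 71). Providers must register high-risk AI systems listed in Annex III in the EU database before placing them on the market or putting them into service. Deployers that are public authorities must also register their use of such systems.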

Key Insight

If this list feels overwhelming, notice how many requirements align with what a well-designed AI management system already provides. Risk management, data governance, documentation, monitoring, human oversight, quality management — these are the pillars of ISO 42001. Organizations that have been building governance frameworks are significantly ahead. Those starting from scratch have eight months of intensive work ahead.

Provider vs. Deployer: Know Your Role

The Act regulates based on your role in the AI value chain, and the obligations differ significantly:

Providers are organizations that develop AI systems (or have them developed) and place them on the market under their own name or trademark. Providers bear the heaviest obligations: conformity assessment, technical documentation, quality management, post-market monitoring, and registration.

Deployers are organizations that use AI systems under their authority — but didn't build them. Deployers must use systems according to instructions, ensure human oversight, monitor operations, keep logs, conduct fundamental rights impact assessments (for certain use cases), and inform individuals that they are subject to high-risk AI decisions.

Many organizations are both: they deploy third-party AI platforms while also building proprietary AI tools. Each system needs to be assessed independently for your role. And critically — if you substantially modify a provider's AI system, you may become a provider yourself under the Act, inheriting all provider obligations.
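Because the role has to be assessed per system, it helps to record the underlying facts explicitly and derive the roles from them. The sketch below is a simplified, hypothetical model: it captures only the provider and deployer definitions above plus the substantial-modification rule, and ignores other ways Article 25 can turn a deployer into a provider.

```python
from dataclasses import dataclass


@dataclass
class SystemFacts:
    built_or_commissioned: bool           # you developed it, or had it developed for you
    offered_under_own_name: bool          # placed on the market / put into service under your name or trademark
    used_under_own_authority: bool        # your organization operates it
    substantially_modified: bool = False  # you substantially modified someone else's system


def roles(facts: SystemFacts) -> set[str]:
    """Derive the Act's roles for one system from recorded facts (simplified)."""
    result: set[str] = set()
    if (facts.built_or_commissioned and facts.offered_under_own_name) or facts.substantially_modified:
        result.add("provider")
    if facts.used_under_own_authority:
        result.add("deployer")
    return result
```

An in-house HR tool your organization built and uses comes out as both provider and deployer; a third-party SaaS platform used as delivered comes out as deployer only, until a substantial modification flips it.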

The Digital Omnibus: A Possible Extension

An important development to track: in late 2025, the European Commission proposed the "Digital Omnibus" package, which includes potential modifications to the AI Act timeline. The proposal would link the application of high-risk obligations to the availability of harmonized standards and support tools, potentially pushing the deadline to December 2027 for Annex III systems and August 2028 for product-embedded systems.

However — and this is critical — the Omnibus is still under negotiation between the European Parliament and the Council. It is not adopted, and it may change significantly. Organizations should not assume the extension will materialize. Prudent compliance planning treats August 2026 as the binding deadline. If the Omnibus provides additional time, that's a bonus for refinement — not an excuse to delay starting.
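One way to enforce this discipline in planning tools is to hard-code the dates in the Act as adopted and move them only when an amendment is actually adopted. In the sketch below, the function names are hypothetical; the dates are those in the adopted Act (August 2, 2026 for Annex III systems, August 2, 2027 for Annex I systems). The Omnibus dates are deliberately absent because they are only proposals.

```python
from datetime import date
from typing import Optional

# Application dates in the AI Act as adopted.
BASELINE_DEADLINES = {
    "annex_iii": date(2026, 8, 2),  # stand-alone high-risk use cases
    "annex_i": date(2027, 8, 2),    # AI in products covered by EU safety legislation
}


def planning_deadline(category: str, adopted_change: Optional[date] = None) -> date:
    """Plan against the adopted date; only an amendment that has been adopted moves it."""
    return adopted_change if adopted_change is not None else BASELINE_DEADLINES[category]


def days_remaining(category: str, today: date, adopted_change: Optional[date] = None) -> int:
    """Calendar days left until the planning deadline."""
    return (planning_deadline(category, adopted_change) - today).days
```

Counting from December 1, 2025, for example, `days_remaining("annex_iii", date(2025, 12, 1))` gives 244 days, consistent with the "roughly eight months" estimate. Passing a proposed but unadopted date as `adopted_change` would defeat the purpose of the parameter.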

Your Compliance Roadmap

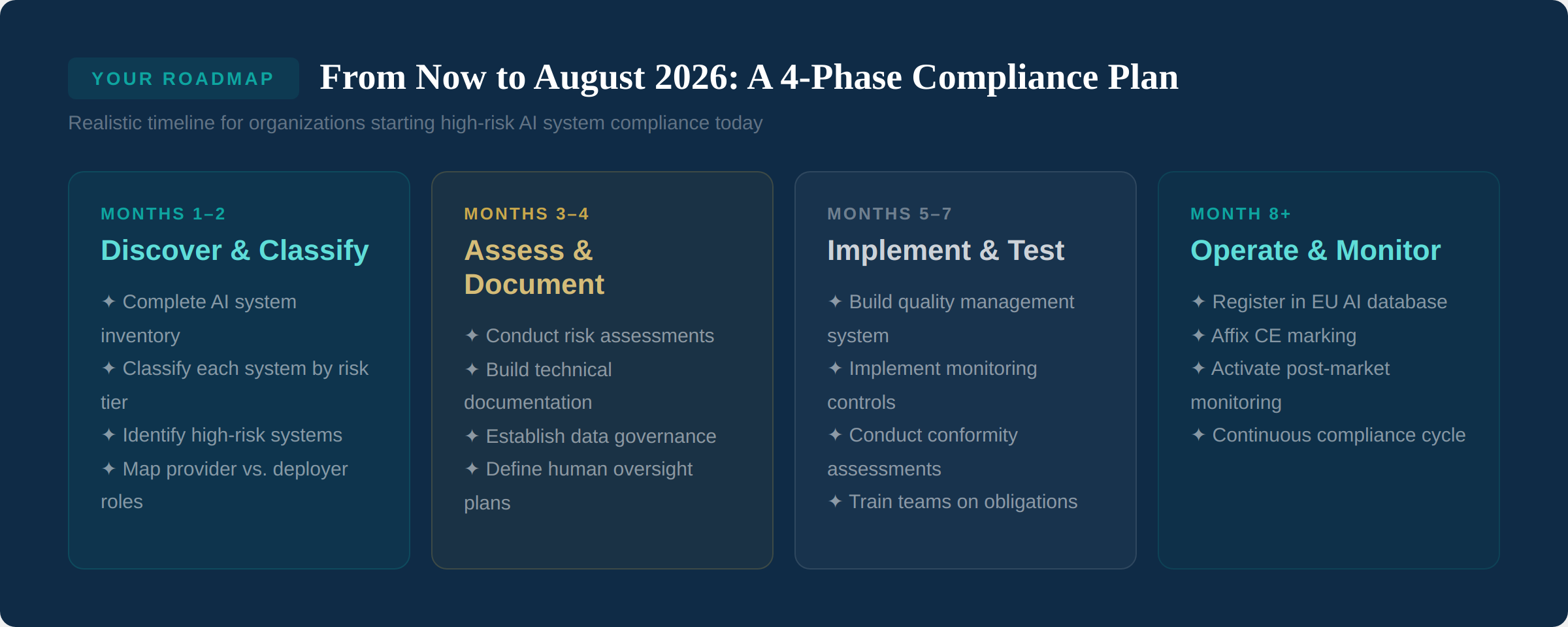

Phase 1: Discover and Classify (Months 1-2). Complete your AI system inventory — every AI tool, model, and application in use across the organization, including Shadow AI. Classify each system by risk tier. Identify which systems qualify as high-risk under Annex I or Annex III. Map whether you are a provider, deployer, or both for each system. Prioritize the highest-risk systems for immediate attention.
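The final step of Phase 1, prioritization, can be made explicit with a sort order. This sketch assumes each inventory entry is a dictionary with hypothetical `name`, `tier`, and `roles` fields, and it applies one reasonable ordering: prohibited systems first (they must be switched off, not made compliant), then high risk, with systems where you are the provider ahead of deployer-only ones because provider obligations are heavier.

```python
# Lower number = handle first.
TIER_PRIORITY = {"unacceptable": 0, "high": 1, "limited": 2, "minimal": 3}


def prioritize(inventory: list[dict]) -> list[dict]:
    """Order inventory entries by risk tier, then provider role, then name."""
    return sorted(
        inventory,
        key=lambda s: (
            TIER_PRIORITY[s["tier"]],
            "provider" not in s["roles"],  # False sorts first, so providers lead
            s["name"],                     # stable, readable tie-breaker
        ),
    )
```

Sorting by name last simply keeps the output deterministic; teams may prefer to break ties by the number of people affected instead.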

Phase 2: Assess and Document (Months 3-4). For each high-risk system, conduct a formal risk assessment. Begin building technical documentation. Establish or strengthen data governance practices. Define human oversight plans — who oversees each system, how they can intervene, what training they need. For deployers, conduct fundamental rights impact assessments where required.

Phase 3: Implement and Test (Months 5-7). Build or formalize your quality management system. Implement monitoring and logging controls. Conduct conformity assessments: internal for most systems, with a notified body where the Act requires one (for example, certain biometric systems). Train teams on their specific obligations. Test incident reporting procedures.

Phase 4: Operate and Monitor (Month 8 onwards). Register high-risk systems in the EU database. Affix CE marking where required. Activate post-market monitoring. Establish the continuous compliance cycle — governance is not a project with an end date, it's an ongoing operational capability.
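For teams tracking the program in a dashboard or ticketing system, the roadmap above can be encoded as configuration. The month ranges and deliverables below are taken from the four phases; the data layout and the `phase_for_month` helper are hypothetical, and month 1 is whenever your program starts.

```python
# (first month, last month, phase name, deliverables) from the roadmap above.
ROADMAP = [
    (1, 2, "Discover and Classify",
     ["AI inventory incl. Shadow AI", "risk tier per system", "provider/deployer role per system"]),
    (3, 4, "Assess and Document",
     ["risk assessments", "technical documentation", "human oversight plans", "FRIAs where required"]),
    (5, 7, "Implement and Test",
     ["quality management system", "logging and monitoring", "conformity assessments",
      "incident reporting test"]),
]
ONGOING = ("Operate and Monitor",
           ["EU database registration", "CE marking where required", "post-market monitoring"])


def phase_for_month(month: int) -> tuple[str, list[str]]:
    """Return the phase name and its deliverables for a program month (1-based)."""
    if month < 1:
        raise ValueError("months are counted from 1")
    for start, end, name, deliverables in ROADMAP:
        if start <= month <= end:
            return name, deliverables
    return ONGOING  # month 8 onwards: continuous operation, no end date
```

Note that the final phase has no upper bound, which mirrors the point that governance is an ongoing operational capability rather than a project.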

What To Do

If you haven't started, Phase 1 is your immediate priority. You cannot comply with a regulation that applies to specific AI systems if you don't know what AI systems you have. The AI inventory exercise from Issue #001 and the Shadow AI discovery process from Issue #005 are your starting points. Everything else builds on that foundation.

The ISO 42001 Advantage

Organizations that have already implemented or are pursuing ISO 42001 certification have a significant head start on EU AI Act compliance. The overlap is substantial: ISO 42001's requirements for risk assessment, AI system impact assessment, data governance, monitoring, documentation, and continuous improvement map directly to the Act's obligations for high-risk systems.

This doesn't mean ISO 42001 certification equals EU AI Act compliance — the Act has specific requirements (CE marking, EU database registration, conformity assessment procedures) that go beyond what ISO 42001 covers. But the management system foundation, the organizational processes, and the governance culture that ISO 42001 builds are exactly what the Act demands. As we discussed in Issue #004, the paradigm shift in impact assessment — evaluating effects on individuals, groups, and society — prepares organizations for the fundamental rights dimension of the EU AI Act.

What Happens If You're Not Ready

The penalty framework is structured in three tiers. For prohibited AI practices: up to €35 million or 7% of global annual turnover. For other infringements of the Act (including non-compliance with high-risk requirements): up to €15 million or 3% of global annual turnover. For supplying incorrect or misleading information to authorities: up to €7.5 million or 1% of global annual turnover.
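The "whichever is higher" rule means the effective cap scales with company size. The sketch below computes the maximum possible fine per tier; the tier keys are hypothetical labels. It also reflects that for SMEs and start-ups the lower of the two amounts applies instead. These are ceilings only: actual fines are set case by case by national authorities.

```python
# (fixed cap in EUR, percentage of worldwide annual turnover) per penalty tier.
PENALTY_TIERS = {
    "prohibited_practice": (35_000_000, 7),
    "other_obligation": (15_000_000, 3),
    "misleading_information": (7_500_000, 1),
}


def max_fine_eur(tier: str, global_turnover_eur: float, is_sme: bool = False) -> float:
    """Upper bound of the fine for one infringement tier."""
    fixed_cap, percent = PENALTY_TIERS[tier]
    turnover_cap = global_turnover_eur * percent / 100
    # Most undertakings: whichever cap is higher. SMEs and start-ups:
    # whichever is lower (Article 99(6)).
    return min(fixed_cap, turnover_cap) if is_sme else max(fixed_cap, turnover_cap)
```

For a company with €1 billion in global turnover, the prohibited-practice ceiling is therefore €70 million rather than €35 million, while a breach of the high-risk requirements at €200 million turnover is still capped at the fixed €15 million.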

But financial penalties are only one dimension of non-compliance risk. Individuals affected by non-compliant AI decisions may bring civil liability claims. Mandatory recalls or suspension of deployment can disrupt operations. Reputational damage from public enforcement actions erodes customer and investor confidence. And non-compliant systems cannot legally be placed on the EU market, which means losing access to that market entirely.

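The Act's extraterritorial reach means these risks are not limited to EU-based organizations. It covers providers that place AI systems on the EU market or put them into service there, wherever they are established, as well as providers and deployers outside the EU whose AI systems produce output that is used in the EU. If your AI touches EU residents, assume the Act applies to you.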

The Bottom Line

The EU AI Act is the most comprehensive AI regulation in the world, and its high-risk requirements are approaching fast. But it is navigable — especially for organizations that have been building AI governance capabilities. The Act doesn't require reinventing your approach to AI oversight. It requires formalizing it, documenting it, and making it demonstrable.

Start with what you know: inventory your AI, classify it, and focus on the high-risk systems first. Build from there. Eight months is tight but sufficient if you start now and prioritize effectively.

Next Issue

Issue #008: Data Governance for AI — Building the Foundation. Data quality, lineage, and governance are consistently cited as the top barrier to trustworthy AI. We explore what data governance for AI actually looks like in practice and how it connects to both ISO 42001 and the EU AI Act's data requirements.