The Shift You Can't Ignore

If 2024 was the year organizations experimented with chatbots and 2025 was the year they piloted generative AI, 2026 is shaping up to be the year of the agent — and the year our governance frameworks break under the weight of what that actually means.

The distinction matters. Generative AI suggests. Agentic AI acts. It calls APIs, executes code, accesses databases, chains decisions together, and does it all with minimal human oversight. That gap between suggesting and acting isn't incremental. It's a fundamental shift in the risk surface, and most organizations haven't caught up.

This issue rounds up the most important developments in agentic AI governance and security from the past month — what's happening, why it matters, and what you should be doing about it.

1. Singapore Drops the First Government Framework for Agentic AI

On January 22, 2026, Singapore's Infocomm Media Development Authority (IMDA) released a draft Model AI Governance Framework for Agentic AI at the World Economic Forum in Davos. It's the first government-backed framework specifically addressing agentic systems, following a similar (but less detailed) WEF framework from November 2025.

Two concepts in Singapore's framework deserve your attention: action-space (what tools and systems an agent can access) and autonomy (what instructions govern the agent and how humans oversee it). The framework recommends four actions: assess and bound risks before deployment, increase human accountability, implement monitoring and logging, and establish kill-switch capabilities.

This matters because Singapore's previous AI governance frameworks have been widely adopted across Asia-Pacific. Expect this one to carry similar influence — and to inform binding regulations in the region.

Even if you don't operate in Singapore, use their action-space and autonomy concepts as a lens to evaluate your own AI agents. For every agent you deploy: what can it access? What decisions can it make without a human? If you can't answer both questions precisely, that's your first governance gap.
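The two questions above can be turned into a simple record per agent. The sketch below is a hypothetical illustration, not part of IMDA's framework: `AgentProfile`, `governance_gaps`, and the tool names are invented for the example. It flags an agent whose action-space is undocumented, or any reachable tool that lacks an explicit decision about whether a human must approve its use.

```python
from dataclasses import dataclass, field


@dataclass
class AgentProfile:
    """Hypothetical record of one agent's action-space and autonomy."""

    name: str
    # Action-space: tools and systems the agent can reach.
    tools: set[str] = field(default_factory=set)
    # Autonomy: tools it may call with no human in the loop...
    autonomous: set[str] = field(default_factory=set)
    # ...and tools that must wait for human approval.
    needs_approval: set[str] = field(default_factory=set)


def governance_gaps(agent: AgentProfile) -> list[str]:
    """List the action-space and autonomy questions left unanswered."""
    gaps = []
    if not agent.tools:
        gaps.append(f"{agent.name}: action-space not documented")
    # Every reachable tool needs an explicit autonomy decision.
    undecided = agent.tools - agent.autonomous - agent.needs_approval
    for tool in sorted(undecided):
        gaps.append(f"{agent.name}: no autonomy decision for '{tool}'")
    return gaps


support_bot = AgentProfile(
    name="support-bot",
    tools={"read_tickets", "issue_refund"},
    autonomous={"read_tickets"},
)
print(governance_gaps(support_bot))
# ["support-bot: no autonomy decision for 'issue_refund'"]
```

An empty result means both questions have a documented answer for that agent. It does not mean the answers are good ones.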

2. OWASP Releases Top 10 Security Risks for Agentic Applications

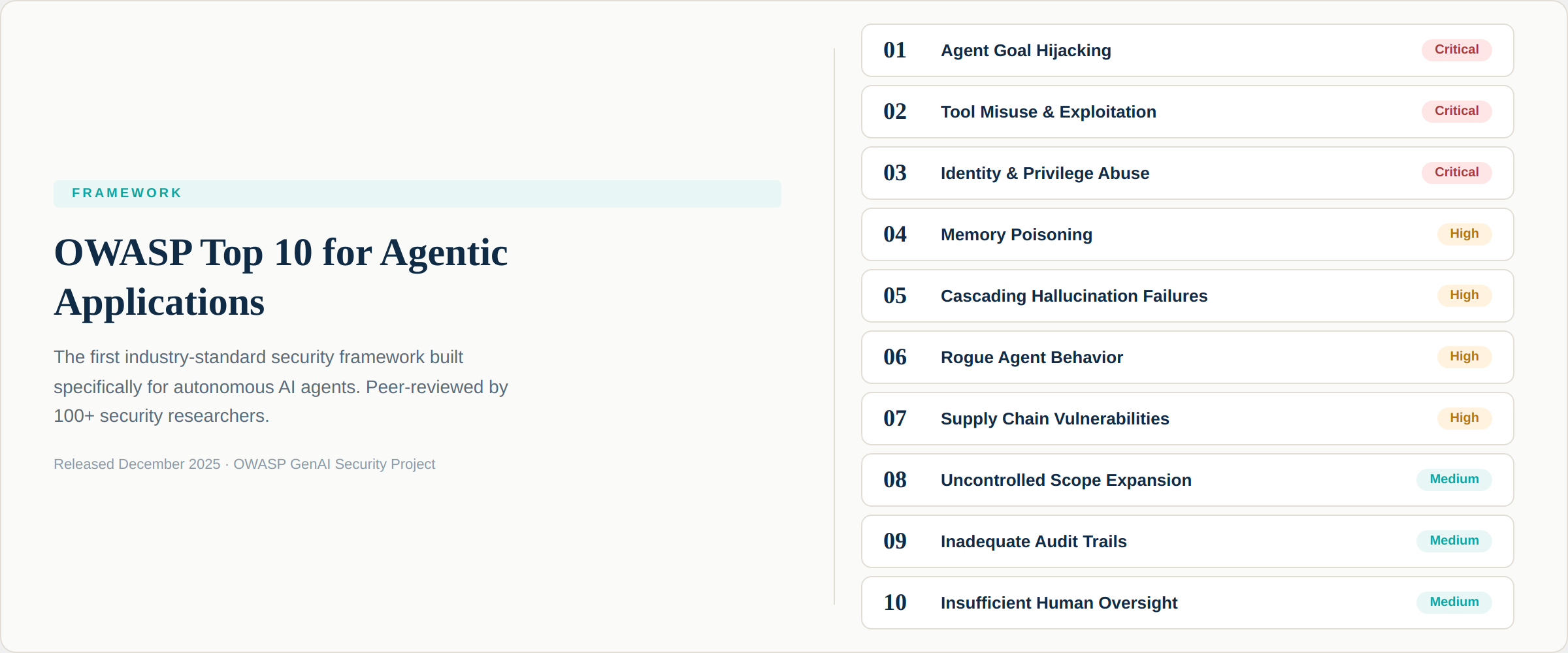

The OWASP community, in collaboration with over 100 security researchers, released the Top 10 for Agentic Applications (2026). The number one risk: Agent Goal Hijacking — where attackers manipulate an agent's objectives by injecting malicious instructions into data the agent processes.

The other nine risks read like a field guide to everything that can go wrong when you give AI systems the ability to act. They include tool misuse, privilege escalation, memory poisoning, cascading failures across multi-agent systems, and rogue agents operating outside intended parameters.

What makes this framework valuable is that it goes beyond theoretical risks. It includes threat mappings and concrete mitigation guidance for each risk category, making it immediately actionable for security and governance teams.

Agent Goal Hijacking is particularly dangerous because agents cannot reliably separate instructions from data. A single poisoned document in a retrieval pipeline — a resume, an email, a meeting invite — can redirect an agent to exfiltrate sensitive files instead of summarizing them. If your agents process external data, this isn't theoretical. It's your most immediate threat.
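Because injected instructions are hard to detect reliably, one common mitigation pattern is to limit what a hijacked agent can do rather than trying to spot the injection. The minimal sketch below assumes a hypothetical per-task tool allowlist (`TASK_TOOL_ALLOWLIST`, `authorize_tool_call`, and the tool names are illustrative, not taken from OWASP's guidance). The permission check depends only on the task the user assigned, so a poisoned document cannot add tools to the list.

```python
# Hypothetical policy: the only tools each task type may call.
TASK_TOOL_ALLOWLIST = {
    "summarize_document": {"read_document"},
    "triage_email": {"read_email", "label_email"},
}


class ToolCallBlocked(Exception):
    """Raised when an agent proposes a tool outside its task's allowlist."""


def authorize_tool_call(task: str, tool: str) -> None:
    """Allow a tool call only if the user-assigned task permits it.

    The decision depends on the task the user requested, never on text the
    agent read while working, so an instruction injected into a document
    cannot widen what the agent is allowed to do.
    """
    allowed = TASK_TOOL_ALLOWLIST.get(task, set())  # unknown task: deny all
    if tool not in allowed:
        raise ToolCallBlocked(f"{tool!r} is not permitted during {task!r}")


# Tool calls a model might propose after reading a poisoned resume.
for tool in ["read_document", "upload_file"]:
    try:
        authorize_tool_call("summarize_document", tool)
        print(f"allowed: {tool}")
    except ToolCallBlocked as err:
        print(f"blocked: {err}")
```

The gate does not stop the agent from being misled. It does stop a summarization task from turning into file exfiltration, and unknown tasks are denied by default.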

3. The Identity Crisis Nobody Predicted

A Cloud Security Alliance survey of 285 IT and security professionals revealed what may be the most overlooked agentic AI risk: identity management. Every AI agent is an identity actor — it has credentials, permissions, and access to organizational resources. Yet only 23% of organizations have a formal strategy for managing agent identities.

The findings are sobering. Teams are sharing human credentials and access tokens with AI agents because they have no established alternative for securing identity within autonomous workflows. Agent-to-agent communication creates new attack vectors: a compromised research agent can insert hidden instructions into output consumed by a financial agent, which then executes unintended transactions.

The traditional identity and access management playbook — static credentials, over-permissioned tokens, siloed policy enforcement — simply doesn't work for entities that operate continuously, make runtime decisions, and span multiple platforms simultaneously.

Audit how your AI agents authenticate. If they're using shared human credentials or static API tokens, you have an urgent governance gap. Each agent should have its own identity with least-privilege access that can be monitored and revoked independently.
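The target state described above (one identity per agent, least-privilege scopes, independent revocation) can be sketched as a small in-memory registry. This is a hypothetical illustration only: `AgentIdentityRegistry`, the scope strings, and the 15-minute lifetime are assumptions, and a real deployment would rely on an identity provider rather than hand-rolled tokens.

```python
import secrets
import time
from dataclasses import dataclass


@dataclass
class AgentCredential:
    agent_id: str
    scopes: frozenset[str]
    expires_at: float
    revoked: bool = False


class AgentIdentityRegistry:
    """Hypothetical registry: each agent gets its own short-lived token."""

    def __init__(self) -> None:
        self._creds: dict[str, AgentCredential] = {}  # token -> credential

    def issue(self, agent_id: str, scopes: set[str], ttl_seconds: float = 900.0) -> str:
        """Mint a token bound to one agent and only the scopes it needs."""
        token = secrets.token_urlsafe(32)
        self._creds[token] = AgentCredential(
            agent_id, frozenset(scopes), time.time() + ttl_seconds
        )
        return token

    def revoke_agent(self, agent_id: str) -> None:
        """Cut off one agent without touching any other agent's access."""
        for cred in self._creds.values():
            if cred.agent_id == agent_id:
                cred.revoked = True

    def check(self, token: str, scope: str) -> bool:
        """True only for a known, unrevoked, unexpired token holding the scope."""
        cred = self._creds.get(token)
        return (
            cred is not None
            and not cred.revoked
            and time.time() < cred.expires_at
            and scope in cred.scopes
        )


registry = AgentIdentityRegistry()
research = registry.issue("research-agent", {"docs:read"})
finance = registry.issue("finance-agent", {"ledger:read", "payments:create"})
print(registry.check(research, "payments:create"))  # False: outside its scopes
registry.revoke_agent("finance-agent")
print(registry.check(finance, "ledger:read"))  # False: revoked independently
print(registry.check(research, "docs:read"))  # True: other agents unaffected
```

Every `check` call is also a natural place to emit an audit record, which gives you the per-agent monitoring trail this sketch leaves out.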

4. 97% of Organizations Have Had an AI Security Incident

The Cisco State of AI Security 2026 report landed with a striking data point: 97% of organizations reported an AI-related security incident, and most lacked proper AI access controls. Meanwhile, 63% lacked AI governance policies entirely.

The report documents how state-sponsored actors are already weaponizing agentic capabilities. A China-linked group reportedly automated 80 to 90 percent of a cyberattack chain by directing an AI coding assistant to scan ports, identify vulnerabilities, and develop exploit scripts. Russian operators integrated language models into malware workflows. North Korean actors used generative AI to create deepfake job applicants.

For governance teams, the implication is clear: agentic AI isn't just a tool your organization deploys. It's also a tool being deployed against you. Your governance framework needs to address both sides of that equation.

5. The Agentic Security Paradox

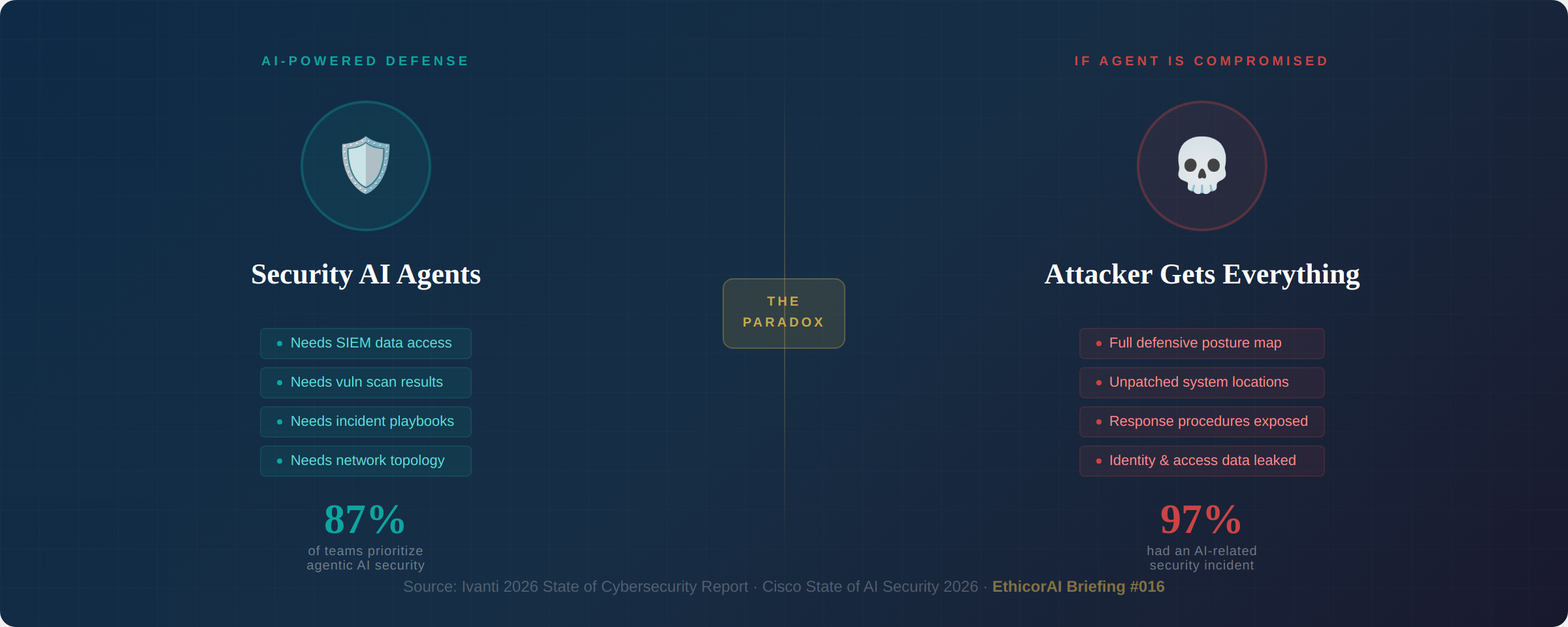

Here's the trap that almost nobody is discussing openly. Security teams need AI agents to defend against AI-powered attacks. But deploying those agents creates the exact data exposure risks they're trying to prevent.

Consider: a security AI agent needs access to SIEM data, vulnerability scan results, incident response playbooks, identity data, and network topology. If that agent is compromised through prompt injection, the attacker gets more than sensitive records. They get a complete map of your defensive posture: what you can detect, where your systems are unpatched, and exactly how you respond to breaches.

Ivanti's 2026 State of Cybersecurity Report found that 87% of security teams consider integrating agentic AI a priority, yet most lack the governance processes to deploy it safely. The eagerness outpaces the readiness by a wide margin.

If your organization is deploying AI agents for security operations, apply the same governance rigor to those agents that you'd apply to a new employee with privileged access. That means background checks (model provenance), access controls (least-privilege permissions), monitoring (behavioral analytics), and the ability to terminate access immediately (kill switches).
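Two of those controls, monitoring and immediate termination, can be sketched as a wrapper that every agent action passes through. The example below is a hypothetical illustration: `KillSwitch`, `run_action`, and the action names are invented, and a production kill switch would also revoke credentials and stop in-flight work, not just refuse new actions.

```python
import logging
import threading
from datetime import datetime, timezone
from typing import Callable

logging.basicConfig(level=logging.INFO, format="%(message)s")
log = logging.getLogger("agent-audit")


class AgentHalted(RuntimeError):
    """Raised when an action is attempted after the kill switch trips."""


class KillSwitch:
    """Hypothetical stop flag that an operator can trip at any time."""

    def __init__(self) -> None:
        self._tripped = threading.Event()  # thread-safe flag

    def trip(self, reason: str) -> None:
        log.warning("KILL SWITCH tripped: %s", reason)
        self._tripped.set()

    @property
    def tripped(self) -> bool:
        return self._tripped.is_set()


def run_action(agent_id: str, action: str, switch: KillSwitch,
               execute: Callable[[], object]) -> object:
    """Log every attempted action and refuse to run anything once halted."""
    stamp = datetime.now(timezone.utc).isoformat()
    if switch.tripped:
        log.info("%s %s DENIED %s (halted)", stamp, agent_id, action)
        raise AgentHalted(f"{agent_id} is halted")
    log.info("%s %s RUN %s", stamp, agent_id, action)
    return execute()


switch = KillSwitch()
print(run_action("soc-agent", "query_siem", switch, lambda: "42 open alerts"))
switch.trip("anomalous bulk export of scan results")
try:
    run_action("soc-agent", "export_scan_results", switch, lambda: "exported")
except AgentHalted as err:
    print(f"stopped: {err}")
```

Denied attempts are logged as well as allowed ones, so the audit trail shows what a compromised agent tried to do after it was stopped.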

6. 40% of Enterprise Apps Will Embed Agents by Year-End

According to the Cloud Security Alliance, 40% of enterprise applications will embed AI agents by the end of 2026, up from less than 5% in 2025. Every organization surveyed has agentic AI on its roadmap.

The scale and speed of this adoption are outrunning governance capacity. IBM's watsonx.governance, updated in December 2025, represents one of the first commercial responses, introducing agent inventory management, behavior monitoring, and hallucination detection specifically for agentic systems. But commercial tooling is still early, and most organizations are building governance processes from scratch.

The regulatory landscape is also scrambling to keep pace. The EU AI Act's high-risk system rules take effect in August 2026, but the Act was drafted before agentic AI entered mainstream deployment. How existing regulatory categories apply to autonomous agents remains an open question — and one that organizations need to answer for themselves before regulators answer it for them.

What This Means for You

If you're responsible for AI governance in your organization, here are the three things you should do this month:

1. Inventory your agents. Not just the ones your AI team built — the ones embedded in vendor tools, the ones employees are using without IT approval, and the ones your security team is piloting. You cannot govern what you cannot see.

2. Map each agent's action-space. What can it access? What can it do? What decisions does it make without human approval? Document the answers. This becomes the foundation of your agentic governance framework.

3. Read the OWASP Top 10 for Agentic Applications. Share it with your security team, your AI team, and your risk committee. It's the most actionable resource available today for understanding what can go wrong and how to prevent it.
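For the inventory step, the hard part is combining sightings from different places. A minimal sketch, assuming you can export agent names from each discovery source (the source labels, agent names, and `reconcile_inventory` helper are hypothetical), merges those sightings and flags any agent missing from the approved list:

```python
from collections import defaultdict


def reconcile_inventory(discovered: dict[str, list[str]],
                        approved: set[str]) -> dict[str, dict]:
    """Merge agent sightings from several sources and flag unapproved ones.

    `discovered` maps a source label (e.g. "vendor_tools") to the agent
    names seen there. An agent seen in several sources appears once.
    """
    seen_in: defaultdict[str, set[str]] = defaultdict(set)
    for source, agents in discovered.items():
        for agent in agents:
            seen_in[agent].add(source)
    return {
        agent: {"sources": sorted(sources), "approved": agent in approved}
        for agent, sources in sorted(seen_in.items())
    }


inventory = reconcile_inventory(
    {
        "ai_team": ["contract-reviewer"],
        "vendor_tools": ["crm-assistant", "contract-reviewer"],
        "network_logs": ["browser-copilot"],  # e.g. unsanctioned usage
    },
    approved={"contract-reviewer", "crm-assistant"},
)
unapproved = [name for name, rec in inventory.items() if not rec["approved"]]
print(unapproved)  # ['browser-copilot']
```

Each entry in the resulting inventory is then a candidate for the action-space mapping in step 2.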

Agentic AI isn't coming. It's here. The organizations that govern it well will build trust, move faster, and avoid the incidents that become cautionary tales for years to come. As for the ones that don't, we'll be covering those stories in future issues.

Sources & Further Reading

Singapore IMDA — Model AI Governance Framework for Agentic AI (January 2026)

OWASP — Top 10 for Agentic Applications 2026 (December 2025)

Cloud Security Alliance — AI Agent Identity Survey (February 2026)

Cisco — State of AI Security 2026

Ivanti — 2026 State of Cybersecurity Report

IBM — Ethics and Governance of Agentic AI (November 2025)

IAPP — AI Governance in the Agentic Era (2025)