The AI You Didn't Buy

When organizations think about AI governance, they almost always start with the AI they built. The machine learning models their data science team developed. The chatbot their innovation lab piloted. The recommendation engine their engineering team deployed. These are visible, tangible, and usually the first items in the AI inventory.

But for most organizations, these internally built systems represent a fraction of their total AI exposure. The far larger surface — and the one most governance programs miss entirely — is the AI they procured, embedded, and inherited from third parties.

Consider what's happened in the last two years. Your CRM platform added AI-powered lead scoring. Your HR system introduced "smart" resume screening. Your customer service platform shipped an AI agent. Your ERP added demand forecasting. Your email security upgraded to AI-based threat detection. Your document management platform began auto-classifying and summarizing content. None of these required an AI procurement decision. They arrived as product updates to systems you already owned.

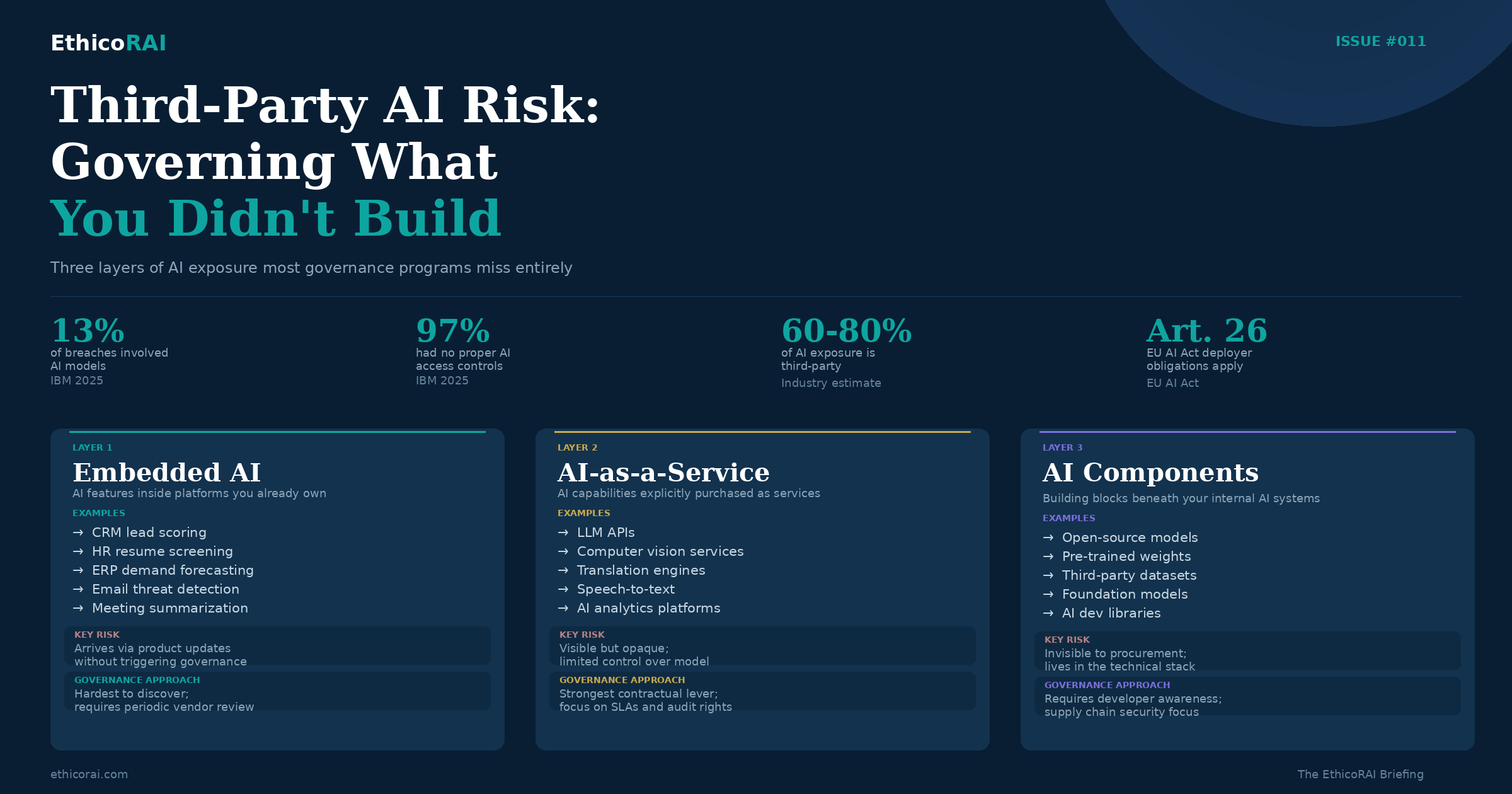

This is the hidden AI portfolio, and it's where the majority of organizational AI risk now lives. IBM's 2025 Cost of a Data Breach Report found that 13% of organizations reported breaches of AI models or applications, with supply chain compromise (through third-party apps, APIs, or plug-ins) the most commonly reported source. Among those breached, 97% had no proper AI access controls in place.

In Issue #001, we established that you can't govern what you can't see. In Issue #005, we tackled Shadow AI — the tools employees adopt without IT knowledge. This issue addresses the other half of the visibility challenge: the AI that arrives through your vendor relationships, procurement decisions, and technology supply chain. Governing it requires a fundamentally different approach from governing AI you built yourself.

The Three Layers of Third-Party AI Exposure

Not all third-party AI is the same. The governance approach, risk profile, and contractual leverage differ significantly depending on how the AI enters your organization. Understanding these three layers is the starting point for any third-party AI governance program.

Layer 1: Embedded AI. AI features built into platforms you already use — often added through product updates rather than explicit procurement decisions. Your CRM's lead scoring algorithm. Your HR platform's resume screening. Your financial software's anomaly detection. Your collaboration tool's meeting summarization.

Embedded AI is the most pervasive and the hardest to govern. You didn't buy "an AI system" — you bought a platform that now has AI in it. The AI may have been activated by default. The vendor may not have prominently disclosed the AI capabilities. Your original procurement assessment — if you did one — evaluated the platform, not the AI. And your existing vendor contract almost certainly predates the AI features and contains no AI-specific provisions.

The risk is compounded by the fact that embedded AI often operates on your most sensitive data. Your HR platform's AI screens candidates using personal data. Your CRM's AI scores customers using behavioral and demographic data. Your financial platform's AI flags transactions using financial records. These aren't low-risk experimental tools — they're AI systems making or influencing consequential decisions using sensitive data, and they arrived without triggering your governance process.

Layer 2: AI-as-a-Service. AI capabilities explicitly purchased as standalone services — large language model APIs, computer vision services, translation engines, speech-to-text platforms, AI-powered analytics tools. Unlike embedded AI, these are deliberate AI procurement decisions. You knowingly bought an AI capability.

The governance challenge here is different. You have more visibility (you know the AI exists) and more contractual leverage (you negotiated a specific AI purchase). But you have limited control over the AI itself. The model is a black box. You can't inspect its training data, modify its architecture, or fully control its behavior. You're dependent on the provider for updates, performance, fairness, and security — and the provider's incentives don't always align with yours.

AI-as-a-Service also introduces dependency risk. If you build critical business processes on a vendor's AI API and that vendor changes the model, raises prices, deprecates the version you're using, or experiences an outage, your operations are directly impacted. Governance needs to address this operational dependency alongside the traditional AI risk dimensions.

Layer 3: AI Components. The building blocks beneath the AI systems your organization develops internally — open-source models, pre-trained weights, third-party training datasets, AI development libraries and frameworks, and foundation models used as base layers for fine-tuning.

This layer is often invisible to governance teams because it lives in the technical stack rather than in procurement records. Your data science team downloaded a pre-trained model from an open-source repository. They used a third-party dataset to fine-tune it. They built on top of a foundation model using an API. Each of these introduces third-party AI risk: unknown training data, potential biases, license restrictions, security vulnerabilities in the supply chain, and provenance gaps that make regulatory compliance harder.

As we discussed in Issue #008, when you use third-party data to train or fine-tune an AI model, you inherit whatever governance gaps exist in that data. The same principle applies to models: when you build on top of a third-party model, you inherit its biases, its limitations, and its blind spots.

The most dangerous third-party AI isn't the one you knowingly procured — it's the one your HR platform or CRM quietly added as a "smart feature" in a quarterly update. If your vendor management process only triggers governance reviews for new procurements, you're systematically missing the AI that arrives through existing contracts. Extend your AI inventory process to include periodic reviews of existing vendor platforms for new AI capabilities.
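One way to picture the periodic review described above is as a diff between two inventory snapshots. The sketch below is illustrative only: the `PlatformSnapshot` type, the platform names, and the feature labels are hypothetical, and it assumes someone has already recorded which AI features each vendor platform exposes at each review.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class PlatformSnapshot:
    """AI features observed on one vendor platform during a periodic review."""
    platform: str
    ai_features: frozenset = field(default_factory=frozenset)


def new_ai_features(previous, current):
    """Return {platform: [features]} for AI features that appeared since the last review."""
    seen = {snap.platform: snap.ai_features for snap in previous}
    findings = {}
    for snap in current:
        added = snap.ai_features - seen.get(snap.platform, frozenset())
        if added:
            findings[snap.platform] = sorted(added)
    return findings


# Hypothetical review data for two existing vendor platforms.
last_quarter = [
    PlatformSnapshot("crm", frozenset()),
    PlatformSnapshot("hr_suite", frozenset({"resume screening"})),
]
this_quarter = [
    PlatformSnapshot("crm", frozenset({"lead scoring"})),
    PlatformSnapshot("hr_suite", frozenset({"resume screening", "interview summaries"})),
]

review_queue = new_ai_features(last_quarter, this_quarter)
for platform, features in review_queue.items():
    print(f"{platform}: needs governance review for {', '.join(features)}")
```

Anything in `review_queue` arrived under an existing contract, so it is exactly the AI that a procurement-triggered process would miss.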

"But We Didn't Build Anything" — Why Responsible AI Applies to You

This is perhaps the most common objection in third-party AI governance, and one that comes up in nearly every conversation with organizations procuring AI. It takes many forms: "We're just using an API, so the provider is responsible for the AI." "We didn't build the model, so how can we be accountable for its outputs?" "Responsible AI is for AI developers, not AI consumers."

The answer has two dimensions — one rooted in organizational values, one in law. Both point to the same conclusion: if you deploy AI that affects people, you are accountable for how it affects them, regardless of who built the model.

The Values Dimension: Your Supply Chain Reflects Your Principles

Consider an analogy from a different industry. If your organization has a commitment to ethical sourcing, you wouldn't knowingly import textiles from a supply chain that employs child labor — even if the country of origin has no law prohibiting it. Your ethical commitment doesn't stop at your organizational boundary. It extends to the suppliers you choose, the standards you require, and the practices you're willing to accept in your supply chain. An organization that says "we don't employ child labor, but we don't ask our suppliers about their practices" hasn't made an ethical commitment. It's made a convenience choice.

AI works the same way. If your organization has responsible AI principles — fairness, transparency, accountability, privacy, safety — those principles apply to all AI that operates under your name and affects your stakeholders. When a vendor's AI system denies a customer's loan application, screens out a job candidate, or flags a transaction as fraudulent, that decision carries your organization's name. The customer doesn't care who built the model. They care that your organization made a decision that affected them.

Responsible AI procurement means aligning your vendor selection, due diligence, and ongoing monitoring with your organization's AI principles — not because a law requires it (though increasingly laws do), but because your principles are meaningless if they only apply to AI you build yourself. An organization that publishes responsible AI commitments while procuring AI systems with no governance scrutiny has a credibility gap that stakeholders, regulators, and customers will eventually notice.

The Legal Dimension: Deployment Creates Obligation

Beyond values, the legal landscape is increasingly clear: deploying AI creates regulatory obligations regardless of who built it. Here's how the major frameworks address this:

EU AI Act. The Act explicitly assigns obligations to "deployers" (organizations that use AI systems under their authority), separate from and in addition to obligations on providers. Under Article 26, deployers of high-risk AI must implement human oversight measures appropriate to the system, monitor the AI system's operation for risks, keep logs generated by the system, inform individuals that they are subject to high-risk AI decision-making, and ensure staff who oversee the AI system have sufficient competence and authority. Article 27 additionally requires certain deployers to conduct fundamental rights impact assessments for specified use cases. These obligations apply whether you built the AI or bought it. The Act recognizes that deployers control the context: they choose the use case, define the affected population, and determine the consequences of AI decisions. That context-level accountability cannot be outsourced to the provider.

For general-purpose AI models (which we'll address in detail below), the Act creates a layered responsibility framework. GPAI providers have their own obligations — technical documentation, training data transparency, copyright compliance. But deployers who build applications on top of GPAI models bear full deployer obligations for their specific use case. The GPAI provider's compliance doesn't discharge your compliance.

Singapore. Singapore's Model AI Governance Framework has consistently emphasized that organizations deploying AI bear governance responsibility proportional to the impact on individuals — regardless of internal versus external development. The 2020 framework established this principle. The 2024 Generative AI governance framework reinforced it for foundation models. And the 2026 Agentic AI framework extends it further with the concepts of action-space and autonomy: whoever deploys an AI agent is responsible for defining and bounding what it can do and how humans oversee it, regardless of who built the underlying model.

Singapore's approach is pragmatic: the entity closest to the deployment context is best positioned to assess and manage risk. A model provider in San Francisco cannot assess the fairness implications of how their model is used by a bank in Singapore to evaluate loan applications for Singaporean customers. That's the deployer's responsibility, informed by local context, local regulation, and local impact.

India. India's Digital Personal Data Protection (DPDP) Act 2023 takes a data-centric approach that has significant implications for AI procurement. The Act places obligations on "data fiduciaries" — the organizations that determine the purpose and means of processing personal data. If you use a vendor's AI model to process personal data of Indian citizens — whether for hiring decisions, customer segmentation, credit assessment, or any other purpose — you are the data fiduciary. The vendor is a "data processor" acting on your behalf. The DPDP Act's obligations — purpose limitation, data minimization, accuracy, consent, and the right to correction and erasure — fall on you as the fiduciary, not on the model provider. You cannot outsource your data protection obligations by outsourcing the AI processing.

India has not yet enacted AI-specific legislation comparable to the EU AI Act, but the combination of the DPDP Act, sector-specific regulations (particularly in financial services via the Reserve Bank of India), and the evolving National AI Strategy creates a governance environment where "we didn't build it" provides no legal shelter for AI deployed on Indian data subjects.

Frame third-party AI governance to your leadership in two sentences: "Our responsible AI principles apply to all AI that affects our stakeholders, regardless of who built it. And in every major jurisdiction we operate in, the law holds us accountable for AI we deploy — not just AI we develop." This reframes the conversation from optional due diligence to organizational imperative.

Why Your Governance Probably Doesn't Cover This

If most organizations' AI risk comes from third-party systems, why do most governance programs focus on internally built AI? Three structural reasons:

Procurement treats AI as regular software. Most vendor risk assessments were designed for traditional software: uptime SLAs, data security, business continuity, and compliance certifications. They don't ask AI-relevant questions: What training data was used? How was the model tested for bias? Can the system explain its decisions? How does the vendor detect and manage model drift? What constitutes an AI incident? The procurement team isn't negligent — they're using an assessment framework that predates the AI capabilities now embedded in the products they're evaluating.

SaaS AI arrives without triggering procurement. When a vendor adds AI features to an existing platform via a product update, it typically doesn't trigger a new procurement process, a new vendor risk assessment, or a new contract negotiation. The AI arrives under the existing agreement, and the existing agreement almost certainly contains no AI-specific provisions. This means AI that may be making consequential decisions about your customers, employees, or operations is operating under a governance vacuum — procured, in effect, without anyone making a conscious decision to adopt AI.

The governance team doesn't know it exists. Unless the AI governance program has a proactive discovery process that periodically scans existing vendor platforms for new AI capabilities, embedded AI remains invisible. It doesn't appear in the AI inventory. It doesn't go through risk assessment. It doesn't get assigned an owner. It operates in a governance blind spot — sometimes for years — until an incident, an audit, or a regulatory inquiry reveals it.

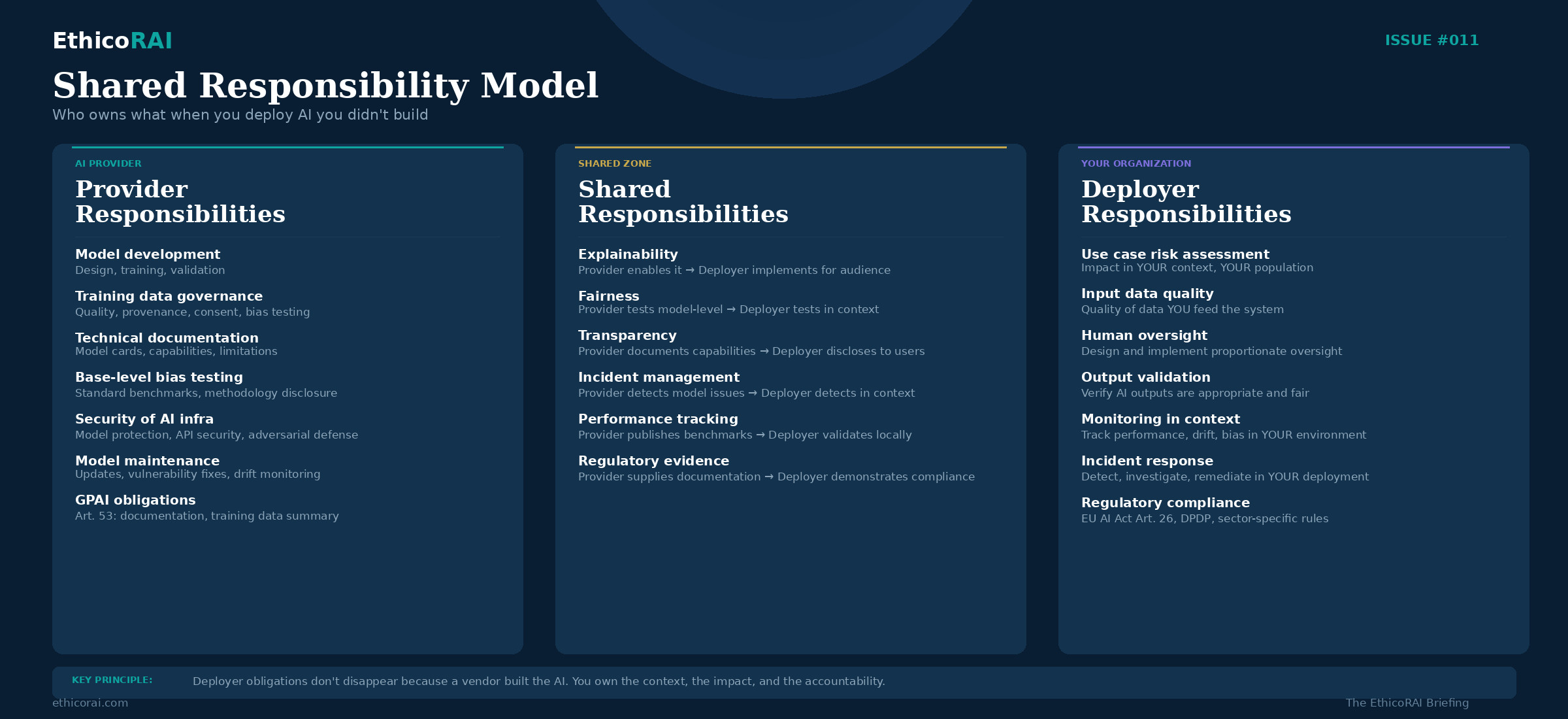

The Shared Responsibility Model

Cloud computing established the concept of shared responsibility: the cloud provider secures the infrastructure, the customer secures their data and applications. AI governance needs an equivalent model — one that clearly delineates what the AI provider is responsible for, what the deploying organization is responsible for, and what falls into a shared zone requiring collaboration.

Provider Responsibilities

The organization that developed and provides the AI system bears responsibility for the model itself and its foundational characteristics. This includes:

Model development and validation. The design, training, testing, and initial validation of the AI model, including architecture decisions, hyperparameter tuning, and performance optimization.

Training data governance. The quality, provenance, representativeness, and legal basis for the data used to train the model, including consent, licensing, and bias testing of the training corpus.

Technical documentation. Providing sufficient documentation for deployers to understand the model's capabilities, limitations, intended use, performance characteristics, and known failure modes. This includes model cards, system descriptions, and integration documentation.

Base-level bias testing. Testing the model for bias across protected characteristics using standard benchmarks and disclosing the methodology and results.

Security of AI infrastructure. Protecting the model, training pipeline, inference infrastructure, and API endpoints against adversarial attacks, unauthorized access, and data breaches.

Ongoing model maintenance. Monitoring model performance, managing updates, addressing discovered vulnerabilities, and communicating material changes to deployers.

Deployer Responsibilities

The organization deploying the AI system bears responsibility for the context in which the AI operates and the impact it has on people. This includes:

Use case risk assessment. Evaluating the risk of using this specific AI system in this specific context, with this specific affected population. The provider tested the model generally; you must assess it for your application.

Input data quality. Ensuring the data you feed into the AI system meets quality, relevance, and compliance standards. The provider built a capable model; if you feed it poor data, the outputs will be poor, and that's your governance failure, not the provider's.

Human oversight. Designing and implementing appropriate human oversight for AI decisions, proportional to the risk level. This includes defining who reviews AI outputs, what authority they have to override, and what training they've received.

Output validation. Verifying that AI outputs are appropriate, accurate, and fair in your operational context. Provider benchmarks don't replace your own testing in your data environment, with your population, in your use case.

Monitoring in context. Tracking AI performance, fairness, and reliability in your deployment environment on an ongoing basis. Drift that the provider doesn't detect at the model level may be visible in your application context.

Incident response. Detecting, investigating, and remediating AI incidents in your deployment. Maintaining your own incident log. Reporting to regulators where required.

Regulatory compliance. Meeting all deployer-side regulatory obligations, including EU AI Act deployer requirements, fundamental rights impact assessments, individual notification, and record-keeping.

Shared Responsibilities

Some governance responsibilities require active collaboration between provider and deployer. Explainability sits in the shared zone — the provider must build the AI system to be sufficiently transparent and provide tools or documentation that enable explanations, but the deployer must implement explainability for their specific audience (regulators, customers, internal teams). A model that is technically explainable but whose explanations are never communicated to affected individuals is a governance failure on the deployer side. Fairness is similarly shared — the provider tests the model for bias at the general level, but the deployer must test for fairness in their specific context. A model that shows no bias on standard benchmarks may exhibit significant bias when applied to a specific population in a specific use case. Contextual fairness testing is the deployer's responsibility, informed by the provider's base-level testing. Transparency follows the same pattern — the provider must document the AI system's capabilities and limitations, but the deployer must disclose AI use to affected individuals and communicate what the AI does in terms those individuals can understand.

For every third-party AI system in your inventory, create a simple three-column document: provider responsibilities, deployer responsibilities, shared responsibilities. Populate it using the framework above, adapted to your specific system and use case. This becomes the foundation for both contractual negotiation (ensuring the provider is committed to their responsibilities) and internal governance (ensuring you're executing yours). If the boundary is unclear for any item, that's a conversation to have with the vendor — ambiguity in shared responsibility is where governance gaps hide.
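The three-column document can be kept as structured data so that unclear boundaries surface automatically. This is a minimal sketch, not a prescribed format: the `Owner` enum, the extra `UNCLEAR` bucket, and the sample entries are assumptions made for illustration.

```python
from enum import Enum


class Owner(Enum):
    PROVIDER = "provider"
    DEPLOYER = "deployer"
    SHARED = "shared"
    UNCLEAR = "unclear"  # hypothetical bucket for items not yet agreed with the vendor


# Illustrative entries for one hypothetical third-party AI system.
matrix = {
    "training data governance": Owner.PROVIDER,
    "use case risk assessment": Owner.DEPLOYER,
    "human oversight": Owner.DEPLOYER,
    "explainability": Owner.SHARED,
    "bias retesting after model updates": Owner.UNCLEAR,
}


def columns(responsibilities):
    """Group responsibilities by owner, preserving the order they were listed in."""
    grouped = {owner: [] for owner in Owner}
    for item, owner in responsibilities.items():
        grouped[owner].append(item)
    return grouped


grouped = columns(matrix)
for owner in (Owner.PROVIDER, Owner.DEPLOYER, Owner.SHARED):
    print(f"{owner.value}: {', '.join(grouped[owner])}")

# Anything left unclear becomes an agenda item for the vendor conversation.
vendor_questions = grouped[Owner.UNCLEAR]
print("raise with vendor:", vendor_questions)
```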

Governing General-Purpose AI Models

When your organization procures a general-purpose AI model — an LLM API, a foundation model for fine-tuning, a multimodal model for building applications — the governance challenge is qualitatively different from procuring a purpose-built AI solution. This distinction matters because general-purpose AI is becoming the default building block for enterprise AI, and the governance playbook for purpose-built systems doesn't fully apply.

What makes GPAI governance different. A purpose-built AI system (a vendor's HR screening tool, a credit scoring model) was designed for a specific use case. The provider assessed risks for that use case, tested fairness in that context, and designed the system with that application in mind. Your governance builds on theirs.

A general-purpose AI model wasn't built for your use case. It was built to be capable across many tasks. The provider doesn't know how you'll use it. They can tell you what the model can do — generate text, analyze images, process code — but they cannot tell you whether it should do what you're planning. This gap between capability and appropriateness is where GPAI governance lives.

Consider a large language model API that your organization plans to use for three different applications: drafting marketing copy, summarizing customer service interactions, and generating preliminary assessments for insurance claims. The same model, the same API, the same provider. But the risk profile of these three applications is fundamentally different. Marketing copy is low-risk — a suboptimal output means a bland email. Summarizing customer interactions is moderate-risk — inaccurate summaries could lead to misguided follow-up actions. Generating insurance claim assessments is high-risk — biased or inaccurate outputs could directly affect individuals' financial outcomes and may constitute a high-risk AI system under the EU AI Act.

The GPAI provider cannot assess these application-level risks for you. They can provide model-level safety testing, content filtering, and general capability benchmarks. But the use-case-specific risk assessment — fairness in your population, appropriateness for your decision context, adequacy of human oversight for your application — is entirely your responsibility.
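The three-application example can be sketched as a simple tiering rule applied per use case rather than per model. The two flags and the rule itself are a deliberate simplification assumed for illustration; they are not a legal classification under the EU AI Act or any other framework.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class UseCase:
    name: str
    affects_individuals: bool  # outputs feed actions taken toward specific people
    consequential: bool        # errors can change financial or similar outcomes


def risk_tier(use_case):
    """Assign a coarse tier from two yes/no questions (hypothetical rule)."""
    if use_case.affects_individuals and use_case.consequential:
        return "high"
    if use_case.affects_individuals or use_case.consequential:
        return "moderate"
    return "low"


# Same model, same API, same provider: three different risk profiles.
use_cases = [
    UseCase("marketing copy", affects_individuals=False, consequential=False),
    UseCase("customer interaction summaries", affects_individuals=True, consequential=False),
    UseCase("insurance claim assessments", affects_individuals=True, consequential=True),
]

tiers = {uc.name: risk_tier(uc) for uc in use_cases}
for name, tier in tiers.items():
    print(f"{name}: {tier}")
```

The point of the sketch is that the tier is a property of the deployment, so the inventory needs one entry per use case, not one per model.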

The EU AI Act's layered approach to GPAI. The EU AI Act addresses this explicitly with a layered regulatory framework for general-purpose AI, set out in Articles 51-56. Under Article 53, all GPAI model providers must provide technical documentation describing the model's capabilities and limitations, make available a sufficiently detailed summary of the training data (including its sources and any known limitations), implement a policy to comply with EU copyright law, and give organizations that build AI systems on top of the model the information they need to meet their own obligations.

GPAI models classified as presenting systemic risk (generally models trained with cumulative compute exceeding a defined threshold, currently set at 10^25 floating-point operations) face additional obligations under Article 55: conducting and documenting model evaluations, including adversarial testing (red-teaming); assessing and mitigating systemic risks; tracking and reporting serious incidents; and ensuring adequate cybersecurity protections.

But here's the critical point: these provider-level obligations do not discharge your deployer-level obligations. If you build a high-risk application on top of a GPAI model, you must meet all high-risk system requirements under the Act — risk management, data governance, technical documentation, human oversight, accuracy and robustness, and conformity assessment — for your specific application. The GPAI provider's compliance with their obligations (Articles 51-56) is necessary but not sufficient for your compliance as a deployer or provider of the downstream application.

What your governance framework should address for GPAI procurement:

1. Define acceptable use cases before deployment. Your responsible AI policy should specify what types of decisions this model can support, what types it cannot, and what risk thresholds trigger additional controls. This isn't about limiting innovation — it's about ensuring that when the model is used for consequential decisions, proportionate governance is in place before deployment, not after an incident.

2. Conduct use-case-specific impact assessments. The GPAI provider's model-level assessment does not replace your application-level assessment. For each use case, assess: Who is affected? What are the consequences of errors? Could the application produce biased outcomes for your specific population? What level of human oversight is appropriate? What happens when the model is wrong? This is the AI System Impact Assessment requirement under ISO 42001 applied to your deployment context — evaluating impact on individuals, groups, and society as we discussed in Issue #004.

3. Implement application-level guardrails. GPAI models are general-purpose — they'll attempt whatever you ask of them. Your governance must implement guardrails appropriate to your risk level: input validation (preventing inappropriate queries), output filtering (catching problematic responses), grounding mechanisms (connecting model outputs to verified data sources), confidence thresholds (routing low-confidence outputs to human review), and scope constraints (limiting the model's operating domain to your intended use case).

4. Manage model updates and version control. GPAI providers update their models — sometimes frequently, sometimes without advance notice. A model update can change behavior in your application: accuracy may improve in some areas and degrade in others; content filters may become more or less restrictive; response patterns may shift in ways that affect your user experience or your fairness metrics. Your governance should contractually require notification of material model changes, maintain the ability to pin to a specific model version, test new model versions in your application context before adopting them, and establish rollback procedures if an update degrades performance or fairness.

5. Address training data and intellectual property risk. GPAI models are trained on vast datasets that may include copyrighted content, personal data, and content from sources that create legal or reputational exposure. When you build on a GPAI model, you inherit potential exposure from its training data. Your due diligence should understand the provider's training data practices, assess IP risk for your specific outputs, implement usage policies that address content attribution and originality verification, and ensure your use of the model complies with licensing terms that may restrict commercial applications or specific use cases.
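The guardrails in item 3 can be pictured as a thin wrapper around the model call. The sketch below covers scope constraints, input validation, output filtering, and confidence routing; grounding is omitted for brevity. Everything here is assumed for illustration: `fake_model` stands in for a real API call, the allowed topics and blocked pattern are hypothetical, and the confidence score is assumed to come from your own scoring step rather than from the provider.

```python
import re
from dataclasses import dataclass


@dataclass
class ModelReply:
    text: str
    confidence: float  # assumed to be produced by your own scoring step


ALLOWED_TOPICS = {"billing", "shipping"}                # scope constraint (hypothetical)
BLOCKED_OUTPUT = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")   # e.g. an SSN-like pattern
CONFIDENCE_FLOOR = 0.7                                  # below this, route to a human


def guarded_call(topic, prompt, model):
    """Apply guardrails around one model call and return (status, detail)."""
    if topic not in ALLOWED_TOPICS:
        return ("rejected", "topic outside approved scope")
    if not prompt.strip():
        return ("rejected", "empty prompt")
    reply = model(prompt)
    if BLOCKED_OUTPUT.search(reply.text):
        return ("blocked", "output matched a blocked pattern")
    if reply.confidence < CONFIDENCE_FLOOR:
        return ("human_review", reply.text)
    return ("ok", reply.text)


def fake_model(prompt):
    """Stand-in for a provider API call; returns canned replies."""
    if "refund" in prompt:
        return ModelReply("Refund likely approved.", 0.4)
    return ModelReply("Your order ships Monday.", 0.95)


print(guarded_call("shipping", "Where is my order?", fake_model))
print(guarded_call("billing", "What is my refund status?", fake_model))
print(guarded_call("hiring", "Rank these candidates", fake_model))
```

Keeping the checks outside the model means they continue to apply unchanged when the provider updates the model underneath you.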

The fundamental governance principle for GPAI procurement: the model provider is responsible for the model's general safety and capability. You are responsible for everything that happens when that model meets your use case, your data, your users, and your regulatory environment. There is no governance shortcut that comes from using a well-known provider's model. The provider's reputation doesn't transfer to your application.
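For the model update controls in item 4 above, one possible shape is a gate that compares a candidate version against the pinned version on your own evaluation set before switching. This is a sketch under stated assumptions: the version names, metric values, and tolerances are all hypothetical, and `fairness_gap` stands for whatever contextual fairness metric your program has chosen.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EvalResult:
    version: str
    accuracy: float      # measured on your own evaluation set
    fairness_gap: float  # e.g. largest outcome-rate difference between groups


def should_adopt(pinned, candidate, max_accuracy_drop=0.01, max_gap_increase=0.02):
    """Adopt the candidate only if neither accuracy nor fairness regresses past tolerance."""
    accuracy_ok = candidate.accuracy >= pinned.accuracy - max_accuracy_drop
    fairness_ok = candidate.fairness_gap <= pinned.fairness_gap + max_gap_increase
    return accuracy_ok and fairness_ok


# Hypothetical results: the new version is more accurate but less fair in this context.
pinned = EvalResult("model-v1", accuracy=0.91, fairness_gap=0.03)
candidate = EvalResult("model-v2", accuracy=0.93, fairness_gap=0.08)

# Staying on the pinned version doubles as the rollback path.
active_version = candidate.version if should_adopt(pinned, candidate) else pinned.version
print("active version:", active_version)
```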

AI Vendor Due Diligence Framework

Vendor due diligence for AI isn't a checkbox exercise — it's a structured conversation that reveals vendor maturity and surfaces the risks you'll need to manage. The framework below covers the essential dimensions, organized around the questions that matter most.

Model Governance and Organizational Maturity

Does the vendor have a formal AI governance program? Is there a named individual or team responsible for responsible AI outcomes? Have they achieved or are they pursuing ISO 42001 certification? Do they publish responsible AI principles, and can they demonstrate how those principles are operationalized — not just stated? Do they maintain an internal AI risk register? Have they undergone third-party assessments or audits of their AI governance practices?

This dimension tells you whether the vendor treats responsible AI as an organizational capability or a marketing statement. Vendors with mature governance will answer these questions readily. Vendors who struggle to answer them are telling you something important about their maturity — and about the governance burden that will fall on you.

Training Data and Model Development

Can the vendor describe the provenance of training data — where it came from, how it was collected, and what consent or licensing basis exists? Can they describe how training data was assessed for representativeness and bias? Do they disclose training data characteristics at a level sufficient for your regulatory compliance (particularly EU AI Act Article 53 requirements for GPAI)? How was the model validated? What testing methodology was used, and on what benchmarks? Can the vendor provide model cards or system descriptions that document capabilities, limitations, intended use, and known failure modes?

Transparency and Explainability

Does the vendor provide sufficient technical documentation for you to understand how the AI system works at a level appropriate for your compliance needs? Can the AI system provide explanations for its outputs or decisions — and if so, in what form and to what level of detail? Does the vendor support your ability to meet transparency obligations — disclosing AI use to affected individuals, explaining the role of AI in decisions, communicating capabilities and limitations?

Performance, Reliability, and Monitoring

What performance metrics does the vendor track, and are AI-specific metrics included — accuracy, precision, recall, hallucination rates, fairness metrics? What SLAs exist for AI-specific performance (not just uptime and latency)? How does the vendor monitor for model drift, performance degradation, and emergent bias? What is the vendor's process for model updates — how frequently do they occur, how are they tested, and how are deployers notified?

Security

How is the AI system protected against adversarial attacks — prompt injection, data poisoning, model extraction, goal hijacking? What access controls govern the model and its training data? Does the vendor conduct adversarial testing (red-teaming)? For AI-as-a-Service, how is customer data isolated? What protections exist against cross-tenant data leakage?

Privacy and Data Handling

Where is data processed — geographically and in terms of infrastructure? Is customer data used for model training or improvement? If so, can this be opted out of? What data retention and deletion practices apply to data submitted to the AI system? How does the vendor handle data subject rights (access, correction, erasure) in the context of AI processing? Is the vendor a data processor under applicable privacy laws (GDPR, DPDP Act), and have appropriate data processing agreements been executed?

Incident Management

How does the vendor define an AI incident — and is that definition documented? What is the notification timeline for AI incidents affecting your deployment? What investigation and root cause analysis capabilities does the vendor have? What access do you have to investigation findings and remediation plans? Does the vendor maintain a record of past AI incidents, and is it willing to share relevant information during due diligence?

Regulatory Alignment

Can the vendor support your EU AI Act compliance — particularly for high-risk systems? Will they provide conformity assessment documentation if needed? Do they maintain compliance with relevant standards (ISO 42001, ISO 27001, SOC 2)? How do they track and respond to regulatory changes that may affect their AI products? For GPAI providers: do they comply with Article 53 obligations (technical documentation, training data summary, copyright compliance)?

Vendor due diligence isn't about finding perfect vendors — none exist. It's about understanding the governance risks you're inheriting and whether those risks are within your capacity to manage. A vendor with a less mature AI governance program may still be acceptable if the use case is low-risk and your organization can compensate with stronger deployer-side controls. A vendor supplying a high-risk system needs to meet a higher standard, because the consequences of governance gaps are more severe and your ability to compensate is more limited.
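The trade-off described above, vendor maturity weighed against use case risk, can be sketched as a gap check across the eight due diligence dimensions. The 0 to 3 scoring scale, the required score per risk tier, and the sample vendor scores are assumptions chosen for illustration, not a recommended rubric.

```python
# The eight dimensions covered by the due diligence questions.
DIMENSIONS = [
    "governance", "training_data", "transparency", "performance",
    "security", "privacy", "incidents", "regulatory",
]

# Hypothetical minimum score (0-3 scale) a vendor should reach per risk tier.
REQUIRED_SCORE = {"low": 1, "moderate": 2, "high": 3}


def maturity_gaps(scores, risk_tier):
    """Return the dimensions where the vendor falls short for this risk tier."""
    required = REQUIRED_SCORE[risk_tier]
    return sorted(d for d in DIMENSIONS if scores.get(d, 0) < required)


# Hypothetical vendor: solid overall, weaker on incident management.
vendor_scores = {d: 2 for d in DIMENSIONS}
vendor_scores["incidents"] = 1

for tier in ("low", "moderate", "high"):
    gaps = maturity_gaps(vendor_scores, tier)
    print(f"{tier}: {gaps if gaps else 'no gaps'}")
```

Each gap is a prompt for a decision: compensate with a deployer-side control, negotiate a contractual commitment, or decline the use case.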

Contractual Safeguards

Due diligence tells you what the vendor's governance looks like today. Contracts define what the vendor is obligated to deliver throughout the relationship. The contract is your primary governance lever for third-party AI — it converts vendor promises into enforceable commitments.

AI Transparency Clauses. The vendor must disclose when AI is used within their product or service — including AI added through product updates after contract execution. The vendor must notify you of material changes to AI models, including updates that could affect performance, fairness, or behavior in your deployment context. The vendor must provide documentation sufficient for your regulatory compliance, updated as the AI system evolves.

Data Use Restrictions. Clear and explicit prohibition on using your data for model training, improvement, or any purpose beyond the contracted service — unless explicitly agreed in writing. This is non-negotiable for regulated industries and any deployment involving personal data. Data processing location requirements — specifying where data is processed and stored, with restrictions on cross-border transfers where applicable. Data deletion rights — the ability to request deletion of all data submitted to the AI system, with verification that deletion has occurred. Data isolation guarantees — particularly for AI-as-a-Service, ensuring your data is not commingled with other customers' data in ways that create privacy or competitive risk.

Performance Commitments. AI-specific SLAs covering accuracy, fairness metrics, availability, and response quality — not just traditional uptime and latency metrics. Defined remediation timeline and process when AI performance degrades below agreed thresholds. Right to receive regular performance reports from the vendor, including fairness metrics and drift indicators.

Incident Notification. Mandatory notification of AI incidents within a defined and short timeframe — 24 to 72 hours depending on severity. Obligation to cooperate in investigation — providing log data, technical analysis, and root cause assessment. Access to remediation plans and timeline for resolution. Right to receive post-incident reports documenting what happened, what was affected, and what was done to prevent recurrence.

Right to Audit.

Audit rights are the teeth of AI governance contracts — they transform trust-based vendor relationships into verifiable ones. But not all audit clauses are created equal. Meaningful audit rights should include:

Right to conduct or commission independent audits of the vendor's AI governance practices, with reasonable notice and at the deployer's cost. This should cover the vendor's AI governance framework, risk assessment processes, bias testing methodology, monitoring practices, and incident management procedures.

Access to bias testing methodology and results — not just a statement that "we tested for bias" but the specific methodology used, the metrics measured, the populations tested, and the findings. A vendor that tests for bias but won't disclose methodology is providing assurance without evidence.

Access to model documentation including model cards, training data descriptions, system architecture summaries, and technical documentation sufficient to support your regulatory compliance — particularly EU AI Act conformity assessment documentation.

Right to audit incident handling — verifying that AI incidents were detected, reported within contractual timeframes, investigated to root cause, and remediated effectively. This is especially important because AI incidents are often ambiguous (was it a bias issue or a data quality issue?) and vendor self-reporting may undercount them.

Defined frequency and scope. Annual audits for high-risk AI systems. Triggered audits following material incidents or significant model changes. Scope defined to cover governance practices, not just security controls (which are typically covered by existing SOC 2 or ISO 27001 audits but don't address AI-specific risks).

The practical reality: Many large AI providers — particularly GPAI providers — will not grant individual customer audit rights. The volume of customers makes it impractical. In these cases, the next-best options are:

SOC 2 Type II with AI-specific controls — look for providers whose SOC 2 reports include AI governance, model management, and fairness controls alongside traditional security controls.

ISO 42001 certification — a third-party-audited management system for AI governance. If a GPAI provider is ISO 42001 certified, an independent auditor has already verified their governance framework against the international standard.

Third-party audit reports — some providers commission independent assessments of their AI practices and make summary findings available to customers.

Industry certifications and attestations — emerging AI-specific attestation standards that provide independent verification of AI governance practices.

Where individual audit rights aren't available, your contract should require the vendor to maintain relevant certifications, provide you with copies of audit reports, and notify you of any material audit findings that affect your deployment.

Regulatory Cooperation. The vendor must support your regulatory compliance obligations — including EU AI Act conformity assessment, fundamental rights impact assessments, and any regulatory inquiries or investigations involving the vendor's AI system. The vendor must provide technical documentation and cooperation required by regulators, within commercially reasonable timeframes.

Exit Provisions. Data portability — the ability to extract all your data in a usable format. Model documentation handover — transfer of all documentation necessary to support transition to an alternative provider. Transition support period — a defined period during which the vendor continues to provide the service while you migrate. Clear terms on what happens to your data when the contract ends — deletion timelines, verification, and certification of destruction.

Review your top 5 AI vendor contracts this week. Check specifically for four provisions: AI transparency obligations (are they required to tell you when AI changes?), data use restrictions (can they use your data for model training?), audit rights (can you or a third party verify their governance practices?), and incident notification requirements (how quickly must they tell you about AI incidents?). If any of these are missing from a contract governing a high-risk AI system, you have a governance gap that needs contractual remediation at the next renewal — or sooner if the risk warrants it.
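The contract review above can be tracked as a simple gap check. The following is a minimal sketch, not a prescribed tool: the `VendorContract` class, the provision keys, and the vendor name in the usage note are illustrative assumptions; only the four provisions themselves come from the checklist.

```python
from dataclasses import dataclass, field

# The four provisions named in the checklist; the key names are illustrative.
ESSENTIAL_PROVISIONS = (
    "ai_transparency",
    "data_use_restrictions",
    "audit_rights",
    "incident_notification",
)


@dataclass
class VendorContract:
    """Hypothetical record of what one AI vendor contract currently contains."""
    vendor: str
    high_risk: bool
    provisions: set = field(default_factory=set)


def contract_gaps(contract: VendorContract) -> list:
    """Return the essential provisions missing from a contract, in checklist order."""
    return [p for p in ESSENTIAL_PROVISIONS if p not in contract.provisions]


def needs_remediation(contract: VendorContract) -> bool:
    """Flag contracts that govern a high-risk system and miss any essential provision."""
    return contract.high_risk and bool(contract_gaps(contract))
```

For example, a high-risk contract recorded as `VendorContract("ExampleCRM", True, {"ai_transparency", "audit_rights"})` would report `data_use_restrictions` and `incident_notification` as gaps and be flagged for remediation.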

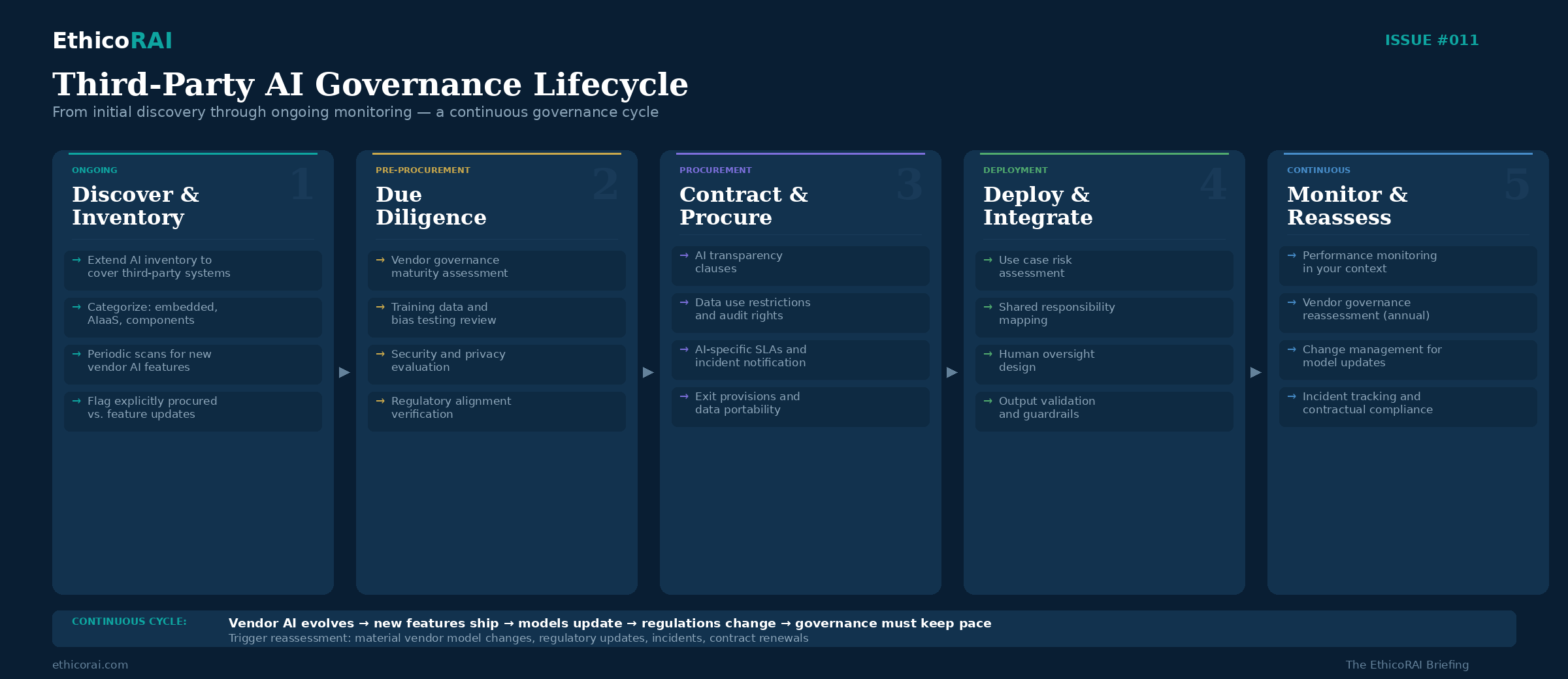

Ongoing Monitoring — Governance Doesn't End at Procurement

Due diligence and contracts establish the foundation. Ongoing monitoring is the operating model that keeps third-party AI governance alive throughout the relationship. Without it, you have a point-in-time assessment that becomes progressively less relevant as the vendor's AI evolves, your usage patterns change, and the regulatory landscape shifts.

Vendor Governance Maturity Reassessment. Periodic reassessment of the vendor's AI governance program — annually for high-risk AI, every two years for moderate-risk. Track whether the vendor has maintained or achieved relevant certifications (ISO 42001, SOC 2 with AI controls). Review published responsible AI reports, transparency reports, and model cards for updates. Monitor industry reporting on vendor AI incidents or controversies.

Performance Monitoring in Your Context. Track AI-specific performance metrics in your operational environment — not just vendor-reported benchmarks. Monitor for drift in accuracy, fairness, and reliability using your own data and your own affected population. Establish alert thresholds that trigger review: a fairness metric that shifts beyond a defined range, an accuracy drop that exceeds tolerance, or an increase in outputs requiring human correction. Document monitoring results — these become evidence for your own regulatory compliance and internal audit.
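The alert thresholds described above can be expressed as a small comparison against a baseline. This is a sketch under stated assumptions: the `Snapshot` and `Thresholds` classes, the field names, and the choice of absolute differences are hypothetical, and the right fairness metric and tolerance values depend on your use case.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Snapshot:
    """Metrics measured on your own data and affected population (illustrative fields)."""
    accuracy: float
    fairness_metric: float   # e.g. a selection-rate ratio between groups
    correction_rate: float   # share of outputs that needed human correction


@dataclass(frozen=True)
class Thresholds:
    """Tolerances agreed in advance; the values are set by your governance function."""
    max_accuracy_drop: float
    max_fairness_shift: float
    max_correction_increase: float


def review_triggers(baseline: Snapshot, current: Snapshot, limits: Thresholds) -> list:
    """Return the names of the thresholds breached relative to the baseline."""
    triggers = []
    if baseline.accuracy - current.accuracy > limits.max_accuracy_drop:
        triggers.append("accuracy_drop")
    if abs(current.fairness_metric - baseline.fairness_metric) > limits.max_fairness_shift:
        triggers.append("fairness_shift")
    if current.correction_rate - baseline.correction_rate > limits.max_correction_increase:
        triggers.append("correction_increase")
    return triggers
```

Storing each snapshot and the returned trigger list alongside a timestamp would give you the documented monitoring record the paragraph calls for.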

Change Management for Vendor Updates. Establish a process for evaluating vendor model updates and AI feature additions before they enter production in your environment. Not every update needs full reassessment — but material changes to high-risk AI systems do. "Material" should be defined in advance: changes to the model architecture, training data, or core capabilities; changes that the vendor identifies as potentially affecting performance or behavior; changes to AI systems classified as high-risk in your inventory.
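Defining "material" in advance means the three criteria above can be written down as a rule rather than decided case by case. A minimal sketch follows; the `VendorUpdate` class and the change-type labels are hypothetical names chosen for illustration.

```python
from dataclasses import dataclass

# Change types treated as material by definition; the labels are illustrative.
MATERIAL_CHANGE_TYPES = frozenset(
    {"model_architecture", "training_data", "core_capabilities"}
)


@dataclass(frozen=True)
class VendorUpdate:
    """Hypothetical record of one vendor model update or AI feature addition."""
    system: str
    change_types: frozenset
    vendor_flagged_impact: bool   # vendor says performance or behavior may change
    system_is_high_risk: bool     # classification taken from your AI inventory


def is_material(update: VendorUpdate) -> bool:
    """Apply the three criteria: change type, vendor flag, or high-risk system."""
    return (
        bool(update.change_types & MATERIAL_CHANGE_TYPES)
        or update.vendor_flagged_impact
        or update.system_is_high_risk
    )
```

Read literally, the third criterion makes every update to a high-risk system material. Some teams may want to narrow it, but that is a policy decision rather than something the code should decide.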

Incident Tracking. Maintain your own log of AI incidents involving third-party systems — don't rely solely on vendor incident reports. Correlate your observations with vendor-reported incidents. Track vendor response times against contractual commitments. Feed incident data into your governance committee's regular reporting and into your internal audit program.
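Tracking vendor response times against contractual commitments only works if your own incident log records when you first observed the problem. The sketch below assumes a hypothetical `IncidentRecord` structure and uses your own observation time as a proxy for when the notification clock started; the vendor's actual awareness time may differ and is usually not visible to you.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional


@dataclass(frozen=True)
class IncidentRecord:
    """One entry in your own log of incidents involving a third-party AI system."""
    vendor: str
    observed_at: datetime                   # when your team first saw the issue
    vendor_notified_at: Optional[datetime]  # when the vendor told you; None if never
    contractual_window: timedelta           # e.g. 24 to 72 hours, per the contract


def notification_breached(record: IncidentRecord, now: datetime) -> bool:
    """True if the vendor's notice arrived, or is still pending, past the deadline.

    Assumption: the clock starts at your own observation time.
    """
    deadline = record.observed_at + record.contractual_window
    if record.vendor_notified_at is None:
        return now > deadline
    return record.vendor_notified_at > deadline
```

Counting breaches per vendor over a reporting period gives the governance committee a simple, evidence-based view of whether notification commitments are being met.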

Connect this to the three lines of defense from Issue #010: first-line teams using the third-party AI system should own day-to-day monitoring. The second-line governance function should review vendor assessments, aggregate third-party AI risk across the portfolio, and manage the vendor reassessment calendar. Third-line internal audit should periodically verify that monitoring is occurring as designed and that contractual commitments are being enforced.

The Provider Trap — When Deployers Become Providers

A critical concept under the EU AI Act that most organizations procuring AI overlook: substantial modification. Under Article 25, if you substantially modify a provider's high-risk AI system, you may be reclassified as a "provider" under the Act — inheriting all provider obligations, including conformity assessment, technical documentation, quality management systems, and post-market monitoring.

Substantial modification can include: fine-tuning a vendor's model on your own data in a way that materially changes its behavior or capabilities; modifying the intended purpose of the AI system, for example using a system designed for one application in a significantly different context; integrating the AI into a system where it performs a different function than originally intended; or modifying the system in a way that affects compliance with the Act's requirements.

This is particularly relevant for organizations building on GPAI models. If you take a foundation model, fine-tune it for a specific application, and deploy it as a product or service, you are likely a provider of that downstream system — not merely a deployer of the foundation model. The GPAI provider's obligations cover the base model. Your obligations cover the application you built.

The practical implication: before modifying any third-party AI system, assess whether the modification crosses the "substantial" threshold. If it does, your governance obligations expand significantly. This assessment should be part of your development process for any project that builds on third-party AI components.

Fine-tuning a foundation model for a specific business application is likely to count as a substantial modification under the EU AI Act when it materially changes the model's behavior, capabilities, or intended purpose. If the resulting application qualifies as high-risk, you bear full provider obligations — conformity assessment, technical documentation, quality management, post-market monitoring — not just deployer obligations. Understand this before you commit to building on third-party models.

Building Your Third-Party AI Governance Program — A Practical Starting Point

If you're starting from scratch on third-party AI governance, here's a prioritized action plan that builds on your existing governance capabilities:

1. Extend your AI inventory to cover third-party systems. Add columns to your AI register: "Built vs. Procured," vendor name, which of the three layers the AI falls into (embedded, AI-as-a-Service, component), and whether the AI was explicitly procured or arrived as a feature update. This single exercise typically reveals 2-3x more AI exposure than organizations expected. Include a periodic review cycle — quarterly at minimum — to capture AI capabilities added through vendor updates.

2. Risk-classify your third-party AI portfolio. Apply the same risk classification framework you use for internally built AI. Which third-party systems are making or influencing consequential decisions? Which process personal data? Which fall under EU AI Act high-risk categories? Prioritize governance attention on the highest-risk third-party systems first.

3. Conduct due diligence on high-risk vendors. Using the framework in this issue, assess the AI governance maturity of vendors supplying your highest-risk AI systems. This doesn't need to be exhaustive for every vendor — start with your top 5-10 by risk, and expand from there.

4. Review and strengthen contracts. For your highest-risk vendor AI relationships, identify contractual gaps and negotiate AI-specific provisions at the next renewal — or sooner for critical gaps. Focus on the four essentials: transparency obligations, data use restrictions, audit rights, and incident notification.

5. Establish ongoing monitoring proportional to risk. Define monitoring requirements for each risk tier. High-risk third-party AI: continuous performance monitoring, annual vendor reassessment, triggered reassessment for material changes. Moderate-risk: quarterly performance review, vendor reassessment every two years. Low-risk: annual review.

6. Update procurement processes. Integrate AI-specific assessment criteria into your standard vendor risk assessment and procurement processes. This ensures that new AI procurements go through appropriate governance from day one, rather than being discovered after deployment. Train procurement teams on what to look for and what questions to ask.

7. Establish a shared responsibility map for critical AI vendors. For each high-risk third-party AI system, document the provider-deployer responsibility split using the shared responsibility model. Make this document the basis for both contractual alignment and internal governance planning.
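Steps 1 to 3 of the plan above can be sketched as a register structure plus two simple queries. Everything here is illustrative: the `RegisterEntry` fields mirror the columns suggested in step 1, while the class names, enum values, and helper functions are assumptions rather than a standard schema.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Origin(Enum):
    BUILT = "built"
    PROCURED = "procured"


class Layer(Enum):
    EMBEDDED = "embedded"
    AI_AS_A_SERVICE = "ai_as_a_service"
    COMPONENT = "component"


class RiskTier(Enum):
    HIGH = 1       # lower value sorts first
    MODERATE = 2
    LOW = 3


@dataclass
class RegisterEntry:
    """One row of the AI register, extended with the third-party columns."""
    system: str
    origin: Origin
    risk_tier: RiskTier
    vendor: Optional[str] = None
    layer: Optional[Layer] = None
    arrived_via_update: bool = False   # True if not explicitly procured


def due_diligence_queue(register: list, limit: int = 10) -> list:
    """Procured systems ordered by risk tier, highest risk first, capped at `limit`."""
    procured = [e for e in register if e.origin is Origin.PROCURED]
    return sorted(procured, key=lambda e: e.risk_tier.value)[:limit]


def arrived_via_update(register: list) -> list:
    """Names of procured systems whose AI capability came in as a feature update."""
    return [
        e.system
        for e in register
        if e.origin is Origin.PROCURED and e.arrived_via_update
    ]
```

A spreadsheet with the same columns serves the same purpose; the point of the sketch is only that the "Built vs. Procured" column, the layer, and the risk tier together are enough to produce a prioritized due diligence list.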

Start with step 1 this week. Add one column to your AI register: "Built vs. Procured." For every procured system, add the vendor name and note whether the AI capability was explicitly purchased or arrived as an embedded feature. This single exercise is the most consistently eye-opening step organizations take in their third-party AI governance journey — and it's the foundation for everything else in this issue.

Sources & Further Reading

EU AI Act — Articles 25-27 (Provider and Deployer Obligations), Articles 51-56 (General-Purpose AI Models)

ISO/IEC 42001:2023 — Annex A Controls (Third-party and customer relationships)

Singapore IMDA — Model AI Governance Framework (2020, 2024, 2026)

India — Digital Personal Data Protection Act 2023

IBM — 2025 Cost of a Data Breach Report

NIST AI Risk Management Framework — GOVERN and MAP Functions

PwC — 2025 US Responsible AI Survey

Cloud Security Alliance — AI Supply Chain Security (2025)

IAPP — AI Governance Profession Report 2025

Next Issue

Issue #012: AI Incident Management: When Things Go Wrong. What counts as an AI incident? How should you respond? We explore incident definitions, response frameworks, learning loops, and why most organizations can't answer the most basic question — "what is an AI incident?" — a finding that surfaces in nearly every governance audit.