The Paper Governance Problem

If you've been following this newsletter, you've built a solid foundation. You understand why strategy and governance must work together (Issue #001). You know the regulatory landscape (Issue #002). You can articulate risk across ten domains (Issue #003). You understand what ISO 42001 requires (Issue #004). You've mapped Shadow AI exposure (Issue #005). And you've seen the data proving governance enables rather than inhibits AI value (Issue #006).

But here's the uncomfortable truth: none of that matters if you can't operationalize it.

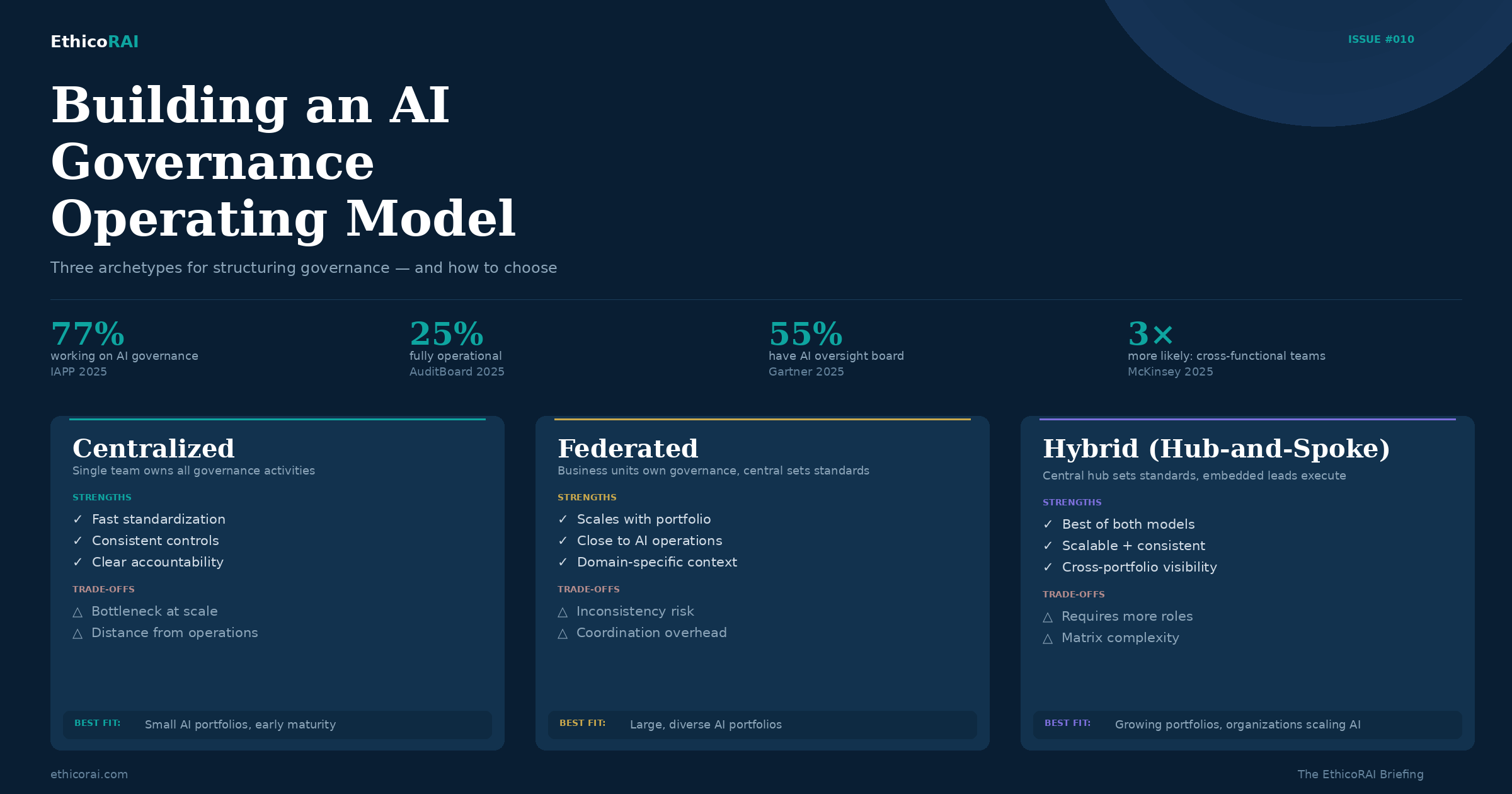

The gap between having governance and doing governance is where most organizations stall. The IAPP AI Governance Profession Report 2025 quantified this precisely: 77% of organizations are working on AI governance, but only 25% are fully operational. That 52-point gap isn't a knowledge problem. It's an operating model problem. Organizations have policies but no defined roles. They have risk frameworks but no escalation paths. They have committees that meet quarterly but make no decisions.

This issue addresses the how. Not what policies to write or what risks to assess — we've covered those. But how to build the organizational machinery that makes governance happen every day, across every AI system, without depending on heroic individual effort.

Why Operating Models Matter

Policies set the direction for AI governance. They articulate principles, define acceptable use, and establish requirements. But policies don't execute themselves. An operating model is what translates policy intent into organizational action — it defines who does what, when, how decisions get made, and where accountability sits.

Consider an analogy. An information security policy might state that all systems must be patched within 30 days of a critical vulnerability disclosure. That's valuable. But without an operating model — patch management teams, scanning schedules, escalation procedures, exception processes — the policy is aspirational at best.

AI governance is no different. You can have an excellent AI ethics policy, a comprehensive risk taxonomy, and full regulatory awareness. But if nobody knows who approves new AI use cases, who monitors deployed systems, who investigates when something goes wrong, or who reports to the board — governance exists on paper but not in practice.

In audits, this gap is visible almost immediately. Organizations present well-crafted governance documents. But ask operational questions, such as "Walk me through the last AI use case that went through your governance process," "Show me the monitoring logs for your highest-risk system," or "Who decided the risk appetite for this deployment?", and the silence reveals the distance between documentation and execution.

Three Governance Archetypes

How should AI governance be structured within an organization? There's no universal answer, but most models fall into three archetypes — each with trade-offs that depend on your AI portfolio size, organizational culture, and existing governance maturity.

Centralized. A dedicated AI governance team owns all governance activities — policy development, risk assessment, monitoring, incident response, regulatory compliance, and board reporting. Everything flows through a single team with direct authority.

This model works well when the AI portfolio is small enough for one team to manage comprehensively, when the organization is early in its governance journey and needs to build consistent foundations, or when rapid standardization is a priority. The risk is that the central team becomes a bottleneck as the AI portfolio grows. If every use case needs central team review, governance slows deployment rather than enabling it — exactly the dynamic we warned against in Issue #001.

Federated. Business units own governance for the AI systems they operate. A small central team sets standards and provides templates, but execution responsibility sits with the teams closest to the AI systems. Each unit has its own governance leads who apply the framework to their context.

This model scales well and keeps governance close to where AI decisions happen. But it risks inconsistency — different business units may interpret risk differently, apply controls unevenly, or develop conflicting practices. Without strong coordination, federated governance can fragment into isolated pockets that look good individually but don't add up to an organization-wide capability.

Hybrid (Hub-and-Spoke). A central governance hub sets policy, defines standards, provides tooling, maintains the AI inventory, and handles board and regulatory reporting. Embedded governance leads within business units (the spokes) execute governance activities — conducting impact assessments, managing day-to-day monitoring, and ensuring local compliance with central standards.

This is the model most commonly adopted by organizations with mature governance programs. The IAPP's 2025 case studies from organizations like Mastercard, BCG, and Randstad revealed a shared pattern: cross-functional structures — what some describe as "villages" of experts from legal, technical, ethical, and business backgrounds — that combine central coordination with distributed execution.

Don't pick an archetype based on theory — pick based on your current reality. If you're just starting, a centralized model lets you build consistent foundations quickly. As your AI portfolio grows beyond what one team can manage, evolve toward hybrid by embedding governance leads in the business units with the most AI activity. The model should grow with your maturity, not be designed for a future state you haven't reached.

The Three Lines of Defense for AI

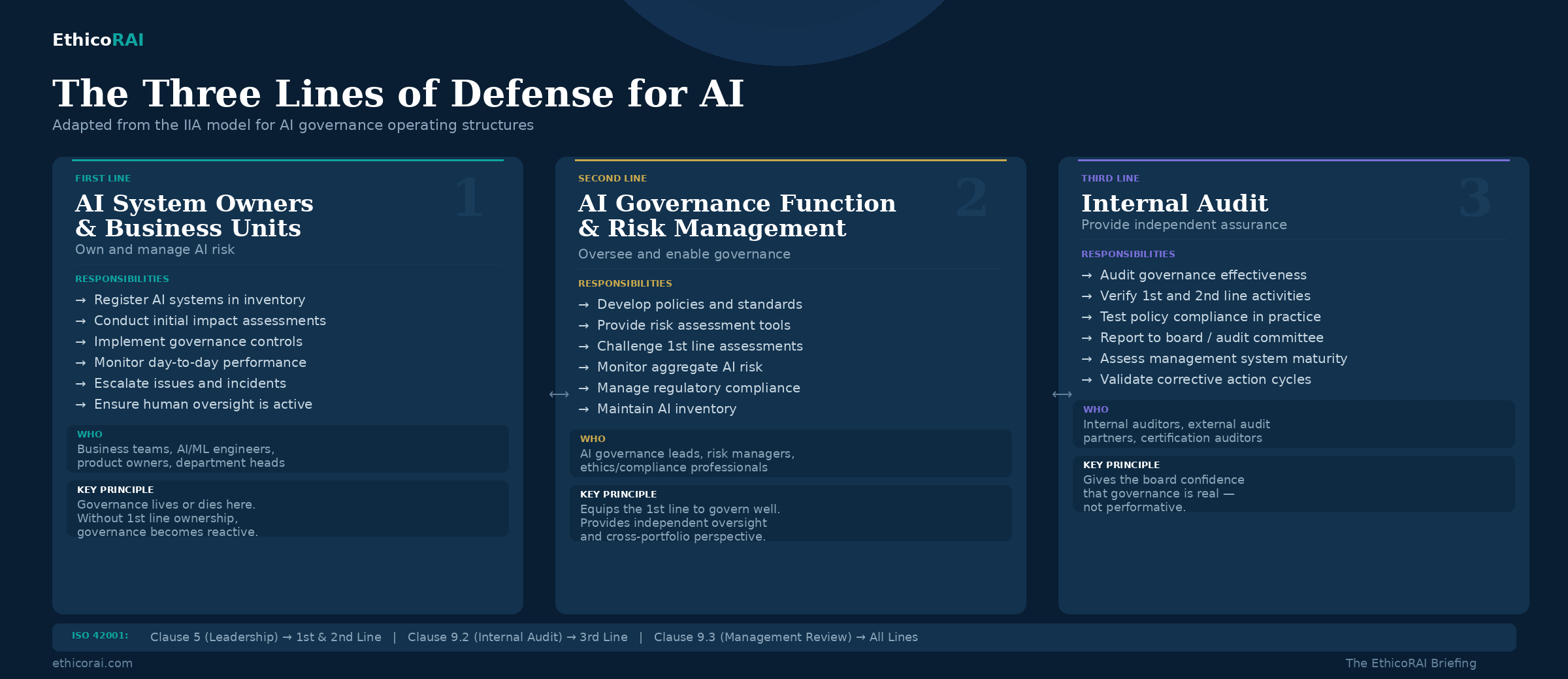

The Three Lines of Defense model, widely used in financial services and increasingly in other regulated industries, provides a powerful structure for distributing governance responsibilities (the Institute of Internal Auditors updated it in 2020 as the Three Lines Model). Adapted for AI, it clarifies who is responsible for what across the organization.

First Line: AI System Owners and Business Units. The teams that build, deploy, and operate AI systems are the first line of governance. Their responsibilities include registering AI systems in the inventory, conducting initial impact assessments, implementing controls specified by the governance framework, monitoring day-to-day performance, escalating issues and incidents, and ensuring human oversight is in place for their systems.

The first line is where governance either lives or dies. If business teams see governance as someone else's problem — something the "governance team" handles — then governance becomes reactive and adversarial. Effective operating models make the first line accountable for governance outcomes, with the central team providing support, not policing.

Second Line: AI Governance Function and Risk Management. The central governance team (or risk function with AI governance responsibilities) provides the framework within which the first line operates. This includes developing and maintaining AI governance policies and standards, providing risk assessment methodologies and tools, independently reviewing and challenging first-line assessments, monitoring aggregate AI risk across the organization, managing regulatory compliance and reporting, and maintaining the organizational AI inventory.

The second line doesn't do governance for the first line — it equips the first line to do governance well, and it provides independent oversight to ensure consistency and quality.

Third Line: Internal Audit. Internal audit provides independent assurance that the governance framework is operating as intended. This includes auditing the effectiveness of AI governance controls, verifying that first-line and second-line activities are functioning, testing whether policies are being followed in practice (not just documented), and reporting to the audit committee or board on governance maturity and gaps.

The third line is what gives boards and senior leadership confidence that governance is real — not performative. It's also what ISO 42001 requires: Clause 9.2 mandates internal audits of the AI management system at planned intervals.

The most dangerous gap in an AI governance operating model is a missing first line. When business teams deploying AI don't see governance as their responsibility, the central governance function becomes a bottleneck at best and a police force at worst — always reactive, always behind, and never close enough to the actual AI systems to govern them effectively. Governance must be embedded where AI decisions happen.
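To make the three lines checkable rather than aspirational, one option is to record who covers each line for every AI system and flag any system where a line has no named party. The sketch below assumes a simple in-memory portfolio; `ThreeLinesAssignment`, `missing_lines`, and the system and team names are hypothetical illustrations, not part of the IIA model or any tool.

```python
from dataclasses import dataclass
from typing import Optional


# Hypothetical record: who covers each line of defense for one AI system.
@dataclass
class ThreeLinesAssignment:
    system: str
    first_line: Optional[str]   # AI system owner / business unit
    second_line: Optional[str]  # AI governance function / risk management
    third_line: Optional[str]   # internal audit


def missing_lines(a: ThreeLinesAssignment) -> list[str]:
    """Return the lines with no named party, first line (most critical) first."""
    gaps = []
    if not a.first_line:
        gaps.append("first line")
    if not a.second_line:
        gaps.append("second line")
    if not a.third_line:
        gaps.append("third line")
    return gaps


# Illustrative portfolio; names are placeholders.
portfolio = [
    ThreeLinesAssignment("credit-scoring-model", "J. Rivera (Lending)",
                         "AI Governance Office", "Internal Audit"),
    ThreeLinesAssignment("hr-resume-screener", None,
                         "AI Governance Office", "Internal Audit"),
]

for assignment in portfolio:
    gaps = missing_lines(assignment)
    if gaps:
        print(f"{assignment.system}: missing {', '.join(gaps)}")
```

Run against this portfolio, only the résumé screener is flagged, and for exactly the gap the paragraph above calls most dangerous: no first-line owner.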

Key Roles and Decision Rights

An operating model needs named roles with clear accountability — not just job descriptions, but defined decision rights. Here are the roles that matter most and the decisions each one owns:

Head of AI Governance / Chief AI Officer. The person ultimately accountable for the organization's AI governance program. This role owns strategic direction, resource allocation, board reporting, and regulatory engagement. They set governance priorities, define risk appetite recommendations for leadership approval, and ensure the operating model evolves with the organization's AI maturity. This role needs organizational authority — governance without executive backing becomes advisory, not operational.

AI Governance Committee. The cross-functional oversight body that makes the decisions no single role should make alone. The committee approves policies, adjudicates risk appetite for high-risk AI systems, reviews aggregate AI risk, and provides strategic direction. We'll address committee design in detail in the next section.

AI System Owners. The most underassigned role in most organizations. Every AI system — whether built internally or procured from a vendor — needs a named individual who is accountable for its governance outcomes. Not the developer, not the vendor, not "IT" — a specific person who owns the risk register entry, ensures monitoring is active, and is answerable when things go wrong. Without system owners, accountability is diffused to the point of meaninglessness.

AI Risk and Ethics Leads. Second-line specialists who provide independent challenge and advisory support to the first line. They review impact assessments, flag risks that system owners may have underestimated, and bring cross-portfolio perspective. In organizations with mature privacy teams, this function often evolves from existing privacy professionals — not because privacy is the most important dimension, but because privacy teams bring transferable experience in impact assessments, cross-functional collaboration, and regulatory compliance.

Data Governance Leads. The bridge between AI governance and the data foundations that AI depends on. As we explored in Issue #008, 76% of organizations cite data quality as the top barrier to trustworthy AI. Data governance leads ensure that AI systems operate on data that meets quality, lineage, privacy, and bias standards. This role is the connective tissue between the AI governance and data governance functions.

McKinsey's State of AI 2025 report found that high-performing AI organizations — those seeing measurable EBIT impact — are three times more likely to have cross-functional governance structures with clear decision rights. The pattern is consistent: it's not which function leads governance that matters most, but whether decision rights are clearly defined and distributed across functions that bring complementary expertise.
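The system-owner rule (a named individual, not the vendor and not "IT") is the easiest decision right to check mechanically. A minimal sketch, assuming an inventory record with a free-text owner field: `AISystemRecord`, `owner_problems`, the placeholder set, and the vendor name `AcmeAI` are all hypothetical.

```python
from dataclasses import dataclass

# Hypothetical placeholder values that name a team or nobody, rather than a person.
NON_INDIVIDUAL_OWNERS = {"it", "vendor", "data science", "tbd", ""}


@dataclass
class AISystemRecord:
    """Minimal, illustrative inventory entry (not a standard schema)."""
    name: str
    source: str      # "internal" or "vendor"
    owner: str       # should be a named, accountable individual
    risk_tier: str   # e.g. "low", "medium", "high"


def owner_problems(record: AISystemRecord, vendor_names: set[str]) -> list[str]:
    """Return reasons the owner field does not identify an accountable individual."""
    owner = record.owner.strip().lower()
    problems = []
    if owner in NON_INDIVIDUAL_OWNERS:
        problems.append("owner is a placeholder or team, not a person")
    if owner in {v.lower() for v in vendor_names}:
        problems.append("owner is the vendor; accountability must sit inside the organization")
    return problems


# Usage: a procured chatbot whose "owner" is recorded as the vendor.
chatbot = AISystemRecord("support-chatbot", "vendor", "AcmeAI", "medium")
print(owner_problems(chatbot, {"AcmeAI"}))
```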

Designing the AI Governance Committee

The governance committee is the decision-making engine of the operating model. Most organizations establish one — Gartner's 2025 poll found that 55% now have an AI board or dedicated oversight committee. But many struggle with composition and effectiveness.

Composition. Effective committees bring together perspectives that no single function can provide: legal and compliance (regulatory requirements, liability), risk management (enterprise risk alignment, risk appetite), technology and engineering (technical feasibility, system architecture), business representatives (use case context, value drivers), data governance (data quality, privacy), and ethics and responsible AI (impact assessment, fairness). A committee of 6-8 members typically provides sufficient breadth without becoming unwieldy.

The composition challenge is finding the right altitude. Committees composed entirely of senior executives bring decision authority but often lack the operational detail to assess AI risks meaningfully — meetings become update sessions rather than working sessions. Committees composed of operational staff bring deep technical and domain knowledge but lack the authority to make risk appetite decisions or allocate resources. The most effective committees blend both: senior sponsors who own decisions, supported by operational members who prepare assessments and bring ground-level insight.

Cadence and authority. Monthly meetings are the minimum for organizations with active AI portfolios; quarterly may suffice for organizations in earlier stages. The committee must have real authority — it should approve or reject high-risk AI deployments, not merely advise. Without decision-making power, committees become discussion forums that generate meeting minutes but no governance outcomes.

Standing agenda. Every meeting should cover three things: new AI use cases submitted for review (pipeline management), active risk and incident updates for deployed systems (portfolio monitoring), and regulatory and standards developments that affect the governance framework (horizon scanning). Additional topics rotate in as needed: audit findings, vendor reviews, policy updates, maturity assessments.

Inputs that drive effectiveness. The committee's quality depends on the quality of what it receives: current AI system inventory with risk classifications, completed impact assessments for new and existing systems, monitoring dashboards showing performance and drift indicators, incident reports and near-miss analyses, and external intelligence on regulatory changes and industry developments.

If you're establishing a committee from scratch, start lean: 6-8 members, monthly cadence, and the three standing agenda items above. Resist the urge to make it comprehensive from day one. Let the committee develop its rhythm, identify what information it needs, and expand scope as it matures. A small committee that makes decisions is infinitely more valuable than a large one that deliberates.
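The composition guidance above (6-8 members covering six perspectives) can be turned into a simple pre-charter check. This is a hypothetical sketch: the perspective labels and `review_composition` are this example's own shorthand, and one member may legitimately cover more than one perspective.

```python
# Hypothetical shorthand for the six perspectives listed under "Composition".
REQUIRED_PERSPECTIVES = {
    "legal_compliance", "risk_management", "technology",
    "business", "data_governance", "ethics_responsible_ai",
}


def review_composition(members: dict[str, set[str]],
                       min_size: int = 6, max_size: int = 8) -> list[str]:
    """Return findings for a proposed committee; an empty list means no issues found.

    `members` maps each member's name or role to the perspectives they represent.
    """
    findings = []
    if not min_size <= len(members) <= max_size:
        findings.append(f"size {len(members)} is outside the {min_size}-{max_size} range")
    covered = set().union(*members.values())
    for perspective in sorted(REQUIRED_PERSPECTIVES - covered):
        findings.append(f"no member covers {perspective}")
    return findings


# Usage: a five-person draft with no ethics or responsible AI seat.
proposed = {
    "General Counsel": {"legal_compliance"},
    "CRO delegate": {"risk_management"},
    "Head of ML Platform": {"technology"},
    "Lending BU lead": {"business"},
    "Chief Data Officer": {"data_governance"},
}
print(review_composition(proposed))
```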

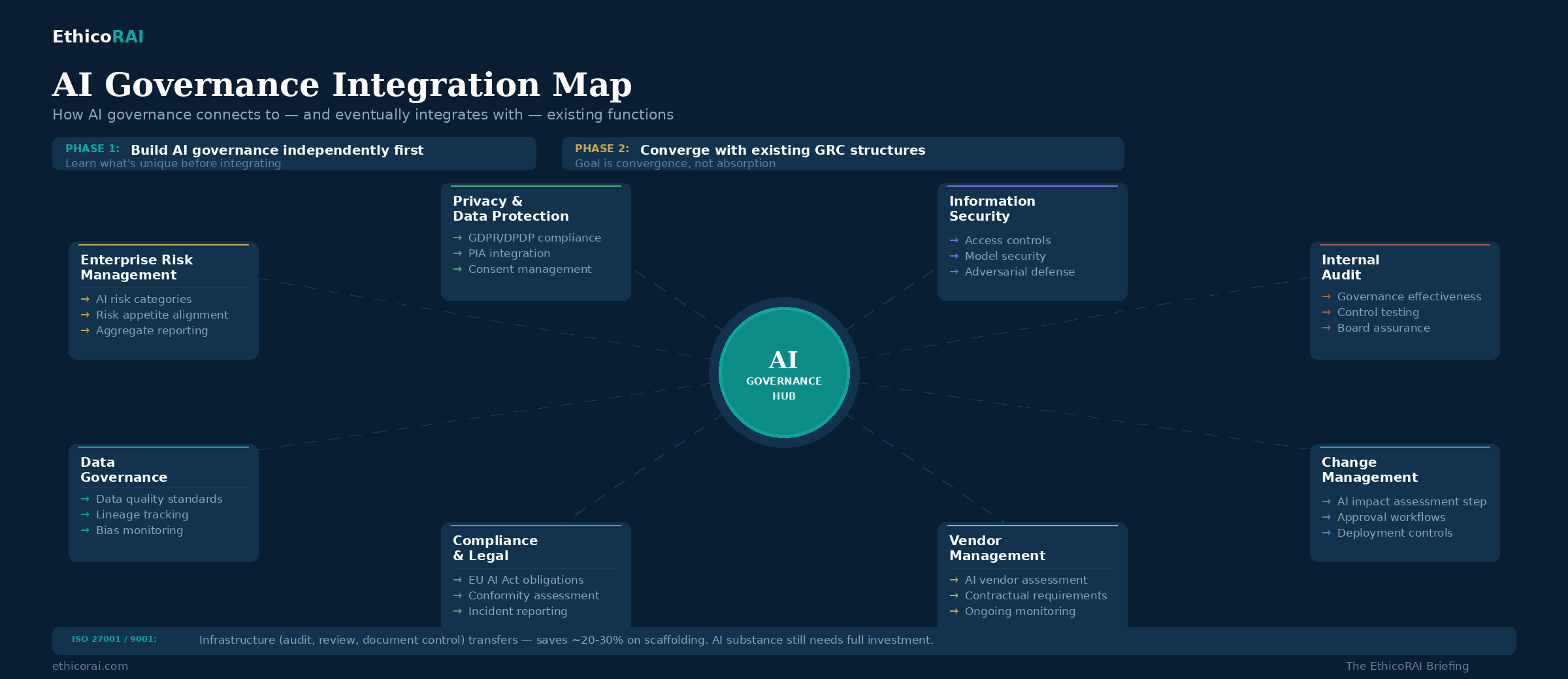

Integrating with Existing GRC — But Not Too Fast

There are two schools of thought on where AI governance should sit organizationally. One says integrate immediately into existing GRC — use your enterprise risk register, your existing committees, your audit programs. The other says start it as a standalone discipline — give the team space to learn, develop AI-specific processes, and build competence without being constrained by existing frameworks that weren't designed for AI.

In practice, the second approach often works better for organizations just starting. AI governance has characteristics that don't map neatly onto traditional GRC: impact assessments that consider societal harm, risk that changes with use case context rather than system properties, monitoring requirements for model drift and emergent behavior, and the challenge that the same AI output can be low-risk or high-risk depending on application — a concept we explored in depth in Issue #004.

Starting with some operational independence lets the AI governance team develop these AI-specific capabilities without forcing them into existing templates that may not fit. A risk register designed for operational risks may not accommodate the probabilistic, context-dependent nature of AI risk. An audit program designed for IT controls may not know how to assess model fairness or explainability. These aren't shortcomings in existing GRC — they're simply different domains that require purpose-built approaches.

The integration should come — but it should come once the AI governance team understands what's genuinely different about AI risk and what can be shared with existing functions. The goal is convergence, not absorption. Specifically:

AI risks belong in the enterprise risk register — but they need AI-specific risk categories, not just a line item under "technology risk." Once the governance team has developed a working risk taxonomy for AI (which you started in Issue #003), those categories should feed into the enterprise view.

AI deployments should use existing change management processes — with AI-specific gates added. Rather than creating a parallel approval process, extend the existing one with an AI impact assessment step and governance review for systems above a defined risk threshold.

Internal audit should include AI in its audit universe — but with auditors who understand AI-specific controls. This may require training existing auditors or bringing in specialist expertise for the first audit cycles.

Vendor management processes should cover third-party AI — a topic we'll explore in depth in the next issue. Your procurement and vendor management teams already assess vendor risk; the extension to AI-specific due diligence is a natural progression.
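To illustrate the change-management point above ("extend the existing process, don't build a parallel one"), the function below takes an existing list of change gates and inserts AI-specific steps only where they apply. The gate names, the three-tier risk scale, and the default `high` review threshold are assumptions for illustration; substitute your own process steps and risk classification.

```python
from dataclasses import dataclass

# Hypothetical existing change-management steps; replace with your own.
BASE_GATES = ["change request", "technical review", "CAB approval"]

# Assumed three-tier risk scale, ordered so tiers can be compared.
RISK_ORDER = {"low": 1, "medium": 2, "high": 3}


@dataclass
class ChangeRequest:
    title: str
    involves_ai: bool
    ai_risk_tier: str = "low"   # only meaningful when involves_ai is True


def required_gates(change: ChangeRequest, review_threshold: str = "high") -> list[str]:
    """Extend the existing gate list with AI steps instead of creating a parallel process."""
    gates = list(BASE_GATES)
    if change.involves_ai:
        # Every AI change gets an impact assessment before technical review.
        gates.insert(1, "AI impact assessment")
        if RISK_ORDER[change.ai_risk_tier] >= RISK_ORDER[review_threshold]:
            # At or above the threshold, governance review precedes final approval.
            gates.insert(gates.index("CAB approval"), "AI governance review")
    return gates


print(required_gates(ChangeRequest("credit model retrain", True, "high")))
```

Non-AI changes pass through the unchanged process, which keeps the AI extension invisible to teams that don't need it.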

Organizations with mature ISO 27001 or ISO 9001 management systems have a structural advantage when building AI governance. The management system infrastructure (document control, internal audit cycles, management review, corrective action processes) transfers directly and saves meaningful time on process design. But this is an infrastructure advantage, not a content advantage. The substance of AI governance (impact assessment on individuals and society, use-case-driven risk classification, model monitoring, explainability requirements) is fundamentally new work. An existing management system certification can cut 20-30% of the implementation effort by reusing process scaffolding. It won't halve the total effort, because the AI-specific substance still requires full investment.

From Model to Maturity — Two Paths

How long does it take to operationalize AI governance? The honest answer: it depends on whether you try to govern everything at once or start with a focused scope and expand.

Path A: Pioneer and Expand (Recommended)

Start with a defined subset — one business unit, one high-risk use case cluster, or one AI deployment domain. Build the full governance cycle there, learn what works, then expand with that knowledge.

Months 1-3: Foundation (Pioneer Scope). Appoint an AI governance lead. Establish the governance committee. Register AI systems within the pioneer scope. Set baseline policies. Conduct first impact assessments. Define the risk assessment process and escalation paths. This phase builds the governance playbook — not as a theoretical exercise, but tested against real AI systems in a real business context.

Months 3-6: Operationalization (Pioneer Scope). Embed first-line responsibilities with the business teams in scope. Run the risk assessment process end-to-end for every AI system in the pioneer area. Establish monitoring cadence — what gets tracked, how often, what triggers escalation. Conduct the first governance review cycle. Most importantly, document what worked and what needed adapting. This documentation becomes the playbook for expansion.

Month 7 onwards: Dual Track. The pioneer scope enters optimization — measure governance effectiveness, automate where possible, refine processes based on real findings, begin aligning with existing GRC structures. Simultaneously, the next scope area begins its own foundation phase — but compressed to 4-8 weeks instead of three months, because policies, templates, escalation paths, and committee structures already exist. Only the scope-specific work — inventory, impact assessment, risk classification — is new.

Each subsequent expansion cycle gets faster as the operating model matures and governance muscle memory builds across the organization. By month 12, a well-executed pioneer-and-expand approach typically has two or three areas fully operational and a proven, repeatable playbook for onboarding the rest.
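The months 3-6 task of establishing monitoring cadence (what gets tracked, how often, what triggers escalation) can be captured as a small rule table that the pioneer team reuses when onboarding later areas. The metric names, check intervals, and limits below are placeholders chosen for illustration, not recommended values; `MonitoringRule` and `escalations` are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class MonitoringRule:
    """One tracked metric: how often it is checked and what triggers escalation."""
    metric: str
    check_every_days: int     # documents the agreed cadence for this metric
    escalate_above: float     # observed values above this limit are escalated


# Illustrative rules for a pioneer-scope system; metrics and limits are assumptions.
RULES = [
    MonitoringRule("prediction_drift_psi", 7, 0.2),
    MonitoringRule("complaint_rate_pct", 30, 1.5),
    MonitoringRule("human_override_rate_pct", 7, 10.0),
]


def escalations(observed: dict[str, float], rules: list[MonitoringRule]) -> list[str]:
    """Return metrics whose latest observed value breaches the escalation limit."""
    return [r.metric for r in rules
            if r.metric in observed and observed[r.metric] > r.escalate_above]


latest = {"prediction_drift_psi": 0.27, "complaint_rate_pct": 0.4}
print(escalations(latest, RULES))
```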

Path B: Enterprise-Wide (Big Bang)

Some organizations — particularly those under immediate regulatory pressure or with a small, well-defined AI portfolio — choose to roll out governance across the entire organization simultaneously. This path follows the same three phases but applied enterprise-wide: foundation across all areas in months 1-3, operationalization enterprise-wide in months 3-9, and optimization from months 9-18.

This path works when the AI portfolio is manageable in size, senior leadership is fully committed, and dedicated resources are available across all business units. But for most organizations, the pioneer-and-expand approach delivers faster learning, lower risk of governance fatigue, and more practical governance outcomes — because it's easier to refine a process that's running in one area than to fix one that's struggling in ten.

If you're starting your governance journey, resist the urge to govern everything at once. Pick your highest-risk AI area — the one where a governance failure would hurt most — and build the full governance cycle there first. The playbook you develop in that pioneer scope becomes the foundation for everything that follows. Speed comes from depth first, then breadth.

The Operating Model Checklist

Before we close, here's a practical diagnostic. If you can answer "yes" to all six, your operating model is functional. If not, the gaps tell you exactly where to invest.

1. Is there a named person accountable for AI governance outcomes? Not advisory, not influential — accountable. With organizational authority and resources to act.

2. Does every AI system have an assigned owner? Not the vendor. Not "IT." A specific individual who owns the risk and governance outcomes for that system.

3. Is there a defined process for new AI use cases? A clear path from "someone wants to deploy AI" to "it's been assessed, approved, and registered" — with decision criteria and escalation points.

4. Are governance responsibilities distributed across three lines? Business teams own first-line governance. A central function provides second-line oversight. Internal audit provides third-line assurance.

5. Does a governance committee meet regularly and make decisions? Not just discuss. Approve. Reject. Escalate. Allocate resources. A committee that produces minutes but not decisions is not governing.

6. Can you demonstrate governance activity — not just documentation? Risk assessments that have been reviewed and updated. Monitoring logs that are being generated. Incident reports that triggered corrective actions. Evidence that the system is alive.

If you answered "yes" to four or fewer, you have a governance framework, not a governance operating model. The framework describes what should happen. The operating model makes it happen.
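The six-question diagnostic can be scored mechanically. In this sketch, six "yes" answers mean a functional operating model and four or fewer mean a framework rather than an operating model, as described above. The article does not name a verdict for exactly five, so the wording for that case is an assumption; `diagnose` and the shortened question labels are hypothetical.

```python
# Hypothetical short labels for the six diagnostic questions, in order.
CHECKLIST = [
    "Named person accountable for AI governance outcomes",
    "Every AI system has an assigned owner",
    "Defined process for new AI use cases",
    "Responsibilities distributed across three lines",
    "Committee meets regularly and makes decisions",
    "Governance activity is demonstrable, not just documented",
]


def diagnose(answers: list[bool]) -> tuple[str, list[str]]:
    """Score the six questions and return a verdict plus the gaps to invest in."""
    if len(answers) != len(CHECKLIST):
        raise ValueError(f"expected {len(CHECKLIST)} answers, got {len(answers)}")
    gaps = [q for q, yes in zip(CHECKLIST, answers) if not yes]
    score = len(CHECKLIST) - len(gaps)
    if score == len(CHECKLIST):
        verdict = "functional operating model"
    elif score <= 4:
        verdict = "governance framework, not yet an operating model"
    else:
        # Assumed wording: the source does not name a verdict for a score of 5.
        verdict = "close to functional: close the remaining gap"
    return verdict, gaps


verdict, gaps = diagnose([True, False, True, True, False, False])
print(verdict)
for gap in gaps:
    print(" -", gap)
```

The returned gap list is the useful part: it names exactly where to invest next.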

Sources & Further Reading

IAPP — AI Governance Profession Report 2025

IBM Institute for Business Value — "How Governance Increases Velocity" (December 2025)

McKinsey & Company — The State of AI 2025

PwC — 2025 US Responsible AI Survey

Gartner — 2025 Executive Leaders Poll

IIA — The Three Lines Model (2020, adapted for AI governance)

AuditBoard — "From Blueprint to Reality" (2025)

ISO/IEC 42001:2023 — Clauses 5 (Leadership), 9.2 (Internal Audit), 9.3 (Management Review)

Next Issue

Issue #011: Third-Party AI Risk: Governing What You Didn't Build. Most organizations' AI exposure comes from systems they didn't develop — embedded AI in SaaS platforms, vendor APIs, and third-party models. We cover vendor due diligence, contractual requirements, and the shared responsibility model for AI you procure but don't control.