The Insurance Claim That Changed Everything

Consider this scenario. A customer submits a health insurance claim. The AI system flags it for denial. The customer calls to ask why. The agent looks at the system, sees a recommendation of "deny" with a confidence score of 87%, and can offer no further explanation. The customer escalates. The compliance team reviews the case and finds that the AI system's decision cannot be traced to any specific factor the customer would recognize. The denial stands, the customer files a complaint with the regulator, and the organization discovers — too late — that it cannot explain a decision that materially affected a person's access to healthcare.

This scenario is not far-fetched. Cases like it are already generating regulatory attention, customer complaints, and legal challenges across industries. It also illustrates why explainability is one of the hardest, and most important, responsible AI objectives to operationalize.

As we covered in Issue #003, explainability is one of nine responsible AI objectives aligned with ISO 42001. But unlike safety or security, which have well-established engineering practices behind them, explainability sits at the intersection of technical capability, regulatory requirement, and human communication. Getting it right requires understanding not just how to generate explanations, but who needs them, what level of detail is appropriate, and how to build explainability into AI systems from design rather than bolting it on as an afterthought.

Explainability vs. Interpretability: A Critical Distinction

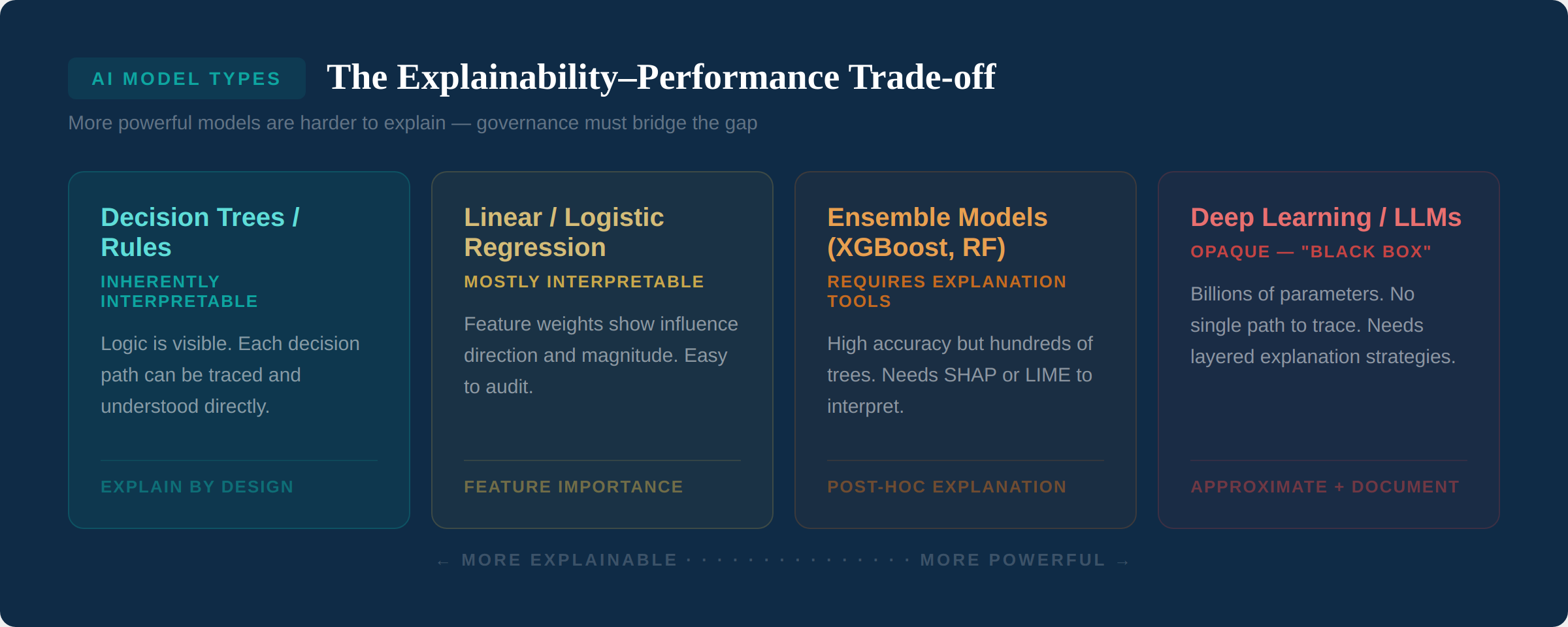

Before we go further, a distinction that matters: interpretability means a model's internal logic can be directly understood by examining its structure. A decision tree is interpretable — you can read every branch and understand exactly how a decision was made. Explainability is broader — it means that a model's behavior can be described in terms that a specific audience can understand, even if the model itself is too complex to inspect directly.

The distinction matters because most modern AI systems — particularly deep learning models and large language models — are not inherently interpretable. They are "black boxes" whose internal operations involve billions of parameters interacting in ways no human can directly follow. For these systems, explainability requires additional techniques that approximate or summarize the model's reasoning in human-understandable terms.

The trade-off is real: the most powerful AI models are typically the least explainable. A simple logistic regression model can be fully explained by listing each feature and its weight. A large language model with 70 billion parameters cannot. This doesn't mean powerful models can't be used in high-stakes decisions — it means they require more investment in explanation techniques and governance processes to compensate for their opacity.
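
To make the logistic regression point concrete, here is a minimal sketch in Python. The feature names, weights, and bias are invented for illustration and do not come from any real model; the point is only that each feature's contribution to the log-odds can be read off directly.

```python
import math

# Hypothetical weights for an illustrative claims model (not from a real system).
WEIGHTS = {"prior_claims": 0.8, "policy_age_years": -0.3, "claim_amount_k": 0.05}
BIAS = -1.0


def explain_logistic(features):
    """Return the predicted probability and each feature's contribution to the log-odds."""
    contributions = {name: WEIGHTS[name] * value for name, value in features.items()}
    log_odds = BIAS + sum(contributions.values())
    probability = 1.0 / (1.0 + math.exp(-log_odds))
    # Rank by absolute contribution so the most influential factors come first.
    ranked = sorted(contributions.items(), key=lambda kv: abs(kv[1]), reverse=True)
    return probability, ranked


probability, ranked = explain_logistic(
    {"prior_claims": 3, "policy_age_years": 2, "claim_amount_k": 10}
)
for name, contribution in ranked:
    print(f"{name}: {contribution:+.2f}")
print(f"probability of denial: {probability:.2f}")
```

Nothing comparable exists for a 70-billion-parameter model, which is why the techniques later in this issue approximate rather than read off the reasoning.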

Who Needs Explanations — and What Kind?

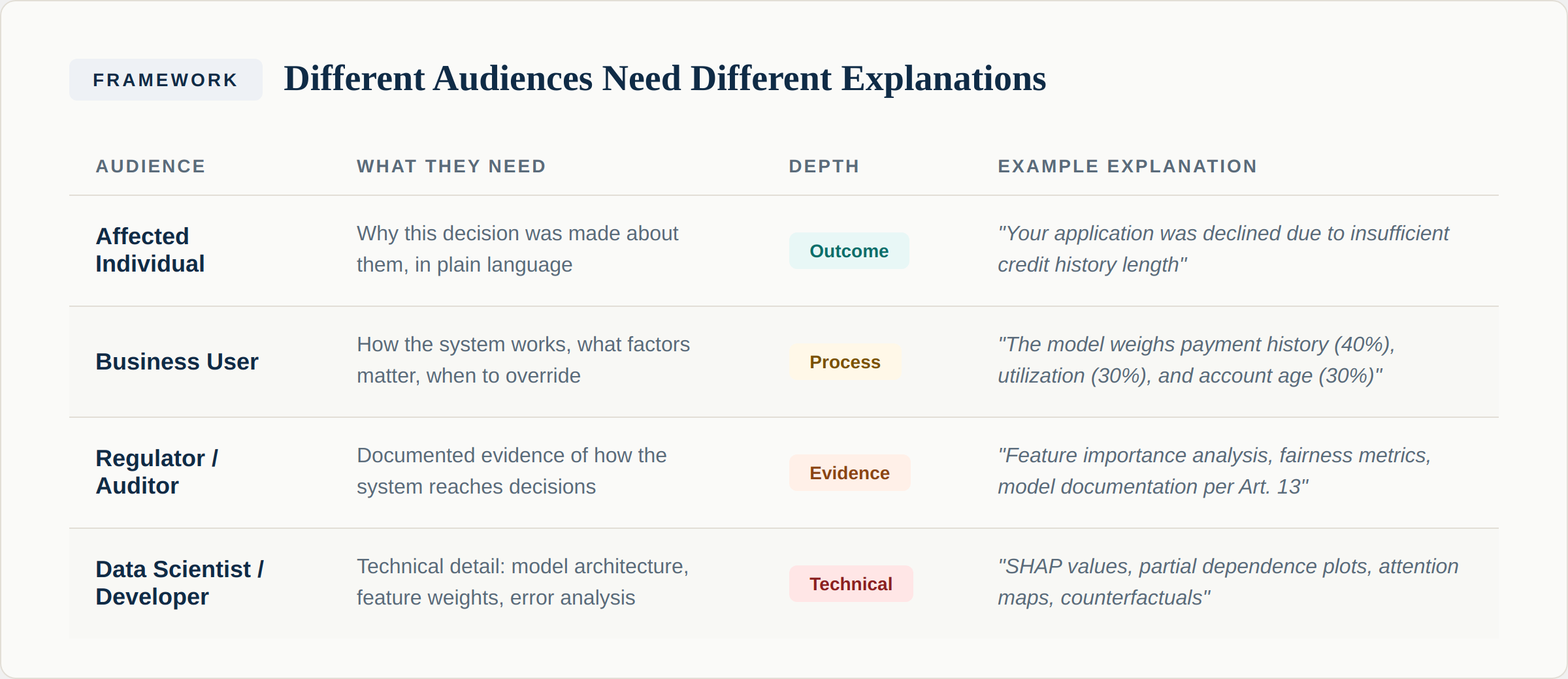

The single most common mistake organizations make with explainability is treating it as a single requirement. It isn't. Different audiences need fundamentally different types of explanations, at different levels of detail, delivered in different formats.

Affected individuals need to understand why a specific decision was made about them, in plain language. They don't need to know the model architecture or the feature weights. They need to know: what was the outcome, what were the main factors that influenced it, and what can they do if they disagree? This is what the EU AI Act's right to explanation of individual decision-making (Article 86) and GDPR's rules on automated decision-making (Article 22, often described as a "right to explanation") are designed to protect. The insurance claim denial in our opening example failed at this level.

Business users — the people who operate AI systems day to day — need process-level understanding. They need to know how the system generally works, what factors it considers, how confident its outputs typically are, and when they should exercise their own judgment rather than following the system's recommendation. This is the human oversight requirement from Article 14 of the EU AI Act: the people overseeing AI systems must understand the system well enough to effectively monitor and override it.

Regulators and auditors need documented evidence. They're not looking for a plain-language summary — they want to see the methodology, the testing results, the fairness metrics, and the documentation that demonstrates the system operates as described. Article 11 of the EU AI Act requires technical documentation; Article 12 requires logging. Together, these create the evidence base that enables regulatory review. As an ISO 42001 auditor, this is exactly what I look for: not whether the organization can explain the AI in theory, but whether they can demonstrate it in practice.

Data scientists and developers need technical detail — model architecture, training methodology, feature importance scores, error analysis, and the specific tools used to generate explanations. This is the level at which debugging happens and where improvements are designed.

For every high-risk AI system, define your explanation strategy by audience. Map each of the four audiences above to a specific explanation type, format, and delivery mechanism. A system that can generate SHAP values for data scientists but can't produce a plain-language reason for the customer it just denied has failed at the most important level of explainability.
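
One way to keep this mapping honest is to record it as data and check it for gaps. The sketch below is an assumption about how such a record might look; the audience keys, the `ExplanationPlan` fields, and the example entries are all hypothetical.

```python
from dataclasses import dataclass

# The four audiences described above, as hypothetical identifiers.
AUDIENCES = ("affected_individual", "business_user", "regulator", "developer")


@dataclass
class ExplanationPlan:
    explanation_type: str
    output_format: str
    delivery: str


def missing_audiences(strategy):
    """Return the audiences that have no explanation plan yet, in a fixed order."""
    return [audience for audience in AUDIENCES if audience not in strategy]


# Illustrative strategy for one system: strong on technical detail, weak elsewhere.
strategy = {
    "developer": ExplanationPlan("SHAP values per prediction", "notebook", "ML platform"),
    "regulator": ExplanationPlan("model card and test results", "PDF", "audit portal"),
}

gaps = missing_audiences(strategy)
print(gaps)
```

A system with this strategy is exactly the failure case described above: the data scientists are covered, the denied customer is not.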

Explanation Techniques: A Practical Overview

The field of explainable AI (XAI) has produced a range of techniques. Here are the ones that matter most for governance professionals:

Feature importance identifies which input variables had the most influence on a model's output. For tabular data models, this is often the most practical explanation technique. It answers the question: "What factors mattered most in this decision?" Techniques include built-in feature importance for tree-based models, permutation importance (shuffling each feature and measuring the impact on predictions), and SHAP (SHapley Additive exPlanations) values, which provide mathematically grounded attribution of each feature's contribution to a specific prediction.
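
To show what "attribution of each feature's contribution" means, here is a brute-force sketch of Shapley values for a toy model. The model, feature names, and baseline are hypothetical. The SHAP library relies on far more efficient approximations; averaging over every feature ordering, as done here, is only practical for a handful of features.

```python
from itertools import permutations


def model(x):
    """Hypothetical risk score: a toy stand-in for a trained model."""
    score = 0.5 * x["prior_claims"] + 0.1 * x["claim_amount_k"]
    if x["prior_claims"] > 2 and x["claim_amount_k"] > 5:
        score += 1.0  # interaction between the two features
    return score


def shapley_values(model, instance, baseline):
    """Average each feature's marginal contribution over all feature orderings."""
    names = list(instance)
    totals = {name: 0.0 for name in names}
    orderings = list(permutations(names))
    for order in orderings:
        current = dict(baseline)
        previous = model(current)
        for name in order:
            # Switch this feature from its baseline value to the actual value.
            current[name] = instance[name]
            updated = model(current)
            totals[name] += updated - previous
            previous = updated
    return {name: total / len(orderings) for name, total in totals.items()}


instance = {"prior_claims": 3, "claim_amount_k": 10}
baseline = {"prior_claims": 0, "claim_amount_k": 0}
attributions = shapley_values(model, instance, baseline)
print(attributions)
```

The attributions sum to the difference between the model's output for this instance and its output for the baseline, which is the property that makes Shapley-based explanations defensible in front of an auditor.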

Local explanations explain individual predictions rather than the model as a whole. LIME (Local Interpretable Model-agnostic Explanations) creates a simple, interpretable model that approximates the complex model's behavior in the neighborhood of a specific prediction. This is particularly useful for generating the individual-level explanations that affected persons and regulators need.
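
The core idea can be sketched without the LIME library itself: perturb the input around one prediction, weight the perturbed samples by how close they are to the original, and fit a weighted linear model. Everything below (the `black_box` function, the sample count, the kernel width) is an illustrative assumption, not LIME's actual implementation.

```python
import math
import random


def black_box(x):
    """Hypothetical opaque model: linear near the origin, sharply nonlinear far from it."""
    return 2.0 * x[0] - 3.0 * x[1] + max(0.0, x[0] - 10.0) ** 2


def solve(a, b):
    """Solve the linear system a @ x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (m[i][n] - sum(m[i][j] * x[j] for j in range(i + 1, n))) / m[i][i]
    return x


def local_surrogate(model, instance, n_samples=500, scale=0.5, seed=0):
    """Fit a proximity-weighted linear model around `instance`.

    Returns [intercept, coef_0, coef_1, ...].
    """
    rng = random.Random(seed)
    d = len(instance) + 1  # +1 for the intercept column
    xtwx = [[0.0] * d for _ in range(d)]
    xtwy = [0.0] * d
    for _ in range(n_samples):
        sample = [v + rng.gauss(0.0, scale) for v in instance]
        dist2 = sum((s - v) ** 2 for s, v in zip(sample, instance))
        weight = math.exp(-dist2 / (2 * scale ** 2))  # closer samples count more
        row = [1.0] + sample
        y = model(sample)
        # Accumulate the weighted normal equations X^T W X and X^T W y.
        for i in range(d):
            xtwy[i] += weight * row[i] * y
            for j in range(d):
                xtwx[i][j] += weight * row[i] * row[j]
    return solve(xtwx, xtwy)


intercept, coef_0, coef_1 = local_surrogate(black_box, [1.0, 1.0])
print(f"local effect of feature 0: {coef_0:+.2f}, feature 1: {coef_1:+.2f}")
```

The coefficients describe the model's behavior only in the neighborhood of this one input. A surrogate fitted around a different input could tell a different story, which is why local explanations should always be labeled as local.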

Counterfactual explanations answer the question: "What would need to change for the outcome to be different?" For example: "Your loan application was denied. If your account history had been 24 months instead of 12, the application would have been approved." These are powerful because they're actionable — they tell the affected person what they can do differently. They're also directly aligned with the spirit of GDPR's right to explanation.
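
A counterfactual can be found by searching for the smallest change that flips the decision. The sketch below uses a made-up approval rule and searches along a single feature; real counterfactual methods search several features at once and restrict themselves to changes the person could plausibly make.

```python
def approve(applicant):
    """Hypothetical approval rule standing in for a trained model."""
    score = 2 * applicant["account_history_months"] + applicant["income_k"]
    return score >= 100


def find_counterfactual(model, applicant, feature, step, max_steps=100):
    """Increase one feature in fixed steps until the decision flips.

    Returns the first feature value that yields approval, or None if none is found.
    """
    candidate = dict(applicant)
    for _ in range(max_steps):
        if model(candidate):
            return candidate[feature]
        candidate[feature] += step
    return None


applicant = {"account_history_months": 12, "income_k": 52}
needed = find_counterfactual(approve, applicant, "account_history_months", step=1)
if needed is not None:
    print(
        f"If your account history had been {needed} months instead of "
        f"{applicant['account_history_months']}, the application would have been approved."
    )
```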

Attention visualization is relevant for transformer-based models (including LLMs). It shows which parts of the input the model focused on most when generating its output. While attention maps don't provide complete explanations of model behavior, they can identify what the model was "looking at" — useful for debugging and for high-level explanations to business users.

Model cards and system documentation are a governance technique rather than a technical one. A model card documents what a model does, how it was built, what data it was trained on, how it performs across different groups, and what its known limitations are. This is the evidence layer that regulators need. For high-risk systems, Article 11 of the EU AI Act requires technical documentation, and a well-maintained model card can supply much of that content.
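
A model card can be kept as structured data so that missing sections are easy to spot. The field names below follow the list in the paragraph above, but the structure itself is a hypothetical sketch, not a standard schema.

```python
from dataclasses import dataclass, field, fields


@dataclass
class ModelCard:
    purpose: str = ""
    how_built: str = ""
    training_data: str = ""
    performance_by_group: dict = field(default_factory=dict)
    known_limitations: list = field(default_factory=list)


def incomplete_sections(card):
    """Return the names of sections that are still empty, in declaration order."""
    return [f.name for f in fields(card) if not getattr(card, f.name)]


# Illustrative, partially completed card for a hypothetical claims model.
card = ModelCard(
    purpose="Flag health claims for manual review",
    training_data="Historical claims data (hypothetical description)",
)
todo = incomplete_sections(card)
print(todo)
```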

You don't need all techniques for every system. Match the technique to the risk level. A recommendation engine might only need aggregate feature importance and a model card. A credit scoring system needs individual SHAP explanations, counterfactuals for declined applicants, documented fairness metrics, and full model documentation. Proportionality is key — over-engineering explainability for low-risk systems wastes resources; under-engineering it for high-risk systems creates liability.

The GenAI Explainability Challenge

Large language models present a unique explainability challenge. Traditional explanation techniques were designed for models that take structured inputs and produce categorical or numerical outputs — approve/deny, score of 0.87, category A. LLMs take unstructured text as input and produce unstructured text as output, with the reasoning embedded in billions of parameters and attention mechanisms.

For GenAI systems, explainability shifts from "explain the model" to "explain the system." This means documenting what the system is designed to do and what it's not designed to do, what data it has access to (particularly for RAG-based systems — which retrieved documents informed this response?), what guardrails and filters are in place, how the system handles uncertainty and edge cases, and what human oversight mechanisms exist.
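
For a RAG-based system, "which retrieved documents informed this response?" becomes answerable only if the system records it at the time. Here is a minimal sketch of such a record; the field names, document IDs, and guardrail labels are hypothetical, and a production system would write these lines to durable, access-controlled storage.

```python
import json
from datetime import datetime, timezone


def build_audit_record(question, answer, retrieved_docs, guardrails_triggered):
    """Capture what the system was asked, what it answered, and what informed the answer."""
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "question": question,
        "answer": answer,
        # Keep only the identifiers and retrieval scores, not the full document text.
        "sources": [{"doc_id": d["doc_id"], "score": d["score"]} for d in retrieved_docs],
        "guardrails_triggered": list(guardrails_triggered),
    }


record = build_audit_record(
    question="Is physiotherapy covered under my plan?",
    answer="Yes, up to the limit stated in your policy schedule.",
    retrieved_docs=[
        {"doc_id": "policy-017", "score": 0.91, "text": "..."},
        {"doc_id": "faq-204", "score": 0.78, "text": "..."},
    ],
    guardrails_triggered=[],
)
line = json.dumps(record)  # one JSON line per response, ready to append to a log
print(line)
```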

Chain-of-thought prompting — asking the model to show its reasoning step by step — provides a form of transparency, but it's important to understand its limitations. The model's stated reasoning may not accurately reflect its actual computational process. It's more like a rationalization than a true explanation. For governance purposes, chain-of-thought is useful as a transparency measure but insufficient as a compliance measure.

Building Explainability Into Your Governance Program

Here's a practical framework for operationalizing explainability:

Step 1: Classify your systems by explanation requirement. Not every AI system needs the same level of explainability. Map each system to the EU AI Act risk tier (from Issue #007) and define the explanation obligations accordingly. High-risk systems need explanations for all four audiences. Limited-risk systems may only need transparency notifications. Minimal-risk systems carry no specific explanation obligations under the Act.

Step 2: Choose explanation techniques during design, not after deployment. Explainability is dramatically easier to build in than to retrofit. When selecting a model architecture, consider its explainability characteristics alongside its performance. If a slightly less complex model provides adequate performance with built-in interpretability, it may be the better governance choice for high-risk applications.

Step 3: Test your explanations with actual users. The most technically accurate explanation is useless if the audience can't understand it. Test customer-facing explanations with customers. Test business user explanations with the actual operators. Test regulatory documentation with your compliance team. Iterate based on feedback.

Step 4: Document everything. Your model cards, explanation methodologies, testing results, and audience-specific explanation templates constitute your compliance evidence. They demonstrate to regulators and auditors that you have thought about explainability systematically and implemented it proportionately.

Step 5: Monitor explanation quality over time. As models are retrained or updated, explanations may become stale or inaccurate. Include explanation validation in your model monitoring process. When the model's behavior changes (drift), verify that the explanations still accurately reflect how the system operates.
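
One simple validation check compares the top-ranked features before and after retraining. The importance scores, the choice of k, and the threshold for raising an alert are all assumptions in this sketch; they would need to be set per system.

```python
def top_k(importances, k):
    """Return the k feature names with the largest absolute importance."""
    ranked = sorted(importances.items(), key=lambda kv: abs(kv[1]), reverse=True)
    return [name for name, _ in ranked[:k]]


def explanation_drift(before, after, k=3):
    """Fraction of the previous top-k features that dropped out of the top-k after retraining."""
    old, new = set(top_k(before, k)), set(top_k(after, k))
    return len(old - new) / k


# Hypothetical global feature importances from two model versions.
before = {"prior_claims": 0.40, "claim_amount_k": 0.30, "policy_age_years": 0.20, "provider_region": 0.10}
after = {"prior_claims": 0.35, "claim_amount_k": 0.15, "policy_age_years": 0.10, "provider_region": 0.40}

drift = explanation_drift(before, after)
if drift > 0.25:  # illustrative threshold
    print(f"Explanation drift {drift:.0%}: review customer-facing explanation templates.")
```

In this example a feature that previously sat outside the top three has become the most influential one, so any plain-language template written for the old model would now be telling customers the wrong story.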

Start with your highest-risk AI system. Write down, right now, how you would explain a specific decision from that system to each of the four audiences: the affected individual, the business user, the regulator, and the developer. If you can't produce a clear, audience-appropriate explanation for all four, you have an explainability gap that needs to be addressed before August 2026, when most of the EU AI Act's obligations for high-risk systems are scheduled to apply.

The Accountability Connection

Explainability and accountability are deeply linked. As we noted in Issue #003, accountability is the cross-cutting dimension that connects all nine responsible AI objectives. You cannot be accountable for a decision you cannot explain. And you cannot explain a decision made by a system you don't understand.

Consider the airline chatbot case we referenced: when the chatbot made incorrect promises to a customer, the airline initially argued it shouldn't be liable because the AI acted independently. That argument failed — the tribunal held that the airline was accountable for its AI system's outputs. Explainability would have allowed the airline to understand why the chatbot produced that response, to identify the root cause, and to fix it. Without explainability, they were left with a system they couldn't control and couldn't defend.

This is why explainability belongs in your governance framework, not just your ML pipeline. It's not a technical feature — it's an organizational capability that enables accountability, builds trust, and satisfies regulatory requirements. The organizations that build this capability now will be the ones that can deploy AI confidently as the regulatory landscape tightens.

Next Issue

Issue #010: Building an AI Governance Operating Model. You've assessed risks, written policies, and mapped regulatory requirements. Now how do you actually operationalize governance? We explore the roles, structures, processes, and tools that turn governance principles into daily practice.